Docker has quickly become one of the most popular open source projects in cloud computing. With millions of Docker Engine downloads, hundreds of meetup groups in 40 countries and dozens upon dozens of companies announcing Docker integration, it’s no wonder the less-than-two-year-old project ranked No. 2 overall behind OpenStack in Linux.com and The New Stack’s top open cloud project survey.

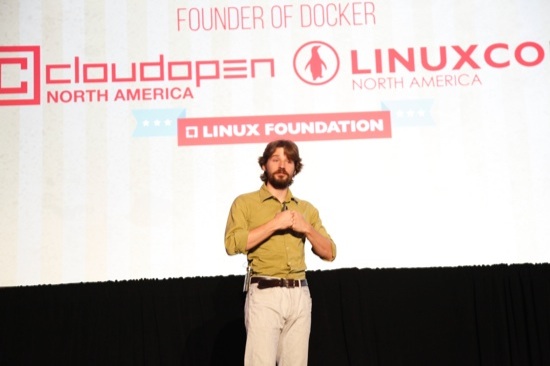

This meteoric rise is still puzzling, and somewhat problematic, for Docker, which is “just trying to keep up” with all of the attention and contributions it’s receiving, founder Solomon Hykes said in his keynote at LinuxCon and CloudOpen on Thursday. Most people who are aware of Docker today don’t necessarily understand how it works or even why it exists, he said, because they haven’t actually used it.

“Docker is very popular, it became popular very fast, and we’re not really sure why,” Hykes said. “My personal theory … is that it was in the right place at the right time for a trend that’s much bigger than Docker, and that is very important for all of us, that has to do with how applications are built.”

Why Docker Exists

Users expect online applications to behave like the Internet – always on and globally available, Hykes said. That’s a problem for developers, who must now figure out how to decouple their applications from the underlying hardware and run them on multiple machines anywhere in the world.

Many companies have become skilled at building such distributed systems and employ hundreds of engineers dedicated to the task. But there isn’t yet a standard, efficient method that still allows for flexibility in how the system is set up and run.

“Everyone is looking for a standardized way to build distributed applications in a way that leverages the available system technologies but packages them in a way that’s accessible to application developers,” Hykes said.

What Docker Does

Docker is a toolkit to build distributed applications in a very specific way. But central to the project’s design philosophy is the idea that developers can pick and choose the tools they need to build applications in the way that best suits their needs and preferences. As a result, not all projects use Docker the same way.

Docker’s first advantage is that it offers a way to package and distribute the components of an application so that it works on a wide array of hardware. This functionality is what Docker is best known for and the source of its signature shipping container analogy.

“In a way Docker is a packaging system of its own,” Hykes said. “It specifies, from source, how to create a tarball with extra metadata, and versioning and a way of transferring a new version with minimal overhead.”
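To make the packaging idea concrete, here is a minimal sketch of "a tarball with extra metadata, and versioning" in Python. It is not Docker's actual image format, which differs in layout and uses layering. The `build_package` and `changed_paths` helpers and the `metadata.json` entry name are hypothetical, chosen only for illustration.

```python
import hashlib
import io
import json
import tarfile


def build_package(files, name, version):
    """Pack `files` (path -> bytes) into an in-memory tarball with a metadata entry.

    The layout and the `metadata.json` name are illustrative assumptions,
    not Docker's real image format.
    """
    metadata = {
        "name": name,
        "version": version,
        # Content digests let a receiver tell which files it already has.
        "digests": {
            path: hashlib.sha256(data).hexdigest()
            for path, data in sorted(files.items())
        },
    }
    entries = dict(files)
    entries["metadata.json"] = json.dumps(metadata, sort_keys=True).encode()

    buffer = io.BytesIO()
    with tarfile.open(fileobj=buffer, mode="w") as archive:
        for path, data in sorted(entries.items()):
            info = tarfile.TarInfo(name=path)
            info.size = len(data)
            archive.addfile(info, io.BytesIO(data))
    return buffer.getvalue(), metadata


def changed_paths(old_metadata, new_metadata):
    """Return the paths whose content is new or different in the newer version."""
    old = old_metadata["digests"]
    return sorted(
        path
        for path, digest in new_metadata["digests"].items()
        if old.get(path) != digest
    )
```

Comparing the metadata of two versions with `changed_paths` shows which files would need to be sent to move from one version to the next, which is one simple way to read "transferring a new version with minimal overhead."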

Second, it offers a sandboxed runtime built on key Linux kernel features, including cgroups and namespaces. The runtime gives application developers more certainty by providing a set of known abstractions that define how the application will run, no matter what hardware is underneath. Examples include how a network is exposed to the process, how to set an environment variable, and how to access the file system.
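The environment-variable example can be illustrated with a small Python sketch. It is not a sandbox and uses neither cgroups nor namespaces; it only shows the narrower idea of declaring up front what a process sees rather than letting it inherit whatever the host happens to provide. The helper name `run_with_known_environment` and the variable names are hypothetical, and the sketch assumes a Unix-like host.

```python
import subprocess
import sys


def run_with_known_environment(code, env):
    """Run Python `code` in a child process that is handed only `env`.

    This is NOT isolation in the Docker sense: no cgroups, no namespaces.
    It only demonstrates a declared environment replacing the host's.
    """
    result = subprocess.run(
        [sys.executable, "-c", code],
        env=env,              # replaces the parent's environment entirely
        capture_output=True,
        text=True,
        check=True,           # raise if the child exits with an error
    )
    return result.stdout.strip()


if __name__ == "__main__":
    snippet = "import os; print(os.environ.get('APP_MODE'))"
    print(run_with_known_environment(snippet, {"APP_MODE": "production"}))
```

The child process reads `APP_MODE` from the declared environment and does not see variables that exist only on the host.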

It’s possible to separate the packaging and distribution from the runtime feature, but developers get the most benefit from combining them.

“You can package bits in a more useful way if you can make assumptions about how they’ll be consumed on the other side.”

Many more features are coming in Docker’s September release and beyond. For example, the team is working on a solution to one common issue: an entire application doesn’t fit into one container, which creates scaling issues.

“The building blocks are there… Linux can do incredible things,” but there are too many options for developers, he said.

“We spend a lot of time digesting all the different ways people hack Docker to do their plumbing and then gradually we pull in the patterns and in the next revision we release a new interface,” Hykes said. He welcomes ideas and feedback and encourages users and developers to get involved in the project.