Cyrus is an advanced IMAP daemon widely used in organizations of all sizes. It is a full-fledged IMAP system and offers some advantages over other freely available IMAP alternatives. It is designed to handle a massive flow of email effectively, and it runs on “sealed” servers where normal users are not permitted to log in. Alongside IMAP, it also supports the POP protocol for email retrieval and provides the ability to set up and manage quotas on emails/accounts. Read more at LinuxPitstop

Track the progress of your remote staff with Hubstaff – Installation on Linux is easy

What is Hubstaff and How it Works

Hubstaff provides a software application for remote team management. It offers a client for all popular operating systems, so the remote staff’s activity and time are tracked properly. It takes occasional screenshots of your employees’ activity so that you can get a better idea of their productivity. Read more at LinuxPitstop

Mobile Phone and Smart Phone Market – Global Industry Analysis and Forecast 2015 – 2021

Cellular phones with basic facilities such as text messaging, voice calling, audio and video playback, and a camera are referred to as mobile phones. Cellular phones that offer advanced computing abilities such as Wi-Fi, web browsing, third-party applications, mobile payment, information management for documents, emails and contacts, built-in GPS applications, and voice and video calling are referred to as smart phones. Apart from being communication devices, smart phones offer additional features such as internet access, Bluetooth, gaming, multimedia messaging, FM radio, and other multimedia functionality. With technological advancements, phablets are gradually gaining traction, which has reduced the rate of adoption of laptops and personal digital assistants globally. Recent years have witnessed a substantial change in the dynamics and structure of the global mobile phone and smart phone landscape. Currently, the mobile phone and smart phone market is expanding owing to factors such as decreased cost, improved design, enhanced mobile browsing and email services, the emergence of new network technologies such as 3G and 4G, improved management of professional and personal data, and the standardization and upgrading of operating systems.

The market is highly competitive, with major players facing strong competition from regional players, which makes it difficult for vendors to retain their market shares. For instance, Nokia has lost a considerable amount of market share in the past two years. Android, iPhone OS, BlackBerry OS, Symbian and Windows are some of the operating systems used in smartphones. The BlackBerry operating system is popular in North America. The iPhone operating system has recently witnessed a high growth rate in North America and is anticipated to keep growing in the forecast period. Increasing internet penetration, together with technological advancements and upgrades to the network infrastructure, is contributing to the growth of the market.

Browse Full Report: http://www.persistencemarketresearch.com/market-research/mobile-phone-smart-phone-market.asp

Currently, the mobile phone and smart phone market is mature in the developed world, with an average of more than one device or subscription per person. Growth now comes from emerging regions such as Asia Pacific, Latin America, Eastern Europe, the Middle East and Africa, where smart phones are proliferating as regional players introduce low-cost products to obtain a competitive edge. China and India are currently the top contributors to this market, and because the market there is still at a nascent stage, it is expected to witness exponential growth in the near future.

Major players in the mobile phone and smart phone market include Apple Inc., Acer Inc., Asustek Computer Inc., Google Inc., Benq Corporation, Hewlett-Packard Company, Huawei Technologies, HTC Corp, LG Electronics, Motorola Inc., Mitac Technology Corp., Nokia Corp., Research In Motion Ltd., Panasonic Corporation, Sagem Wireless, Sony Ericsson, Samsung Electronics Co., Ltd. and Spice Mobility Limited. The market has few entry barriers, so to reduce the threat from new entrants, these players are continuously engaged in developing new products to retain their customer base and, in turn, their market share.

Mobile/Micro Data Center Market – Global Industry Analysis and Forecast 2015 – 2021

Mobile/micro data centers consolidate the maintenance, monitoring and storage of data. These data centers are compatible with existing platforms and operating systems, which reduces the time and cost of developing new platforms for micro data centers. Micro data centers primarily deploy high-speed connectivity and a user-server configuration. This helps data center providers fulfill the service level agreements with their clients. Additionally, these data centers are as reliable as traditional data centers due to the presence of continuous power supply, adequate cooling, safe wiring and high-precision environmental monitoring systems. As a result, they provide site continuity, performance continuity and business continuity. Moreover, they remove the cost of purchasing or leasing a building to house the data center. Micro data centers can also serve as a contingency backup for the primary data center, which increases demand for them as a cost-effective secondary data storage solution.

There are various standards and guidelines for micro data centers developed by the American National Standards Institute (ANSI), the Telecommunication Industry Association (TIA), the National Electric Manufacturers Association (NEMA), the Canadian Standards Association (CSA) and Underwriters Laboratory (UL). The rapid growth in cloud-based services across the IT industry and the rising adoption of wireless connectivity are the major drivers for the mobile/micro data center market. Furthermore, low capital expenditure, features such as scalability and portability, and energy-efficient solutions encourage organizations to use micro data centers. Innovative designs and fast deployment of such data centers are potential opportunities for the growth of this market. However, the lack of awareness about these data centers is hindering the growth of this market.

Browse Full Report: http://www.persistencemarketresearch.com/market-research/mobile-micro-data-center-market.asp

The mobile/micro data centers market is segmented on the basis of organization size, applications, end-use industry, rack size and geography. Both small and medium-sized enterprises (SMEs) and large enterprises have the resources to deploy mobile/micro data centers for applications such as disaster recovery, remote admin support and mobile computing, among others. The low cost and easy installation of these data centers are encouraging a rising number of small and medium organizations to adopt mobile/micro data centers for their operations. The rack size is selected according to the volume of data and the resources available to the organization; it can be 5 to 25 rack units, 26 to 50 rack units, or 51 to 100 rack units. The end-use industry segment of this market is further divided into banking and financial institutions (BFSI), telecommunication, government institutes, and military and defense, among others. The telecommunication segment for mobile/micro data centers is expected to witness tremendous growth in developing economies. In addition, on the basis of geography the market is segmented into North America, Europe, Asia-Pacific and Rest of the World.

Currently, the market for mobile/micro data centers is not very competitive. However, with the growth of data and cloud-based services, the market is expected to become highly competitive over the coming years. Major players dominating the mobile/micro data centers market include AST Modular, Canovate Group, Elliptical Mobile Solutions, Huawei Technologies Co. Ltd., Panduit Corporation, Rittal GmbH, Silicon Graphics, Inc., Zellabox and others.

Storage Networking Market – Global Industry Analysis and Forecast 2015 – 2021

Storage networking, also known as a storage area network, is a network used to provide access to secured, block-level data storage. These networks are used to make mass storage devices (tape libraries, optical jukeboxes and disk arrays) accessible to servers. Storage networking ensures that these storage devices appear as locally attached drives to any operating system. It links all storage devices together in a network and connects them to different operating systems in other IT networks. The storage networking market is primarily driven by the explosion of digital data, which creates a need among enterprises for secure, efficient and effective data storage infrastructure. Storage networking is designed to allow computer systems to share huge volumes of data across high-speed local area networks, owing to which it is expected to be the future of IT storage. Storage networking is used in applications across industry sectors such as government communications, media and services, banking and securities, manufacturing and natural resources, insurance, retail, transportation, healthcare, entertainment and education.

In the information-driven world, the amount of data generated is increasing at a rapid pace. The digital era has made electronic data capture possible for individuals, government agencies and private companies, enabling them to browse, store and share huge volumes of data securely. Private companies have been key contributors to the information explosion, creating large amounts of data. Additionally, the growing implementation of customer relationship management and enterprise resource planning solutions has contributed significantly to the data explosion by generating huge volumes of information about suppliers, partners and customers.

Browse Full Report: http://www.persistencemarketresearch.com/market-research/storage-networking-market.asp

Healthcare and entertainment industries are primary contributors fueling the growth of the global storage networking market. Data explosion is making these two sectors lucrative adopters of storage networking technology. Another key factor responsible for an increased demand for storage networking technology from the entertainment industry is the continuously rising data volume due to propagation of the broadband internet services. For instance, social networking sites such as Facebook and Twitter accumulate huge amounts of data and therefore are potential opportunities for storage networking market. Similarly, rising pressure to store huge volumes of medical data in the healthcare industry is also a key reason forcing this industry to adopt storage networking technology. Growing importance of maintaining patient related data is likely to offer a banquet of opportunities for storage networking industry.

Rising adoption of cost reduction techniques such as server virtualization is likely to boost storage networking market in the near future. Server virtualization integrates various emerging technologies such as Service-oriented architecture (SOA), Green IT and Software as a Service (SaaS) and therefore is a key strategy among other virtualization drives. Additionally, the implementation of centralized servers which requires more networked and distributed storage facilities is anticipated to enhance market growth. Effective working of virtualized environments depends on factors such as, efficiency, scalability, speed of the storage technology and reliability. With such integrated benefits in storage area network, this technology is poised to reap the highest benefits.

Major players in the storage networking market include Cisco Systems, Inc., Brocade Communications Systems, Inc., Coraid, Inc., Dell, Inc., Cutting Edge Networked Storage, EMC Corporation, Hewlett-Packard Company, Emulex Corporation, International Business Machines Corporation, NetApp, Inc., LSI Corporation, NETGEAR, Inc., Overland Storage, Inc., Nexsan Technologies, Inc., and QLogic Corporation.

IoT Security Market – Global Industry Analysis and Forecast 2015 – 2021

The Internet of Things (IoT) connects devices such as industrial equipment and consumer objects to a network, enabling the gathering of information and the management of these devices through software to increase efficiency and enable new services. IoT combines hardware, embedded software, communication services, and IT services. IoT helps create smart communication environments such as smart shopping, smart homes, smart healthcare, and smart transportation. The major components of IoT include WSN (Wireless Sensor Network), RFID (Radio Frequency Identification), cloud services, NFC (Near Field Communication), gateways, data storage & analytics, and visualization elements. IoT helps in the effective management and monitoring of multiple interconnected devices. IoT security can be addressed through network-powered technology.

The Internet of Things has access to an organization’s existing operational technology (OT) and information technology networks, in addition to multiple devices, sensors and other smart objects. Increasing dependence on existing network connectivity gives rise to challenges, including security threats. The priority and focus of the IT network is to protect data confidentiality and secure access, ensuring operational and employee safety. Thus, there is an increased demand for IoT security solutions in the workplace. Companies such as Cisco Systems are trying to develop an approach that combines physical and cyber security components for employee safety and protection of the entire system.

Browse Full Report: http://www.persistencemarketresearch.com/market-research/iot-security-market.asp

To ensure the efficient functioning of devices such as smartphones, tablets, and PDAs in the workplace, it is crucial to maintain network infrastructure security. The global IoT security market can be segmented on the basis of end-users and geography. Based on end-users, the market can be segmented into utilities, automobiles, and healthcare, among others. On the basis of geography, the global IoT security market can be segmented into five major regions: North America, Asia Pacific, Europe, Latin America, and Middle East & Africa.

The need for regulatory compliance is one of the major factors driving market growth. With huge amounts of digital information being transferred between people, the governments of several economies are taking steps to secure networks from hackers and virus threats by establishing strict regulatory frameworks. Compliance with such regulations is therefore expected to support the demand for IoT security solutions. Furthermore, with advancements in technologies such as 3G and 4G LTE, threats such as data hacking have increased, which in turn has forced governments across the globe to establish stringent regulatory frameworks supporting the deployment of IoT security solutions. The emergence of the smart city concept is expected to offer a sound opportunity for market growth in the coming years. Governments in developed economies have already taken steps to develop smart cities by deploying Wi-Fi hotspots at multiple locations within a city. However, the market for IoT security solutions suffers from a high cost of installation. Providing machine-to-machine communication is usually expensive, which has impeded market growth in emerging, cost-sensitive economies.

Some of the key players in the global IoT security market include Cisco Systems, Infineon Technologies, Intel Corporation, Siemens AG, Wurldtech Security, Alcatel-Lucent S.A., Axeda Machine Cloud, Checkpoint Technologies, IBM Corporation, Huawei Technologies Co. Ltd, AT&T Inc., and NETCOM On-Line Communication Services, Inc., among others.

How to Best Manage Encryption Keys on Linux

Storing SSH encryption keys and memorizing passwords can be a headache. But unfortunately in today’s world of malicious hackers and exploits, basic security precautions are an essential practice. For a lot of general users, this amounts to simply memorizing passwords and perhaps finding a good program to store the passwords, as we remind such users not to use the same password for every site. But for those of us in various IT fields, we need to take this up a level. We have to deal with encryption keys such as SSH keys, not just passwords.

Here’s a scenario: I have a server running on a cloud that I use for my main git repository. I have multiple computers I work from. All of those computers need to log into that central server to push to and pull from. I have git set up to use SSH. When git uses SSH, git essentially logs into the server in the same way you would if you were to launch a command line into the server with the SSH command. In order to configure everything, I created a config file in my .ssh directory that contains a Host entry providing a name for the server, the host name, the user to log in as, and the path to a key file. I can then test this configuration out by simply typing the command

ssh gitserver

And soon I’m presented with the server’s bash shell. Now I can configure git to use this same entry to log in with the stored key. Easy enough, except for one problem: For each computer I use to log into that server, I need to have a key file. That means more than one key file floating around. I have several such keys on this computer, and several such keys on my other computers. In the same way everyday users have a gazillion passwords, it’s easy for us IT folks to end up with a gazillion key files. What to do?
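The config entry described above might look something like the following sketch. The host name `git.example.com`, the user `git`, and the key file `id_gitserver` are hypothetical placeholders rather than values from the original setup, and the file is written to a scratch directory so the example cannot overwrite a real `~/.ssh/config`.

```shell
# Sketch only: the host name, user, and key path below are made-up
# placeholders. The file goes to a scratch directory, not ~/.ssh.
demo_dir=$(mktemp -d)
cat > "$demo_dir/config" <<'EOF'
Host gitserver
    HostName git.example.com
    User git
    IdentityFile ~/.ssh/id_gitserver
EOF
# Keep the file private to the current user.
chmod 600 "$demo_dir/config"

# Show the resulting entry.
cat "$demo_dir/config"
```

With an entry like this in `~/.ssh/config`, `ssh gitserver` picks up the host, user, and key from the file, and a git remote can refer to the same `gitserver` alias.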

Cleaning Up

Before starting out with a program to help you manage your keys, you have to lay some groundwork on how your keys should be handled, and whether the questions we’re asking even make sense. And that requires first and foremost that you understand where your public keys go and where your private keys go. I’m going to assume you know:

1. The difference between a public key and private key

2. Why you can’t generate a private key from a public key but you can do the reverse

3. The purpose of the authorized_keys file and what goes in it

4. How you use private keys to log into a server that has the corresponding public key in its authorized_keys file.

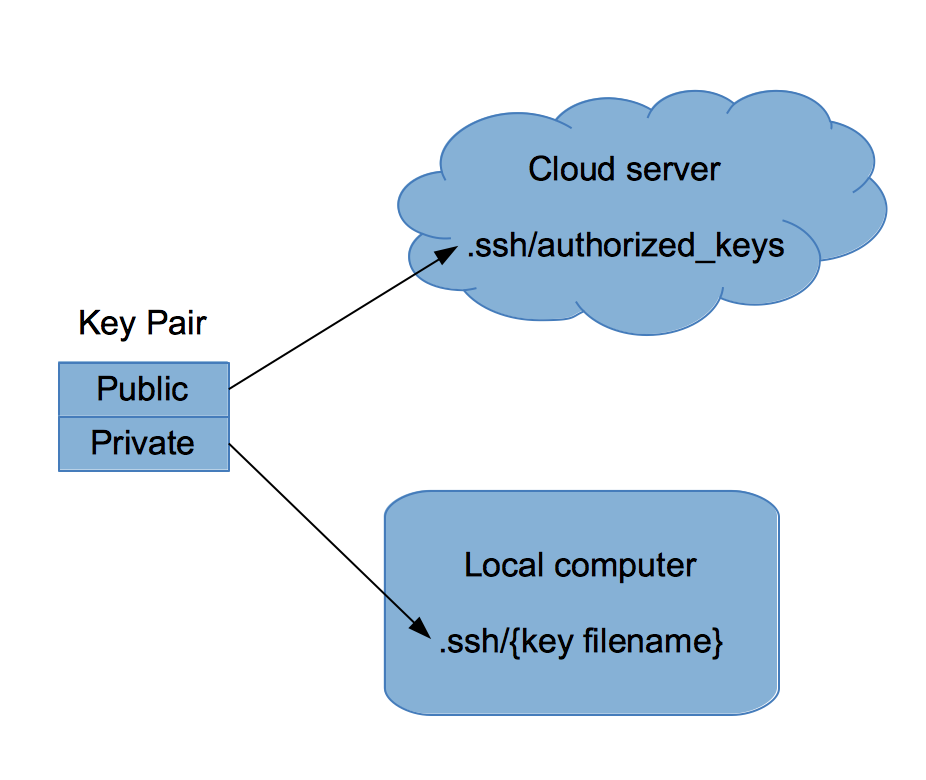

Here’s an example. When you create a cloud server on Amazon Web Services, you have to provide an SSH key that you’ll use for connecting to your server. Each key has a public part and a private part. Because you want your server to stay secure, at first glance it might seem you put the private key onto that server, and that you take the public key with you. After all, you don’t want that server to be publicly accessible, right? But that’s actually backwards.

You put the public key on the AWS server, and you hold onto your private key for logging into the server. You guard that private key and keep it by your side, not on some remote server, as shown in the figure above.

Here’s why: If the public key were to become known to others, they wouldn’t be able to log into the server since they don’t have the private key. Further, if somebody did manage to break into your server, all they would find is a public key. You can’t generate a private key from a public key. And so if you’re using that same key on other servers, they wouldn’t be able to use it to log into those other computers.

And that’s why you put your public key on your servers for logging into them through SSH. The private keys stay with you. You don’t let those private keys out of your hands.

But there’s still trouble. Consider the case of my git server. I had some decisions to make. Sometimes I’m logged into a development server that’s hosted elsewhere. While on that dev box, I need to connect to my git server. How can the dev box connect to the git server? By using the private key. And therein lies trouble. This scenario requires I put a private key on a server that is hosted elsewhere, which is potentially dangerous.

Now a further scenario: What if I were to use a single key to log into multiple servers? If an intruder got hold of this one private key, he or she would gain access to the full virtual network of servers, ready to do some serious damage. Not good at all.

And that, of course, brings up the other question: Should I really use the same key for those other servers? That could be dangerous because of what I just described.

In the end, this sounds messy, but there are some simple solutions. Let’s get organized.

(Note that there are many places you need to use keys besides just logging into servers, but I’m presenting this as one scenario to show you what you’re faced with when dealing with keys.)

Regarding Passphrases

When you create your keys, you have the option to include a passphrase that is required when using the private key. With this passphrase, the private key file itself is encrypted using the passphrase. For example, if you have a public key stored on a server and you use the private key to log into that server, you’ll be prompted to enter a passphrase. Without the passphrase, the key cannot be used. Alternatively, you can configure your key without a passphrase to begin with. Then all you need is the key file to log into the server.

Generally going without a passphrase is easier on the users, but one reason I strongly recommend using the passphrase in many situations is this: If the private key file gets stolen, the person who steals it still can’t use it until he or she is able to find out the passphrase. In theory, this will save you time as you remove the public key from the server before the attacker can discover the passphrase, thus protecting your system. There are other reasons to use a passphrase, but this one alone makes it worth it to me in many situations. (As an example, I have VNC software on an Android tablet. The tablet holds my private key. If my tablet gets stolen, I’ll immediately revoke the public key from the server it logs into, rendering its private key useless, with or without the passphrase.) But in some cases I don’t use it, because the server I’m logging into might not have much valuable data on it. It depends on the situation.

Server Infrastructure

How you design your infrastructure of servers will impact how you manage your keys. For example, if you have multiple users logging in, you’ll need to decide whether each user gets a separate key. (Generally speaking, they should; you don’t want users sharing private keys. That way if one user leaves the organization or loses trust, you can revoke that user’s key without having to generate new keys for everyone else. And similarly, by sharing keys they could log in as each other, which is also bad.) But another issue is how you’re allocating your servers. Do you allocate a lot of servers using tools such as Puppet, for example? And do you create multiple servers based on your own images? When you replicate your servers, do you need to have the same key for each? Different cloud server software allows you to configure this how you choose; you can have the servers get the same key, or have a new one generated for each.

If you’re dealing with replicated servers, it can get confusing if the users need to use different keys to log into two different servers that are otherwise similar. But on the other hand, there could be security risks by having the servers share the same keys. Or, on the third hand, if your keys are needed for something other than logging in (such as mounting an encrypted drive), then you would need the same key in multiple places. As you can see, whether you need to use the same keys across different servers is not a decision I can make for you; there are trade offs, and you need to decide for yourself what’s best.

In the end, you’re likely to have:

– Multiple servers that need to be logged into

– Multiple users logging into different servers, each with their own key

– Multiple keys for each user as they log into different servers.

(If you’re using keys in other situations, as you likely are, the same general concepts will apply regarding how keys are used, how many keys are needed, whether they’re shared, and how you handle private and public parts of keys.)

Method of safety

Knowing your infrastructure and unique situation, you need to put together a key management plan that will help guide you on how you distribute and store your keys. For example, earlier I mentioned that if my tablet gets stolen, I will revoke the public key from my server, hopefully before the tablet can be used to access the server. As such, I can allow for the following in my overall plan:

1. Private keys are okay on mobile devices, but they must include a passphrase

2. There must exist a way to quickly revoke public keys from a server.
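One way to satisfy the second rule is a small helper that strips a key from an authorized_keys file by matching its trailing comment. This is a minimal sketch under stated assumptions: `revoke_key` is a hypothetical helper name, the key lines are fake, and the demonstration runs against a scratch file rather than a real `~/.ssh/authorized_keys`.

```shell
# revoke_key FILE MARKER  (hypothetical helper, not a standard tool)
# Removes every line of FILE that contains MARKER, matched as a fixed
# string. MARKER is typically the comment at the end of a key line.
revoke_key() {
    keys_file=$1
    marker=$2
    tmp=$(mktemp) || return 1
    status=0
    # grep -v exits 1 when no lines remain; only treat >1 as an error.
    grep -vF -- "$marker" "$keys_file" > "$tmp" || status=$?
    if [ "$status" -gt 1 ]; then
        rm -f "$tmp"
        return 1
    fi
    # Overwrite in place so the file keeps its existing permissions.
    cat "$tmp" > "$keys_file"
    rm -f "$tmp"
}

# Demonstration on a scratch file with two fake keys.
demo_keys=$(mktemp)
printf '%s\n' \
    'ssh-ed25519 AAAAexamplekey1 user@laptop' \
    'ssh-ed25519 AAAAexamplekey2 user@tablet' > "$demo_keys"
revoke_key "$demo_keys" 'user@tablet'
cat "$demo_keys"   # only the user@laptop line remains
```

Matching on the comment only works if every key was installed with a distinct, meaningful comment, which is worth adding to the plan as a rule of its own.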

In your situation, you might decide you just don’t want to use passphrases for a system you log into regularly; for example, the system might be a test machine that the developers log into many times a day. That’s fine, but then you’ll need to adjust your rules a bit. You might include a rule that that machine is not to be logged into from mobile devices. In other words, you need to build your protocols based on your own situation, and not assume one size fits all.

Software

On to software. Surprisingly, there aren’t a lot of good, solid software solutions for storing and managing your private keys. But should there be? Consider this: If you have a program storing all your keys for all your servers, and that program is locked down by a quick password, are your keys really secure? Or, similarly, if your private keys are sitting on your hard drive for quick access by the SSH program, is key management software really providing any protection?

But for overall infrastructure and creating and managing public keys, there are some solutions. I already mentioned Puppet. In the Puppet world, you create modules to manage your servers in different ways. The idea is that servers are dynamic and not necessarily exact duplicates of each other. Here’s one clever approach that uses the same keys on different servers, but uses a different Puppet module for each user. This solution may or may not apply to you.

Or, another option is to shift gears altogether. In the world of Docker, you can take a different approach, as described in this blog regarding SSH and Docker.

But what about managing the private keys? If you search, you’re not going to find many software options, for the reasons I mentioned earlier; the private keys are sitting on your hard drive, and a management program might not provide much additional security. But I do manage my keys using this method:

First, I have multiple Host entries in my .ssh/config file. I have an entry for hosts that I log into, but sometimes I have more than one entry for a single host. That happens if I have multiple logins. I have two different logins for the server hosting my git repository; one is strictly for git, and the other is for general-purpose bash access. The one for git has greatly restricted rights on that machine. Remember what I said earlier about my git keys living on remote development machines? There we go. Although those keys can log into one of my servers, the accounts used are severely limited.

Second, most of these private keys include a passphrase. (To avoid having to type the passphrase multiple times, consider using ssh-agent.)

Third, I do have some servers that I want to guard a bit more carefully, and I don’t keep a Host entry for them in my config file. This is more a matter of obscurity than real protection, because the key files are still present, but it might take an intruder a bit longer to locate the key files and figure out which machines they go with. In those cases, I just type out the long ssh command manually. (It’s really not that bad.)
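The first point above, two Host entries for one machine, could be sketched as follows. Every alias, host name, user, and key path here is a hypothetical placeholder; `IdentitiesOnly yes` is a standard ssh_config option that tells ssh to offer only the listed key, so the restricted and general-purpose logins do not get mixed up.

```shell
# Sketch only: aliases, users, and key paths are made-up placeholders.
# Written to a scratch directory, not to a real ~/.ssh/config.
multi_dir=$(mktemp -d)
cat > "$multi_dir/config" <<'EOF'
# Restricted account used only for git push/pull
Host gitserver-git
    HostName git.example.com
    User git
    IdentityFile ~/.ssh/id_git_only
    IdentitiesOnly yes

# General-purpose shell account on the same machine
Host gitserver-shell
    HostName git.example.com
    User admin
    IdentityFile ~/.ssh/id_shell
    IdentitiesOnly yes
EOF

# Count the Host entries that were written.
grep -c '^Host ' "$multi_dir/config"
```

Only the key for the restricted alias would need to live on a remote development box; the shell key stays on machines you control.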

And you can see that I’m not using any special software to manage these private keys.

One Size Doesn’t Fit All

We occasionally get questions at linux.com for advice on good software for managing keys. But let’s take a step back. The question actually needs to be re-framed, because there isn’t a one-size-fits-all solution. The questions you ask should be based on your own situation. Are you simply trying to find a place to store your key files? Are you looking for a way to manage multiple users each with their own public key that needs to be inserted into the authorized_keys file?

Throughout this article, I’ve covered the basics of how all this fits together, and hopefully at this point you’ll see that how you manage your keys, and whatever software you look for (if you even need additional software at all), should happen only after you ask the right questions.

What You Need to Know About Fedora’s Switch From Yum to DNF

If you’re a fan of Fedora, and you’ve upgraded to release 22, you might have noticed a major change under the hood. The familiar (and long-standing) Yum package manager is gone. In its place is the much more powerful and intelligent Dandified Yum (DNF).

Instead of just marching forward, as if DNF were there all along, let’s take a look at why this happened, why it’s a good thing, and how the new package management system is used.

Before we do this, understand that those of you who never touch the command line (which on a Fedora system might be a bare minimum) will not see anything different. The GUI front-end remains (pretty much) the same; it’s all still point-and-click goodness. If, however, you are a fan of installing software from the command line, you will notice a quick flash of new text when you go to issue the command:

yum install PACKAGENAME

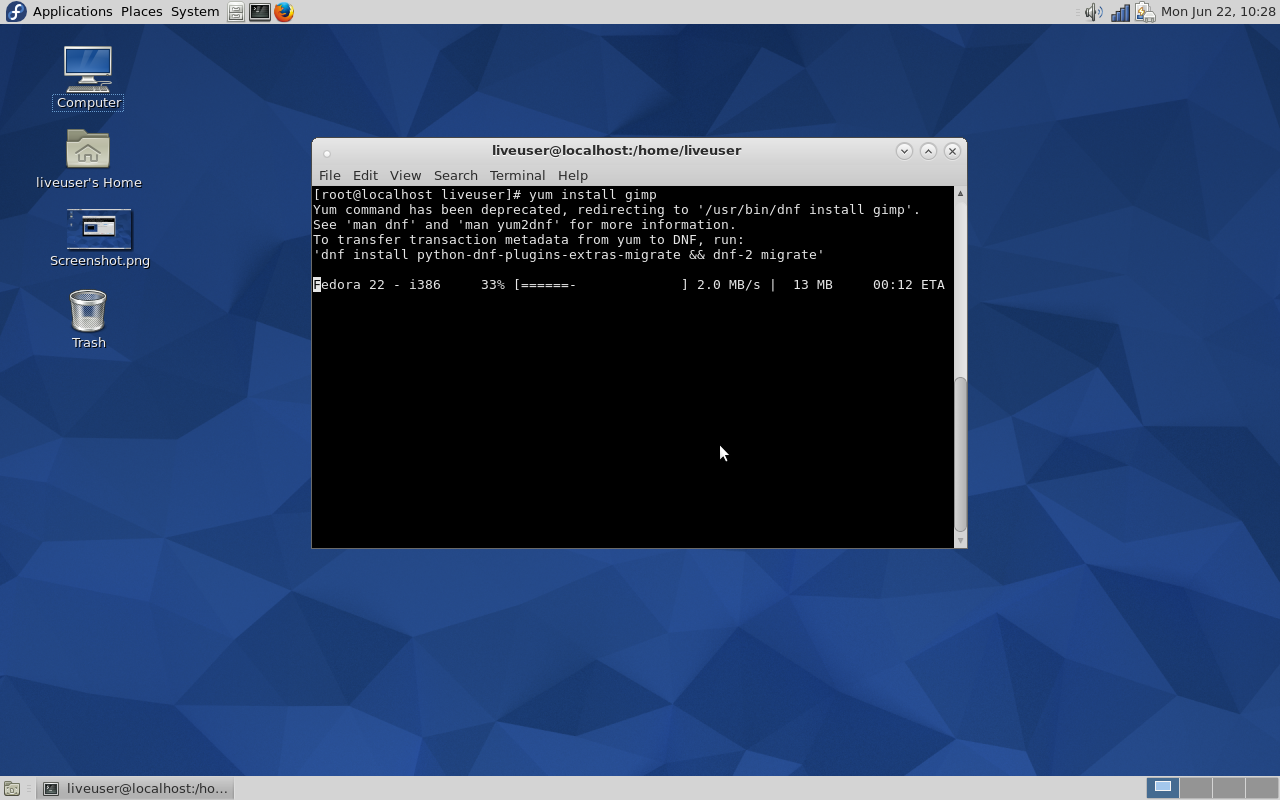

Instead of seeing a list of dependencies, you’ll first get a friendly warning (Figure 1) and then the package will install per normal. The reason the process will continue on is that the developers have created a wrapper that links yum to dnf—this was done to make the transition easier for end-users—so issuing the yum command will still work (at least during the transition).
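To make the idea of a compatibility wrapper concrete, here is a minimal sketch of how one could be written as a shell function. This is not Fedora’s actual wrapper: the function name `yum_compat` and the wording of the notice are assumptions made for illustration.

```shell
# Illustrative only: NOT Fedora's real yum-to-dnf wrapper.
# yum_compat is a hypothetical name chosen to avoid shadowing a real command.
yum_compat() {
    # Send the notice to stderr so anything parsing stdout is unaffected.
    echo "Notice: yum is deprecated; redirecting to dnf $*" >&2
    # Pass every argument through to dnf unchanged.
    dnf "$@"
}
```

Calling `yum_compat install PACKAGENAME` would print the notice and then run `dnf install PACKAGENAME`, which mirrors the warn-then-delegate behavior described above.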

But not every feature of Yum has already made the transition. Although it is the goal of the creators of DNF to get each and every Yum plugin in working order, some are not yet ready for prime time. For example, Tim Lauridsen is hard at work bringing the Yum Extender to DNF. He currently has a pre-release ready to test.

But the big question (for those that have yet to dive into DNF) is why did this happen?

Why?

There were ultimately three major reasons why Yum was forked into DNF. These reasons were long-standing and serious enough that Yum simply had to go. The reasons were:

- An undocumented API: this meant more work for developers. In order for developers to do what they needed, it was often necessary to browse through the Yum code base just to be able to write a call. This meant development was very slow.

- Python 3: Fedora was about to make the shift to Python 3 and Yum wouldn’t survive this change, whereas DNF can run using either Python 2 or 3.

- Broken dependency solving algorithm: this has been an Achilles heel of the Fedora package manager for a long time. DNF uses a state-of-the-art satisfiability (SAT)-based dependency solver. This is the same type of dependency solver used in SUSE’s and openSUSE’s Zypper.

To put it simply, Yum was outdated and couldn’t stand up to the rigors of the modern Fedora distribution.

Why is this a good thing?

You have to look at this from two different perspectives: the end-user and the developer. If you’re an end-user, the switch from Yum to DNF means one very simple thing: a more reliable experience. This reliability comes from DNF’s superior dependency solving. It will now be a very rare occasion that you go to install a package and the system cannot resolve a dependency. The system is simply smarter. Yum’s dependency algorithm was, for all intents and purposes, broken. DNF’s SAT-based dependency solver fixes that issue.

End-users will also see much less memory usage during package installation. Installations and upgrades will also go much faster. That last bit should be of special importance. Running upgrades with the Yum tool was starting to grow unacceptably slow (especially when compared to the likes of apt-get and zypper).

If you’re a developer, the shift to DNF means you’ll be able to work much more efficiently and reliably. All exposed APIs are documented. Another plus for developers is the use of C. The developers have created hawkey (package querying and dependency solving, built on libsolv) and librepo (downloading packages and metadata from repositories), both C libraries with Python bindings. They will also be releasing even more C-based APIs in the future. Considering C is still such a widely-used language (it currently sits at No. 2 on the TIOBE index), this should be a welcome change to developers.

How DNF is used

This is where the good news for end-users falls into place. Migrating from Yum to DNF will only challenge your memory and muscle memory. When you open up a terminal window to run a command-line installation, the inclination will be to issue the command yum install PACKAGENAME. That is no longer the command to use. The dandified equivalent is dnf install PACKAGENAME.

See how simple?

There will be hiccups along the way. Yes, DNF is a drop-in replacement for Yum; and if you weren’t a Yum power user, chances are you won’t experience a single issue in the migration. However, there are issues to be found. Let’s examine the old yum update --skip-broken command. When used, this command would run the update but skip all packages with broken dependencies. DNF, on the other hand, skips these broken packages, silently, by default. To that end, there is no need to include the --skip-broken flag. If you need to have DNF report broken packages, you have to run dnf update and then run dnf check-update. Some users aren’t happy with this behavior, as it adds extra steps to a once simple process. To power users, DNF could wind up requiring more work than Yum. For standard users, on the other hand, DNF will be little more than exchanging the letters yum for dnf.
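For scripts that still contain Yum invocations, the behavior described above suggests a simple mechanical translation. The sketch below is a hypothetical helper (`to_dnf` is not part of DNF or Fedora) that prints the DNF form of a Yum command line and drops `--skip-broken`, on the premise stated above that DNF skips broken packages by default. It handles nothing beyond that one flag and does not run any package command.

```shell
# Hypothetical helper: print the dnf form of a yum command line.
# It only prints the command; it does not run anything.
to_dnf() (
    # Drop the leading "yum" if present.
    if [ "${1:-}" = "yum" ]; then shift; fi
    cmd="dnf"
    for arg in "$@"; do
        case "$arg" in
            # Per the text above, DNF skips broken packages by default,
            # so the flag is simply omitted.
            --skip-broken) ;;
            *) cmd="$cmd $arg" ;;
        esac
    done
    printf '%s\n' "$cmd"
)

to_dnf yum install PACKAGENAME
to_dnf yum update --skip-broken
```

The two calls at the end print `dnf install PACKAGENAME` and `dnf update`. The function body runs in a subshell, so its variables do not leak into the calling script.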

The migration from Yum to DNF might be a bit awkward for a while—especially when unwitting users open up a command line and attempt to use Yum. But even then, you are issued a warning:

Yum command has been deprecated, use dnf instead.

See 'man dnf' and 'man yum2dnf' for more information.

To transfer transaction metadata from yum to DNF, run 'dnf migrate'

It becomes quite clear that something has changed. I do think, however, the developers could have been much clearer in their warning than to just go old school and say see ‘man dnf’. Something more along these lines would have served better:

The Yum command is no longer in use. Please replace ‘yum’ with ‘dnf’ in your command to make use of the newer system.

Yeah, it’s too simplistic for real-world use, but you get the idea. And, in the end, that’s all end users need to know—replace the word ‘yum’ with ‘dnf’ and you’re good to go.

Has the Fedora team gone in the right direction with the migration from Yum to DNF? If not, how should they have approached this transition?

Q&A: Zipcar Founder Robin Chase on Open Source and the Collaboration Economy

Robin Chase is a transportation entrepreneur known for founding transportation-related companies such as Zipcar, Buzzcar and Veniam. She wears many hats and is an inspiration to women all around the globe. She is also a strong supporter of Open Source and Open Collaborative technologies. She recently authored a book called Peers Inc: How People and Platforms Are Inventing the Collaborative Economy and Reinventing Capitalism. Chase will be delivering a keynote at the upcoming LinuxCon event.

In this email interview with ITworld’s Swapnil Bhartiya, Chase talks about her new book, her LinuxCon talk, and the economy-shifting movement brought on by the power of collaboration.

Read more at IT World.

Interview with Linus Torvalds on Slashdot

Last Thursday you had a chance to ask Linus Torvalds about programming, hardware, and all things Linux. You can read his answers to those questions below. If you’d like to see what he had to say the last time we sat down with him, you can do so here.

Productivity

by DoofusOfDeath

You’ve somehow managed to originate two insanely useful pieces of software: Linux, and Git. Do you think there’s anything in your work habits, your approach to choosing projects, etc., that have helped you achieve that level of productivity? Or is it just the traditional combination of talent, effort, and luck?

Linus: I’m sure it’s pretty much always that “talent, effort and luck”. I’ll leave it to others to debate how much of each…

I’d love to point out some magical work habit that makes it all happen, but I doubt there really is any. Especially as the work habits I had wrt the kernel and Git have been so different.

With Git, I think it was a lot about coming at a problem with fresh eyes (not having ever really bought into the traditional SCM mindset), and really trying to think about the issues, and spending a fair amount of time thinking about what the real problems were and what I wanted the design to be. And then the initial self-hosting code took about a day to write (ok, that was “self-hosting” in only the weakest sense, but still).

And with Linux, obviously, things were very different – the big designs came from the outside, and it took half a year to host itself, and it hadn’t even started out as a kernel to begin with. Clearly not a lot of thinking ahead and planning involved ;). So very different circumstances indeed.

Read more at Slashdot.