I usually don’t dig into new distros unless they have something new to offer. So many distros are released every day that tracking them all is challenging and, to some extent, pointless.

I was not very excited when I decided to download Deepin as I assumed it to be yet another distro. I was wrong. It turned out to be an extremely polished, robust and easy-to-use distribution targeted at traditional Windows or Mac users. So what makes this OS so special? Almost everything.

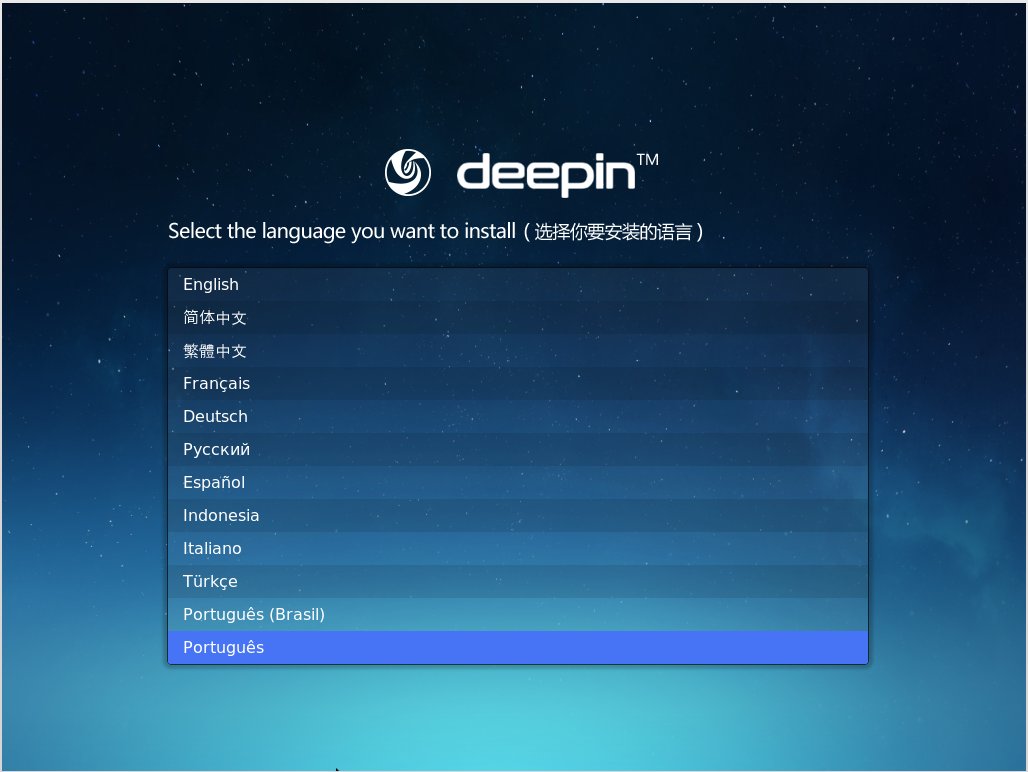

One of the most pleasant installers

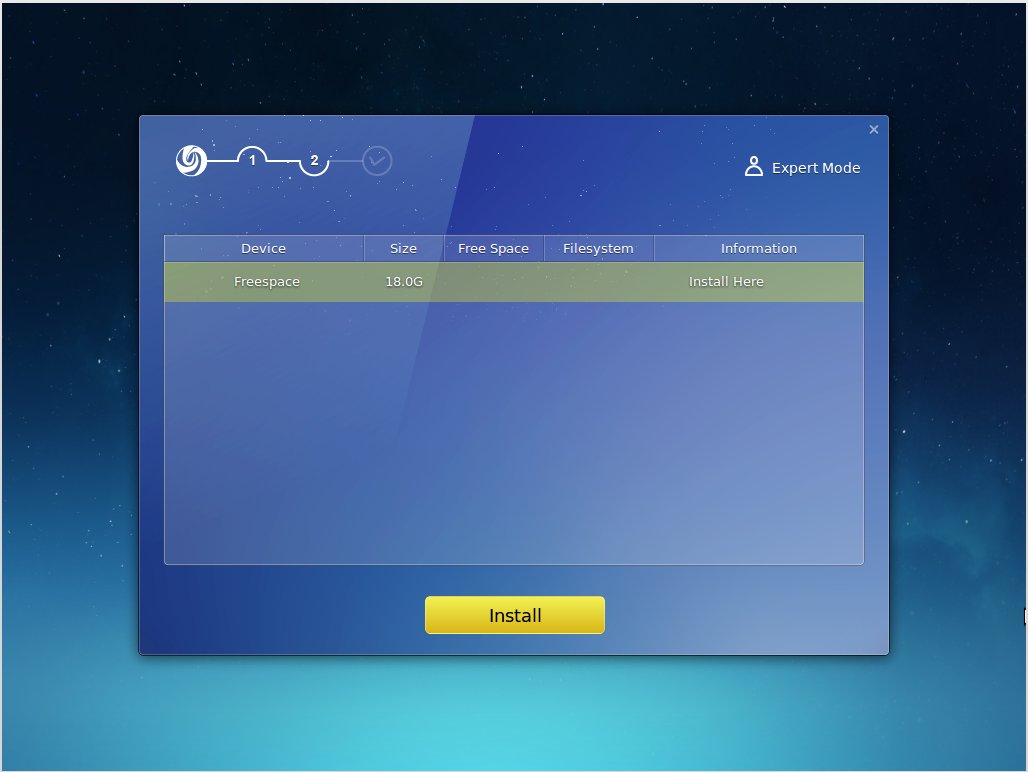

I have used almost all major GNU/Linux based distributions and Arch Linux is my default OS. That also means I have been through the installation of all these distributions. Based on that experience I can say that Deepin seems to have the simplest installation procedure. Not only is it simpler for a new user, it’s also quite pleasant.

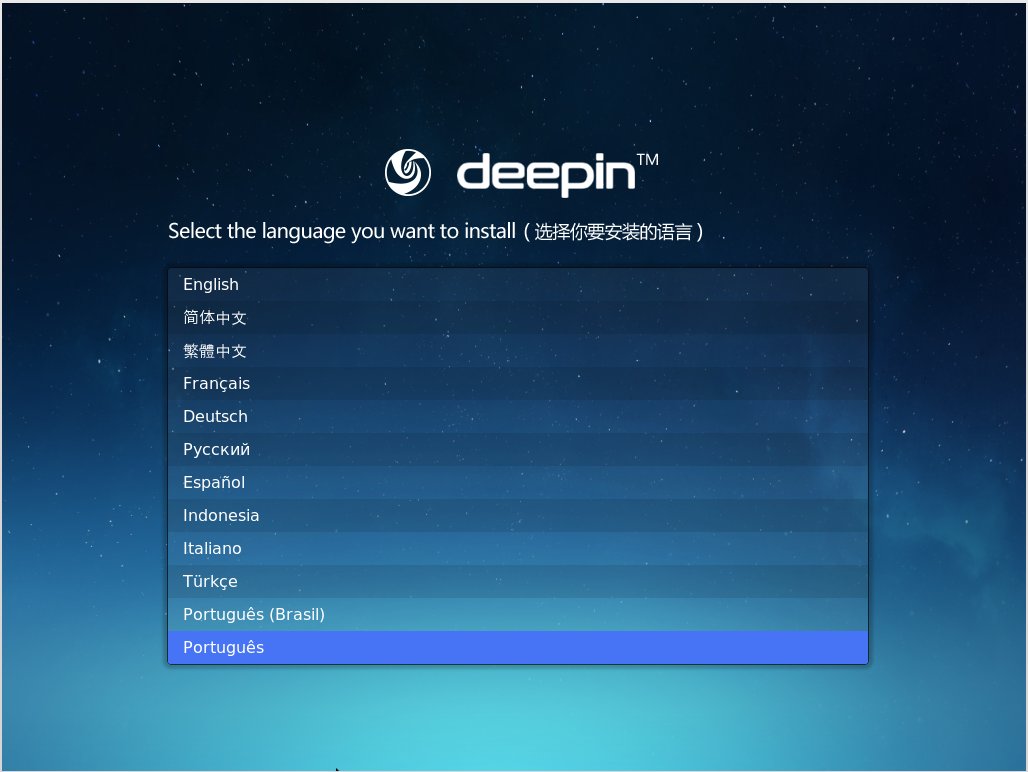

You need to download the multi-language ISO image of Deepin; otherwise you may be stuck with Chinese. Inside the installer, the first screen shows language options, and since it’s a Chinese distribution, don’t forget to choose English.

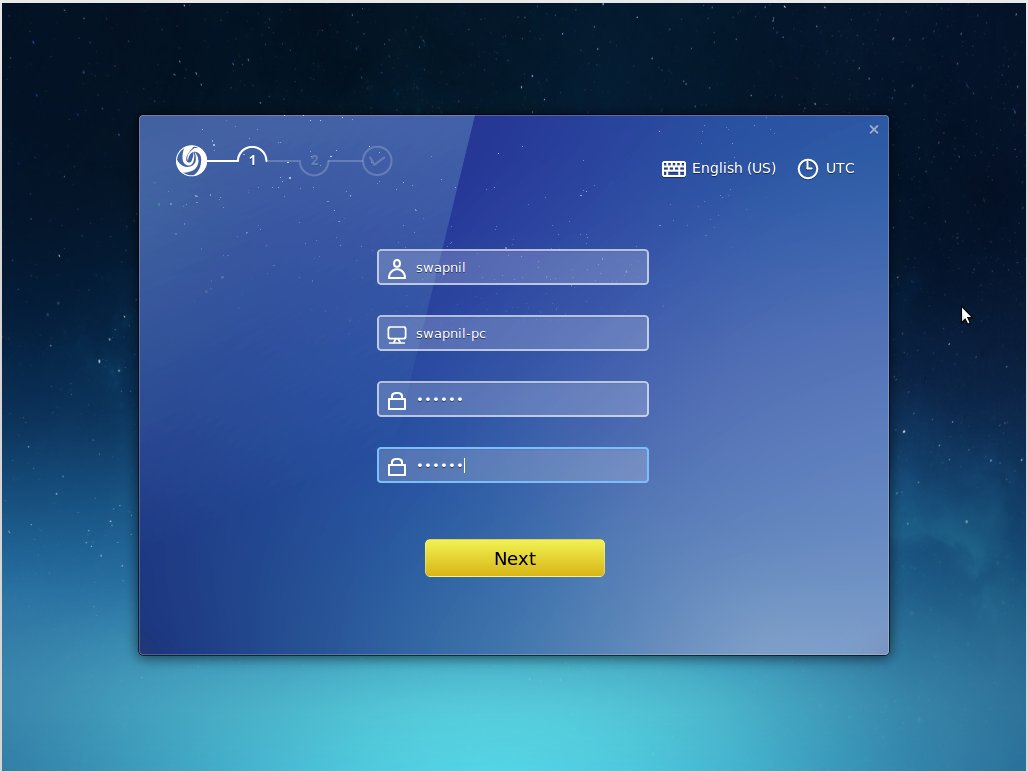

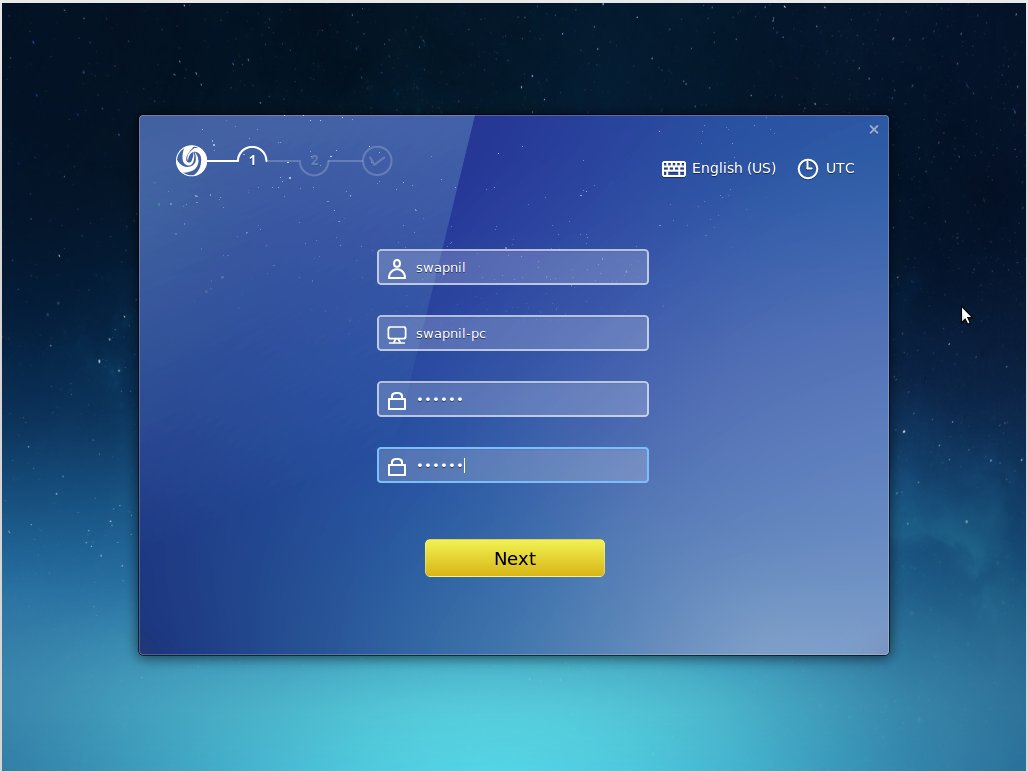

The next window asks you to create a username and password for the system user.

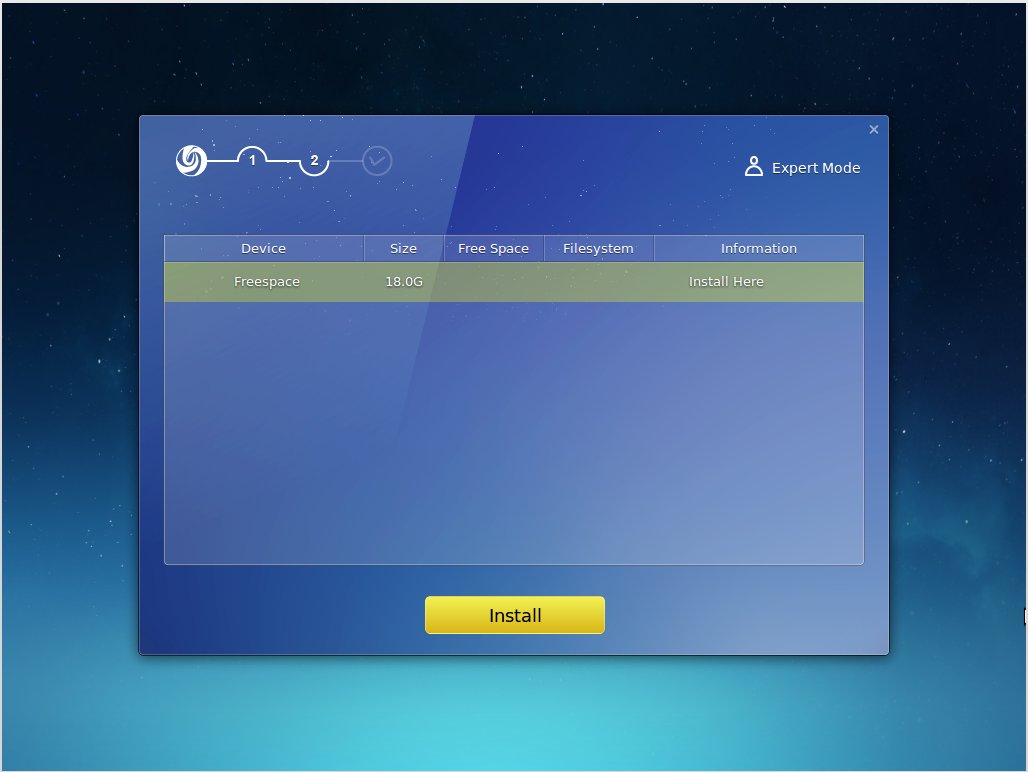

The third screen lets you partition the drive, where you can create root and swap (if needed) partitions. I don’t bother with a separate “home” partition anymore and just give more space to the root partition. You should mount your other partitions or hard drives during installation itself to avoid the frustration of creating fstab entries for them afterwards.
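For readers who skip that step, this is roughly what a hand-written entry in /etc/fstab looks like. It is only a sketch: the UUID, the mount point /mnt/data and the ext4 filesystem type are placeholders, not values from this installation. Running `blkid` as root prints the real UUID of each partition.

```
# <device>                                  <mount point>  <type>  <options>  <dump>  <pass>
# Placeholder values; replace the UUID, mount point and type with your own.
UUID=01234567-89ab-cdef-0123-456789abcdef   /mnt/data      ext4    defaults   0       2
```

The last two fields control backups with dump and the order of filesystem checks at boot; 0 and 2 are the usual choices for a non-root data partition.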

Once you click “install”, Deepin gives you a demo of the system while it copies files to your drive and configures the OS. Once the installation is finished, unlike Fedora, it tells you to reboot your system so you can start using your brand new Deepin desktop. I have not come across a simpler installer on Linux for quite some time; it was refreshing. I think Deepin has done an incredible job of making installation as easy as it is on Mac OS X or Windows.

Welcome to the demo shop

Once you reboot into your new system, Deepin gives you an animated demo of the system’s features and options. This is similar to what you see when you first boot a new Android device and Google walks you through the system. That’s really neat because Deepin’s UI (user interface) is different from traditional desktops and a user does need a bit of help. Once again Deepin has done an incredible job of helping out new users.

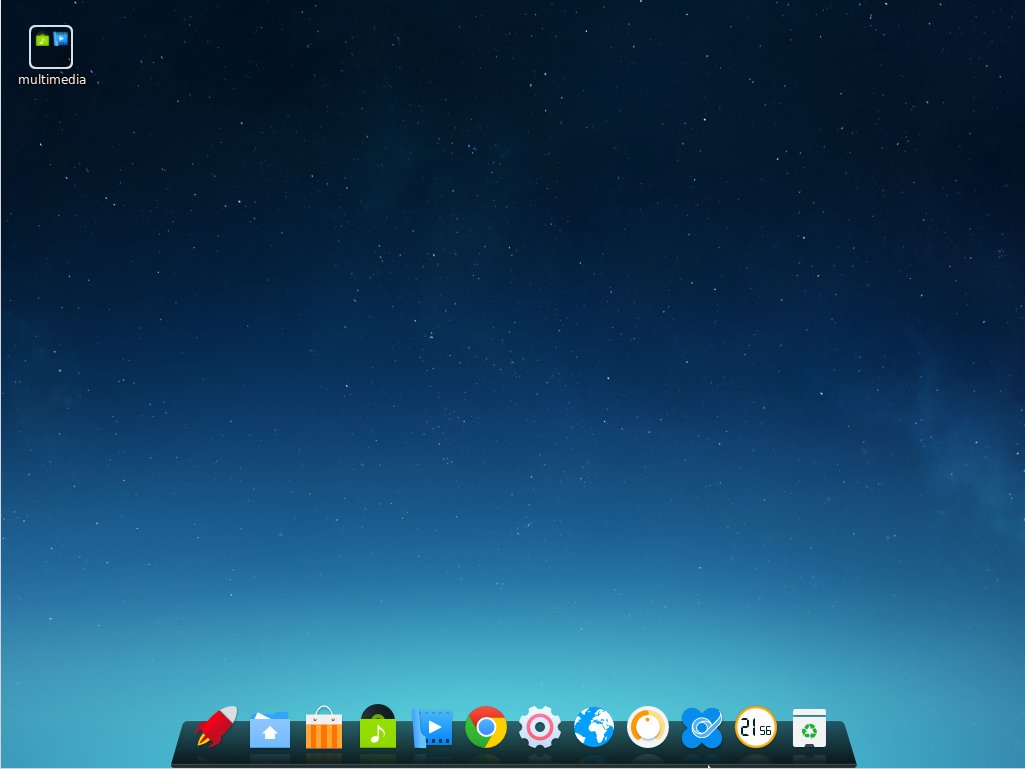

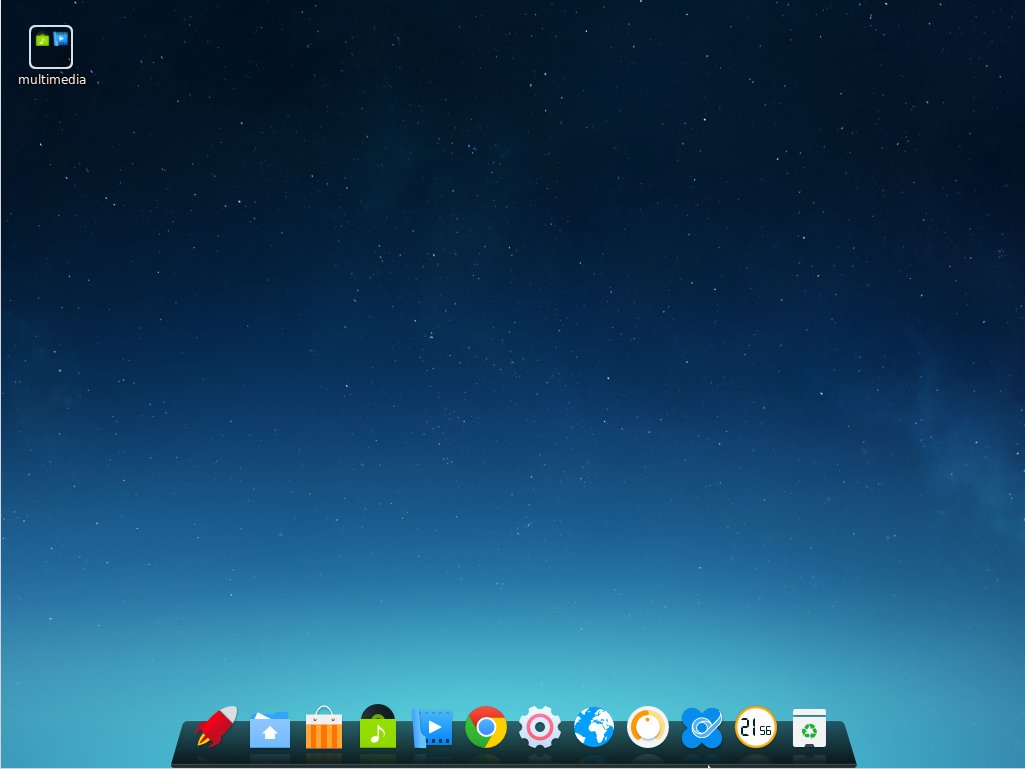

Skin deep beauty

Deepin has a very clean and clutter-free interface. All you see is a clean desktop, a folder on the top left and a launcher at the bottom. The choice of icons is also refreshing and modern compared to the ancient-looking default icons of Ubuntu and Gnome.

The desktop has four hot corners, which means if you take your mouse to these corners you will be able to access certain features. The top left corner opens the applications launcher, the bottom left brings you back to the desktop and the bottom right opens the control center.

You can change/configure each corner. Just right-click on the desktop and choose ‘corner navigation’ from the context menu. This will show each active corner with a gear and you can choose the desired action for that corner.

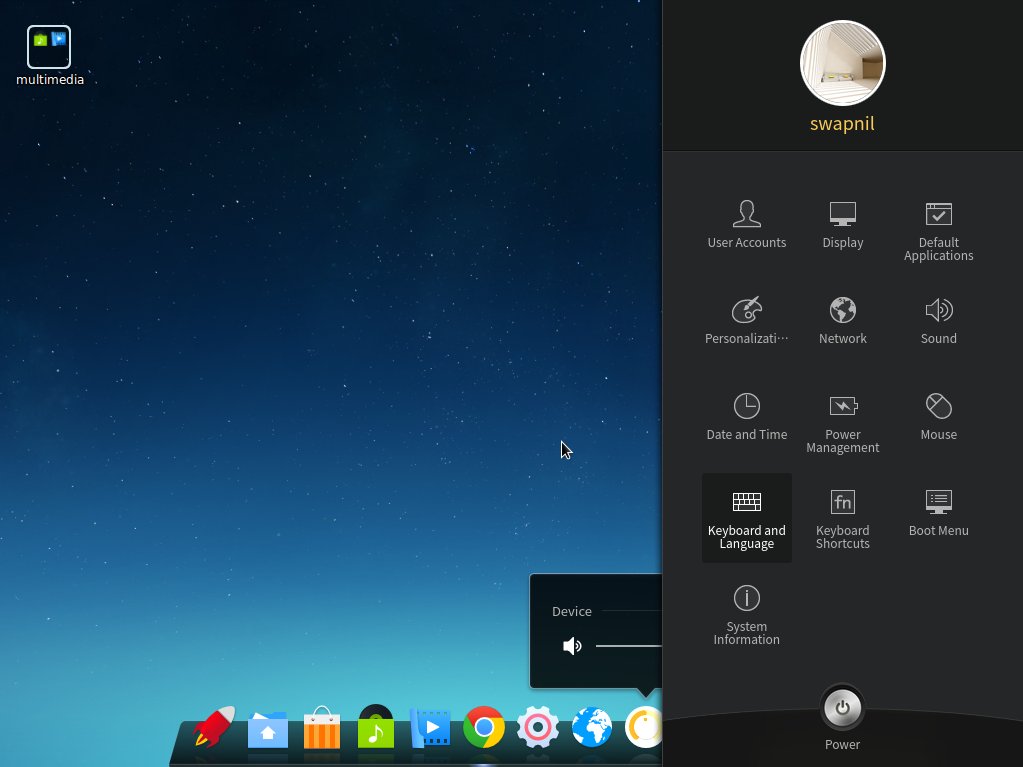

Houston, we are in control

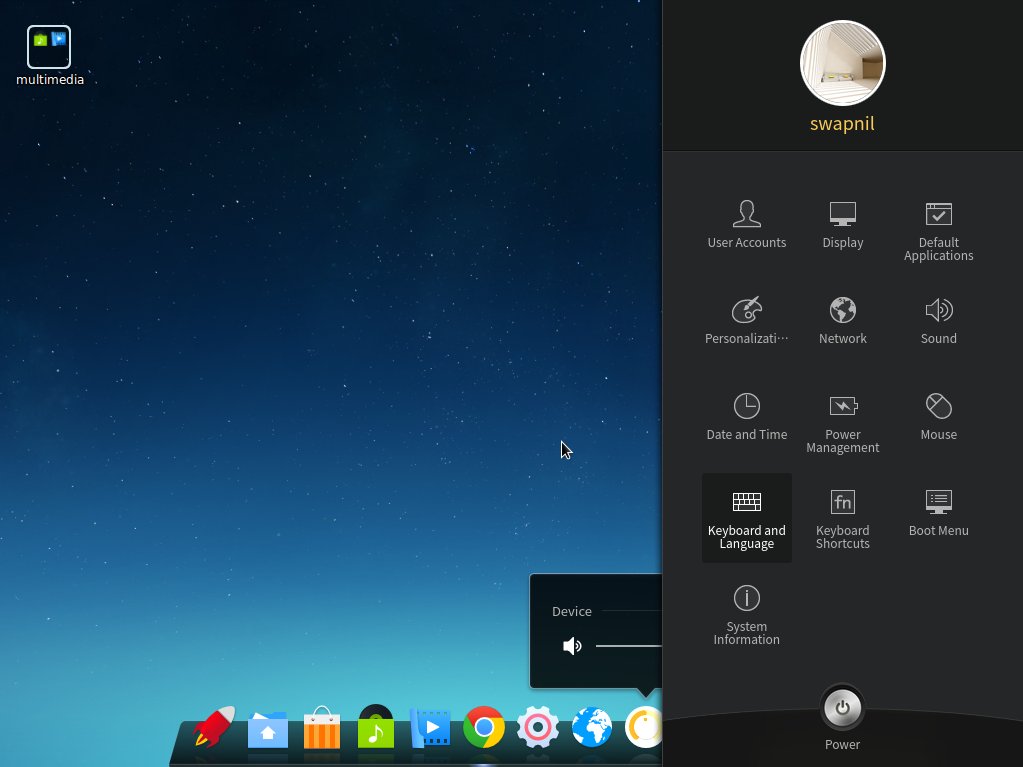

Deepin has adopted a different approach to the desktop. All system settings appear in a panel on the right, instead of in a window as in other OSes. The Control Center lets you manage users, monitors and default applications, among many other things. The personalization option lets you change themes, windows, icons, cursors, wallpapers, fonts, etc.

There is also a clever option to manage the boot menu. You can easily customize the background image for the boot menu. Choosing the default OS, if you have more than one installed, is also extremely easy from the Control Center. It’s reminiscent of the good old Linux days, of the 3D cube, where you could change almost every aspect of your computer, contrary to the dumbed down approach adopted by many contemporary distributions.

Everything that glitters is not Gnome

Even if it looks like Gnome Shell, Deepin is not using the shell. They started off with the Shell, but encountered many problems as they tried to customize it to their needs (that could be one of the many reasons there are so many forks or alternatives to Gnome Shell: Cinnamon, Mate, Elementary OS’ Pantheon, and Unity, among many others).

Like other projects, Deepin went ahead and developed its own shell, which is simply called Deepin Desktop Environment. DDE is based on HTML5 and WebKit and uses a mix of QML and Go for different components. Core components of DDE include the desktop itself, the brand new launcher, the bottom Dock, and the Control Center.

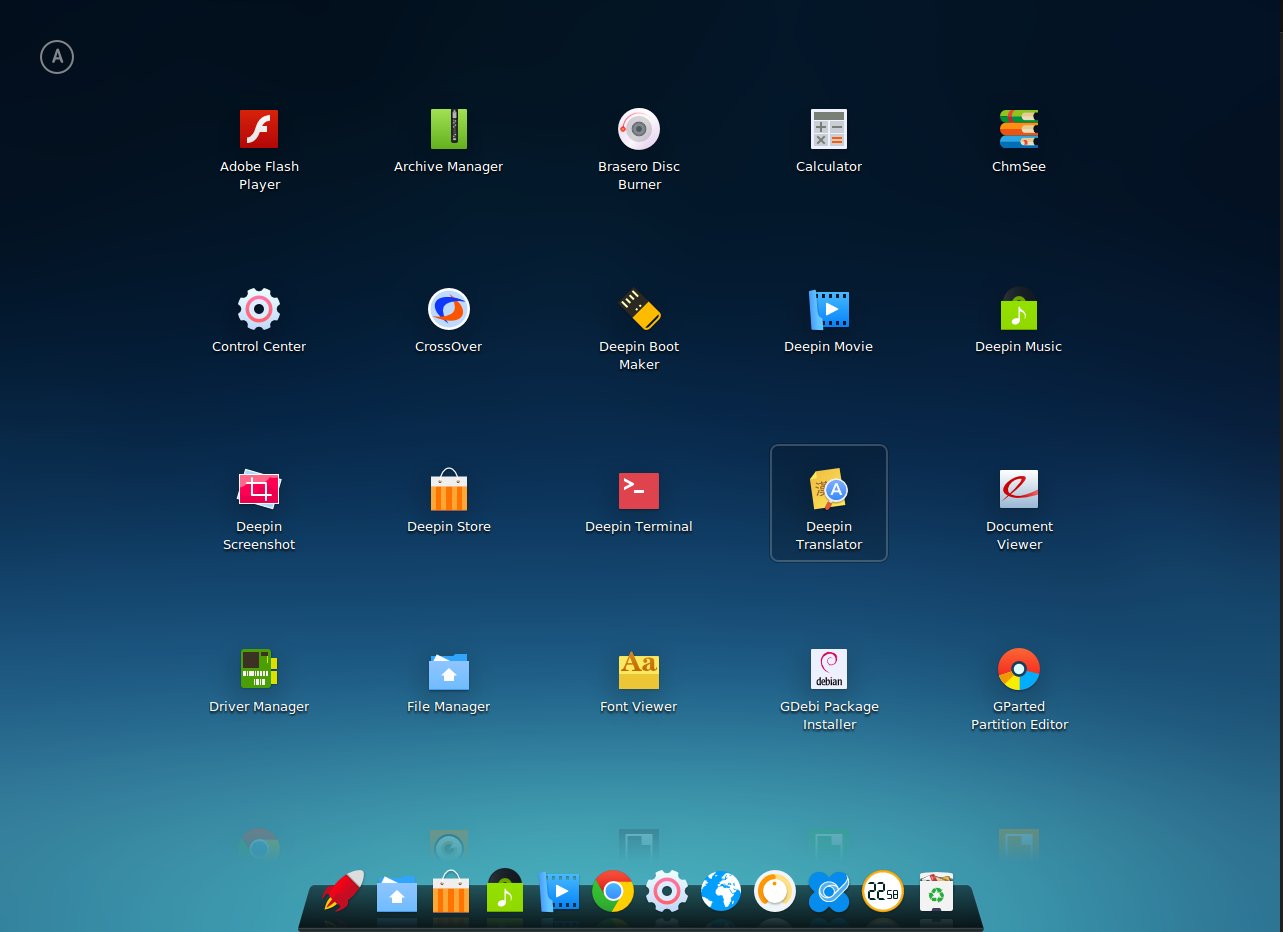

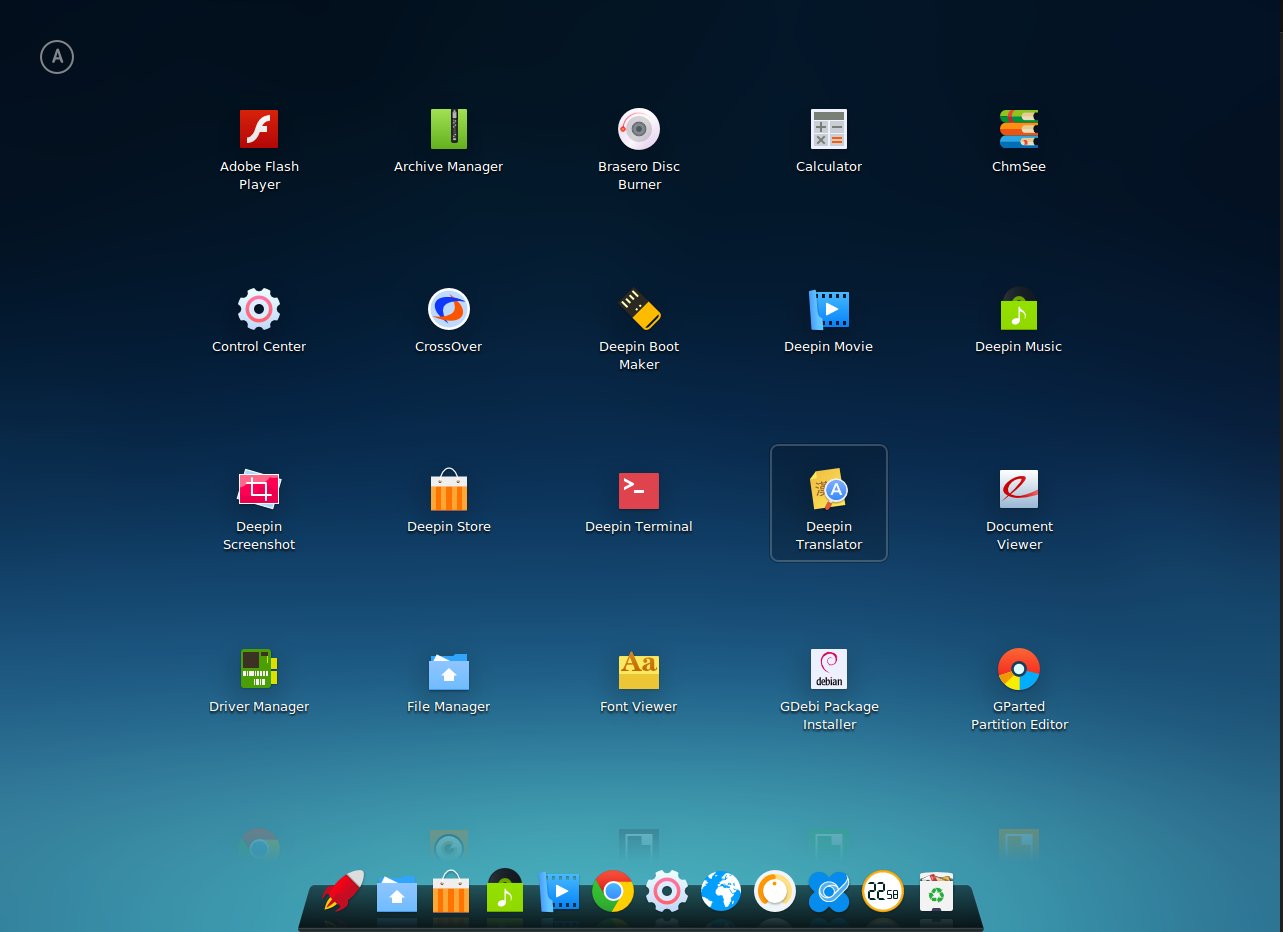

What’s in the box

Deepin comes with a decent set of applications pre-installed so you can get to work immediately. It comes with Chrome, Files (Nautilus), the LibreOffice suite, Deepin Music and Movie players, Deepin USB Creator, a PDF viewer, and GParted, among many others.

It also comes pre-installed with Adobe Flash Player, which you may not need if you are using Chrome, as Google has implemented the Pepper Plugin API for Flash support in Chrome. Most of the default apps are developed by the team and branded ‘Deepin’.

Since it’s based on Ubuntu, you can install every single app that’s available for Ubuntu using apt-get, Launchpad, or by simply downloading the .deb binaries. Deepin comes with an App Store similar to the Ubuntu Software Center, which makes it easier to install and remove applications for those who don’t want to deal with the terminal.

Need to use that legacy Windows app? No worries, it comes with CrossOver which enables installation of supported Windows programs. That alone makes life much easier for a Windows user planning to migrate to Linux.

Setting up printers and non-free drivers

Deepin comes with Jockey, which helps detect proprietary hardware, such as Nvidia GPUs, and then offers both non-free and free drivers that the user can install easily. Installing and configuring a printer or scanner is also extremely easy, as it is on any other Ubuntu-based distribution. Just open “printers” from the Launcher and follow the instructions.

Conclusion

I am a loyal Arch Linux/openSUSE user; however, I am also a distro hopper (even though I have been using Linux for over a decade now). I keep hunting for new distros just for the sake of freshness and often install them on my second machine after playing with them in VirtualBox. I must admit that Deepin is really getting me excited, and if I have to migrate any user from Windows or Mac to Linux I will certainly give Deepin a try. The reasons are simple: it’s easy to install and use, and it looks modern and very well polished.

One criticism is that there are some inconsistencies between apps. While Deepin’s own apps don’t have a menu bar, Gnome apps such as Evince do, which makes the desktop look like patchwork. I assume future updates might blur these differences so that all apps, at least the pre-installed ones, look consistent across the OS.

Other than that, I am also not certain about the upgrade path, as I have not tried upgrading yet. Since Deepin, just like Linux Mint, has diverged so far from Ubuntu, I can’t really comment on how smooth the upgrade will be. There is no one-click or one-command path. Deepin provides a package to help users upgrade to the new release, and it does take some extra work. So yes, it’s not as easy as running a single dist-upgrade command.

That said, Deepin has all the bells and whistles on top of its simplicity and ease of use. If you have not tried Deepin yet, you certainly should. And if you have tried Deepin, let us know what you think about it in the comments below.
