The company wrote in a blog post that while 128-bit architecture might have a place in the mobile space in years to come, for now, 64-bit is all that’s needed.

ARM: Who Needs 128-bit Processors When We Already Have 64-bit?

8 Habits of High-Performing IT Teams

Accenture says higher-performing IT teams are more open to new projects, cloud and virtualization.

Red Hat Enterprise Linux 6.5 arrives

Red Hat’s newest update for its flagship enterprise Linux, Red Hat Enterprise Linux 6.5, is ready to go.

NVIDIA GeForce GTX 780 Ti Steams Ahead On Linux

As some good news for the Linux graphics community after discovering the AMD Radeon R9 290 is currently a big disappointment on Linux (likely due to the Linux Catalyst driver not being kept up as well as the Windows Catalyst version), I was testing the GeForce GTX 780 Ti along with some other new NVIDIA GPUs and it’s been a breeze. The GeForce GTX 780 Ti in particular has been a beauty on Linux and is the focus of today’s Linux hardware review.

DRM Gets More Linux 3.13 Updates; VMware DRI3

For those keeping track of Linux 3.13 kernel activity, another DRM subsystem pull update was submitted during this merge window…

Heads up Apple, Here Comes 64-Bit Android on Intel

Chip giant demonstrates a 64-bit Android platform running on its latest Atom processors at an investor conference.

Phoronix Test Suite 4.8.5 Pushes Linux Benchmarking

The latest Phoronix Test Suite 4.8 “Sokndal” point release is now available with the latest fixes and minor improvements to the cross-platform open-source benchmarking software…

Setting Up a Multi-Node Hadoop Cluster with Beagle Bone Black

Learning map/reduce frameworks like Hadoop is a useful skill to have, but not everyone has the resources to implement and test a full system. Thanks to cheap ARM-based boards, it is now more feasible for developers to set up a full Hadoop cluster. I coupled my existing knowledge of setting up and running single-node Hadoop installs with my BeagleBone cluster from my previous post to create my second project. This tutorial goes through the steps I took to set up and run Hadoop on my Ubuntu cluster. It may not be a practical application for everyone, but distributed map/reduce experience is currently a good skill to have. All the machines in my cluster are already set up with Java and SSH from my first project, so you may need to install them if you don’t have them.

Set Up

The first step naturally is to download Hadoop from Apache’s site on each machine in the cluster and untar it. I used version 1.2.1, but versions 2.0 and above are now available. I placed the resulting files in /usr/local and named the directory hadoop. With the files on the system, we can create a new user called hduser to actually run our jobs and a group for it called hadoop:

sudo addgroup hadoop
sudo adduser --ingroup hadoop hduser

With the user created, we will make hduser the owner of the directory containing hadoop:

sudo chown -R hduser:hadoop /usr/local/hadoop

Then we create a temp directory to hold files and make the hduser the owner of it as well:

sudo mkdir -p /hadooptemp
sudo chown hduser:hadoop /hadooptemp

With the directories set up, log in as hduser. We will start by updating the .bashrc file in the home directory. We need to add two export lines at the top to point to our hadoop and java locations. My java installation was openjdk7 but yours may be different:

# Set Hadoop-related environment variables
export HADOOP_HOME=/usr/local/hadoop
# Set JAVA_HOME (we will also configure JAVA_HOME directly for Hadoop later on)
export JAVA_HOME=/usr/lib/jvm/java-7-openjdk-armhf
# Add Hadoop bin/ directory to PATH
export PATH=$PATH:$HADOOP_HOME/bin
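Before moving on, it can be worth confirming that both locations really exist, since a wrong JAVA_HOME tends to show up only later as a confusing startup failure. The following is a minimal sketch; check_hadoop_env is a hypothetical helper of my own, not something shipped with Hadoop, and it only checks that the two launcher binaries are present and executable.

```shell
# check_hadoop_env is a hypothetical helper, not part of Hadoop.
# $1 = Hadoop install directory, $2 = Java install directory.
check_hadoop_env() {
    hadoop_home=$1
    java_home=$2
    status=0
    if [ ! -x "$hadoop_home/bin/hadoop" ]; then
        echo "missing: $hadoop_home/bin/hadoop"
        status=1
    fi
    if [ ! -x "$java_home/bin/java" ]; then
        echo "missing: $java_home/bin/java"
        status=1
    fi
    if [ "$status" -eq 0 ]; then
        echo "environment looks OK"
    fi
    return "$status"
}

# Typical use after logging in again so that .bashrc has been read:
#   check_hadoop_env "$HADOOP_HOME" "$JAVA_HOME"
```

If the Java path is reported missing, listing /usr/lib/jvm should show what the directory is actually called on your system.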

Next we can navigate to our hadoop installation directory and locate the conf directory. Once there we need to edit the hadoop-env.sh file and uncomment the java line to point to the location of the java installation again:

export JAVA_HOME=/usr/lib/jvm/java-7-openjdk-armhf

Next we can update core-site.xml to point to the temp location we created above and specify the root of the file system. Note that the name in the default url is the name of the master node:

<configuration>

<property>

<name>hadoop.tmp.dir</name>

<value>/hadooptemp</value>

<description>Root temporary directory.</description>

</property>

<property>

<name>fs.default.name</name>

<value>hdfs://beaglebone1:54310</value>

<description>URI pointing to the default file system.</description>

</property>

</configuration>

Next we can edit mapred-site.xml:

<configuration>

<property>

<name>mapred.job.tracker</name>

<value>beaglebone1:54311</value>

<description>The host and port that the MapReduce job tracker runs at.</description>

</property>

</configuration>

Finally we can edit hdfs-site.xml to set how many copies of each block HDFS should keep. In this case I chose 3, one for each node:

<configuration>

<property>

<name>dfs.replication</name>

<value>3</value>

<description>Default block replication.</description>

</property>

</configuration>
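Because the same three files have to be edited on every machine, one option is to generate them from a small script instead of typing them by hand. This is only a sketch under a few assumptions: hadoop_property and write_site_files are hypothetical helpers of my own, the ports and the /hadooptemp path are the ones used above, and the values are written as-is without XML escaping.

```shell
# Print one <property> element. hadoop_property is a hypothetical helper
# written for this example; it does not escape &, < or > in its arguments.
hadoop_property() {
    printf '  <property>\n'
    printf '    <name>%s</name>\n' "$1"
    printf '    <value>%s</value>\n' "$2"
    printf '    <description>%s</description>\n' "$3"
    printf '  </property>\n'
}

# Write core-site.xml, mapred-site.xml and hdfs-site.xml into a directory.
#   $1 = target conf directory, $2 = master host name, $3 = replication (default 3)
write_site_files() {
    conf_dir=$1
    master=$2
    replication=${3:-3}

    {
        echo '<?xml version="1.0"?>'
        echo '<configuration>'
        hadoop_property hadoop.tmp.dir /hadooptemp 'Root temporary directory.'
        hadoop_property fs.default.name "hdfs://$master:54310" \
            'URI pointing to the default file system.'
        echo '</configuration>'
    } > "$conf_dir/core-site.xml"

    {
        echo '<?xml version="1.0"?>'
        echo '<configuration>'
        hadoop_property mapred.job.tracker "$master:54311" \
            'The host and port that the MapReduce job tracker runs at.'
        echo '</configuration>'
    } > "$conf_dir/mapred-site.xml"

    {
        echo '<?xml version="1.0"?>'
        echo '<configuration>'
        hadoop_property dfs.replication "$replication" 'Default block replication.'
        echo '</configuration>'
    } > "$conf_dir/hdfs-site.xml"
}

# Example, run as hduser on each node:
#   write_site_files /usr/local/hadoop/conf beaglebone1
```

Note that this overwrites any existing site files in the target directory, so keep a copy if you have made other changes to them.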

Once this has been done on all machines we can edit some configuration files on the master to tell it which nodes are slaves. Start by editing the masters file to make sure it just contains our host name:

beaglebone1

Now edit the slaves file to list all the nodes in our cluster:

beaglebone1
beaglebone2
beaglebone3

Next we need to make sure that our master can communicate with its slaves. Make sure the hosts file on each of your nodes contains the names of all the nodes in your cluster. Now on the master, create a key and append it to authorized_keys:

ssh-keygen -t rsa -P ""
cat $HOME/.ssh/id_rsa.pub >> $HOME/.ssh/authorized_keys

Then copy the key to other nodes:

ssh-copy-id -i $HOME/.ssh/id_rsa.pub hduser@beaglebone2
ssh-copy-id -i $HOME/.ssh/id_rsa.pub hduser@beaglebone3

Finally we can test the connections from the master to itself and others using ssh.
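The two checks from this section (every node name appears in the hosts file, and the master can log in everywhere without a password) can be scripted roughly as follows. This is a sketch, not part of Hadoop: missing_hosts and check_ssh are hypothetical helper names, and the file paths are passed in as arguments so nothing is assumed about where your files live.

```shell
# Print each node named in a slaves-style file that is absent from a hosts file.
# missing_hosts is a hypothetical helper; $1 = slaves file, $2 = hosts file.
missing_hosts() {
    slaves_file=$1
    hosts_file=$2
    while read -r node; do
        [ -z "$node" ] && continue
        # -F treats the name literally and -w matches it as a whole word
        grep -qwF -- "$node" "$hosts_file" || echo "$node"
    done < "$slaves_file"
}

# Try a non-interactive login to every node listed in the slaves file.
# check_ssh is a hypothetical helper; $1 = slaves file.
check_ssh() {
    slaves_file=$1
    while read -r node; do
        [ -z "$node" ] && continue
        # BatchMode makes ssh fail instead of prompting for a password;
        # -n stops ssh from reading the rest of the node list from stdin.
        if ssh -n -o BatchMode=yes -o ConnectTimeout=5 "hduser@$node" true; then
            echo "$node: ok"
        else
            echo "$node: FAILED"
        fi
    done < "$slaves_file"
}

# Example, run as hduser on the master:
#   missing_hosts /usr/local/hadoop/conf/slaves /etc/hosts
#   check_ssh /usr/local/hadoop/conf/slaves
```

The very first connection to a node normally asks you to accept its host key, so BatchMode may report a failure until you have logged in to each node once by hand.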

Starting Hadoop

The first step is to format HDFS on the master. From the bin directory in hadoop run the following:

hadoop namenode -format

Once that is finished, we can start the NameNode and JobTracker daemons on the master. We simply need to execute these two commands from the bin directory:

start-dfs.sh
start-mapred.sh
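To confirm that the daemons came up, the JDK's jps tool lists the running Java processes by class name. The sketch below filters that listing for the daemons I would expect from a Hadoop 1.x setup like this one; missing_daemons is a hypothetical helper, and the exact set of daemons on each node is an assumption based on the masters and slaves files above.

```shell
# Read `jps` output on stdin and print every expected daemon that is not listed.
# missing_daemons is a hypothetical helper; daemon names are passed as arguments.
missing_daemons() {
    listing=$(cat)
    for daemon in "$@"; do
        # jps prints "<pid> <class name>", so compare against the second field
        echo "$listing" |
            awk -v d="$daemon" '$2 == d { found = 1 } END { exit !found }' ||
            echo "$daemon"
    done
}

# On the master, which is also listed in the slaves file in this setup:
#   jps | missing_daemons NameNode SecondaryNameNode DataNode JobTracker TaskTracker
# On a node that is only a slave:
#   jps | missing_daemons DataNode TaskTracker
```

No output means every named daemon was found. If one is missing, its log file in the hadoop/logs directory is the first place to look.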

To stop them later we can use:

stop-mapred.sh
stop-dfs.sh

Running an Example

With Hadoop running on the master and slaves, we can test out one of the examples. First we need to create some files on our system. Create some text files in a directory of your choosing with a few words in each. We can then copy the files to HDFS. From the main hadoop directory, execute the following:

bin/hadoop dfs -mkdir /user/hduser/test
bin/hadoop dfs -copyFromLocal /tmp/*.txt /user/hduser/test

The first line will call mkdir on HDFS to create a directory /user/hduser/test. The second line will copy files I created in /tmp to the new HDFS directory. Now we can run the wordcount sample against it:

bin/hadoop jar hadoop-examples-1.2.1.jar wordcount /user/hduser/test /user/hduser/testout

The jar file name will vary based on what version of Hadoop you downloaded. Once the job is finished, it will output the results in HDFS to /user/hduser/testout. To view the resulting files we can do this:

bin/hadoop dfs -ls /user/hduser/testout

We can then use the cat command to show the contents of the output:

bin/hadoop dfs -cat /user/hduser/testout/part-r-00000

This file will show us each word found and the number of times it was found. If we want to see proof that the job ran on all nodes, we can view the logs on the slaves from the hadoop/logs directory. For example, on the beaglebone2 node I can do this:

cat hadoop-hduser-datanode-beaglebone2.log

When I examined the file, I could see messages at the end showing the jobname and data received and sent, letting me know that all was well.
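As a cross-check on the wordcount results, the same count can be computed locally with standard tools and compared against the part-r-00000 file. This is a rough sketch: local_wordcount is a hypothetical helper, and it assumes the example job splits words on whitespace only and writes one tab-separated "word count" pair per line in byte order, which matches what I saw but is worth verifying on your version.

```shell
# Count whitespace-separated words in the given files and print
# "word<TAB>count" lines sorted by word. local_wordcount is a hypothetical helper.
local_wordcount() {
    cat "$@" |
        tr -s '[:space:]' '\n' |   # one word per line
        grep -v '^$' |             # drop the empty line left by leading whitespace
        LC_ALL=C sort |            # byte-order sort
        uniq -c |
        awk '{ printf "%s\t%s\n", $2, $1 }'
}

# Example comparison, run from the main hadoop directory:
#   local_wordcount /tmp/*.txt > /tmp/local-count.tsv
#   bin/hadoop dfs -cat /user/hduser/testout/part-r-00000 > /tmp/hadoop-count.tsv
#   diff /tmp/local-count.tsv /tmp/hadoop-count.tsv && echo "counts match"
```

Write the comparison files somewhere other than the input directory if you plan to rerun the job, otherwise they will not be picked up as input only as long as they do not end in .txt.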

Conclusion

If you made it through all of this and it worked, congratulations on setting up a working cluster. Due to the slow performance of the BeagleBone’s SD card, it is not the best device for getting actual work done. However, these steps are applicable to faster ARM devices as they come along. In the meantime, the BeagleBone Black is a great platform for practice and learning how to set up distributed systems.

How to Bulletproof Linux for Mad Experimentation

Everyone knows that keeping regular backups of our data is the No. 1 best insurance against mishaps. The No. 2 best insurance is smart partitioning on your Linux PC that puts your data on a different partition from the root filesystem. Having a single separate data partition is especially useful for distro-hoppers, and for multi-booting multiple distros; all your files are in one place, and protected from mad installation frenzies. And why not distro-hop and multi-boot random distros? Unlike certain inexplicably popular, expensive, fragile, low self-esteem proprietary operating systems, it’s easy and fun. No hoops to jump, no blurry eleventy-eight digit registration numbers, no mother-may-I, no phoning your activities home to the mother ship: just download and start playing.

Just to keep it simple let’s start with a clean new empty hard disk. Thanks to SATA and USB adding new hard drives is dead-easy, which I know is totally obvious, but we should regularly take time out to be thankful for cool things like SATA and USB. Because adding new hard disks in the olden days was not easy, and we made do with megabytes. That’s right, not giga- and terabytes. Oh, the hardships.

But I digress. So here we are with our new hard disk all ready to be populated with Linuxes and reams of data files. (Why not reams of data? We still dial our phones.) The first thing to do is to write a new partition table to your hard disk with GPT, the GUID partition table. This is the new replacement for the creaking and inadequate old MS-DOS partition table. So how do you install a new GUID partition table? GParted provides a pleasant graphical interface, and command-line commandos might enjoy GPT fdisk. Use a nice bootable rescue distro like SystemRescue to partition and format your new drive, or use the partitioning tool in the installer of whatever Linux you are installing.

You may also elect to stick with the musty old MS-DOS partition table if you prefer; the point is to use a partitioning scheme that puts your data files off in their own little separate world.

Partitioning Scheme

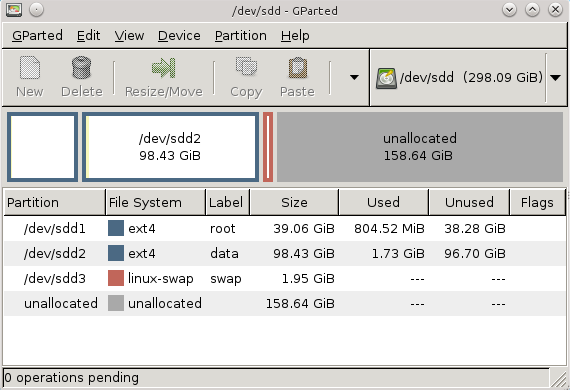

You could do it this simply: root, data, and swap (figure 1).

Using labels helps you know what’s on each partition. This particular partitioning scheme is simple: just map your home directory to the data partition. Your home directory could even be on a separate hard disk. How do you do this? Do it with the installer’s partitioning tool when you install a new distro. Or do it post-installation in /etc/fstab, which thankfully is the same as it’s always been and has not been “improved” to the point that only a kernel hacker understands how to use it. Like this example:

# /home on sdb4
UUID=89bc6f52-fa07-45a9-b443-25bb65279d6a /home ext4 defaults
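If you are wondering where the UUID comes from, the blkid command prints it for a given partition. Here is a small sketch that turns those values into an fstab line; fstab_entry is a hypothetical helper, and the trailing "0 2" fields (no dump, fsck after the root filesystem) are my own common-practice addition rather than part of the example above.

```shell
# Print an fstab entry for a partition identified by UUID.
# fstab_entry is a hypothetical helper; $1 = UUID, $2 = mount point, $3 = filesystem type.
fstab_entry() {
    uuid=$1
    mount_point=$2
    fs_type=$3
    printf 'UUID=%s %s %s defaults 0 2\n' "$uuid" "$mount_point" "$fs_type"
}

# Look the values up with blkid (usually needs root), for example:
#   uuid=$(sudo blkid -s UUID -o value /dev/sdb4)
#   type=$(sudo blkid -s TYPE -o value /dev/sdb4)
#   fstab_entry "$uuid" /home "$type"
```

The function only prints the line. Review it before appending it to /etc/fstab, and run sudo mount -a afterwards to catch mistakes before the next reboot.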

Now you can muck with the root filesystem all you want and it won’t touch /home. This has one flaw, and that is dotfiles are stored in the same place as your data files. This has the potential to create a configuration mess when you have even slightly different versions of the same desktop environment, whether it’s on a multi-boot setup or installing a different distro with the same DE. Another potential problem to look out for is your mail store; some mail clients default to putting your messages in a dotfile. I recommend creating a normal, not-hidden directory for your mail store.

Clever Partitioning Scheme

So here is my clever tweak to avoid dotfile hassles, and that is to keep /home in the root filesystem. Then create a symlink from your homedir to your data partition, which contains only your data files and no dotfiles. This creates an extra level in your filepaths, which is a bit of an inconvenience, but then you get the best of all worlds: your personal dotfiles in /home, and the root filesystem cleanly separated from your data files.

But, you say, I want the same configs in multiple distros! No worries, just copy your dotfiles to your different homedirs. Though the reason for not sharing them in the first place is to avoid mis-configurations and conflicts, so don’t say I didn’t warn you.

More Clever Partitioning

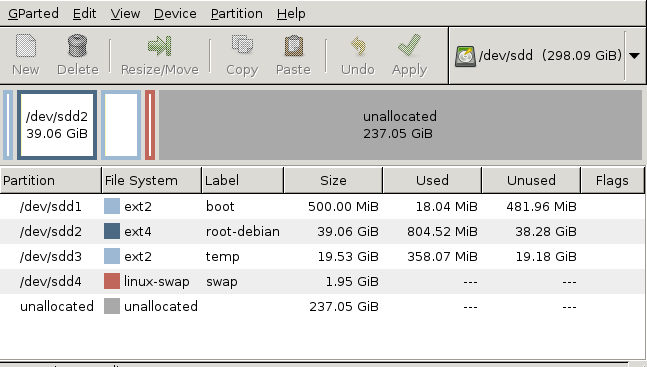

You can share /boot, /tmp and swap on a multiboot system (figure 2). Just remember, when you install a new Linux, to map it to these partitions. It’s nice to have /tmp on its own partition in case some process goes nuts and fills it. /var is also a good candidate to have its own partition, but you can’t share it; each Linux installation must have its own /var.

I give my /boot partition 500MB to a gigabyte on a multi-boot system. The Linux kernel and System.map run around 3-5MB, but initrd on some of my systems hits 30MB and up. I’m not interested in finicky housekeeping and keeping old /boot files cleaned up, so it hits 150MB for a single distro easily.

But What If

What if you already have a separate /home partition, complete with dotfiles, and you want to overwrite your root partition with a different Linux, or install some new distros to multi-boot, and still share your homedir? Easy peasy, though a bit of work: first move all your dotfiles into a new directory in your homedir. Rename your original /home directory to something that doesn’t conflict with the root filesystem like /data or /myfiles or whatever. Then install your new Linux or Linuxes and keep /home in the root directory, rather than putting it on a separate partition. Then symlink /data inside your new homedir, like this:

$ ln -s /data /home/carla/data

You’ll want to create an entry in /etc/fstab to make sure your /data partition is mounted at boot, like this example:

# /data on sdc3
UUID=3f84881f-507a-4676-8431-7771a6bc6d39 /data ext4 defaults

When you install a new Linux it automatically installs a set of default dotfiles, and also when you install new applications. If there is anything you need from your original set of dotfiles just copy them to wherever you need them.
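The "move all your dotfiles into a new directory" step is easy to get wrong with a careless glob, because a plain .* also matches . and .. on many shells. A cautious sketch follows; stash_dotfiles and the old-dotfiles directory name are my own inventions, and you should try it on a scratch directory first.

```shell
# Move every dotfile and dot-directory directly under $1 into the directory $2.
# stash_dotfiles is a hypothetical helper. The stash directory must not itself
# be a dot-directory inside $1, or the loop would try to move it into itself.
stash_dotfiles() {
    home_dir=$1
    stash_dir=$2
    mkdir -p "$stash_dir"
    # .[!.]* matches names like .bashrc; ..?* matches names like ..foo;
    # neither pattern matches the special entries . and ..
    for entry in "$home_dir"/.[!.]* "$home_dir"/..?*; do
        # Skip a pattern that matched nothing
        [ -e "$entry" ] || [ -L "$entry" ] || continue
        mv -- "$entry" "$stash_dir"/
    done
}

# Example:
#   stash_dotfiles /home/carla /home/carla/old-dotfiles
```

Do this from a console login or another account rather than from inside a running desktop session, since the desktop will keep rewriting its own dotfiles while you move them.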

What if everything is in your root filesystem and you don’t have a separate /home partition? Again all you do is create new partitions, symlinks, and appropriate entries in /etc/fstab.

Be sure to consult “du Know How Big Your Linux Files Are?” and “Linux Tips: The Misunderstood df Command” for cool ways to manage filesystems and see what’s going on in them, and “GPT, the GUID partition table” to learn more about GPT and UUIDs.

A Summer Spent on the LLVM Clang Static Analyzer for the Linux Kernel

As a kid, and some ten years before he started using Linux, Eduard Bachmakov dreamed of one day being involved in open source software. He didn’t really know how code worked, but thought the idea of collaborative global development, free of corporate interests, was cool. He started by playing around with virtual machines and dual boot, but didn’t make the full switch to Linux until he got to college, he said.

Now a dual-degree major in computer engineering, astrophysics and astronomy in his senior year at Villanova University in Pennsylvania, Bachmakov is doing some real programming. And this past summer, through his Google Summer of Code internship with The Linux Foundation, he worked on Linux for the first time.

Bachmakov contributed to the LLVM Clang Static Analyzer for the Linux kernel with LLVM project lead Behan Webster and Linux Foundation trainer Jan-Simon Moeller as one of 15 GSoC interns with the Linux Foundation this summer. The analyzer is a userspace tool used at compile time to find bugs in a patch before it’s submitted, Bachmakov said. It was an appealing project to him, not only from a technical perspective but because it could have an impact on a larger group of people as support for the Clang compiler for the kernel grows, he said.

“People have heard about it, and some are using it a little bit, but no one is even close to using it near its full potential,” Bachmakov said. “So ideally if this tool for development would be developed further, a lot of sleepless nights would no longer occur.”

Say, for example, you’re allocating memory and for whatever reason end up freeing it twice, Bachmakov says. “A compiler can’t find that, but through a static analyzer you can write a checker that keeps track of where you’ve allocated memory and find paths through your program to find where you’d done it twice.”
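For readers who want to see that kind of bug for themselves, here is a minimal sketch that writes a tiny C file with a deliberate double free and runs Clang's analyzer over it. This is not Bachmakov's checker tool or kernel code; the file name and the release_twice function are made up for illustration, and the script simply skips the analysis step if clang is not installed.

```shell
# Work in a scratch directory so nothing is left in the current one.
workdir=$(mktemp -d)

# A made-up C file containing a deliberate double free.
cat > "$workdir/double_free.c" <<'EOF'
#include <stdlib.h>

void release_twice(void)
{
    char *buf = malloc(16);
    if (!buf)
        return;
    free(buf);
    free(buf);   /* second free of the same pointer */
}
EOF

# Run the Clang static analyzer on the file if clang is available.
# The analyzer writes its report files next to the source, hence the cd.
if command -v clang >/dev/null 2>&1; then
    (cd "$workdir" && clang --analyze double_free.c) ||
        echo "clang --analyze exited with an error"
else
    echo "clang not found; skipping analysis"
fi
```

With a default analyzer configuration, the memory checker (unix.Malloc) is the one I would expect to flag the second free. For a whole project, the scan-build wrapper that ships with Clang can run the same analysis around a normal make invocation.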

Much work toward creating a static analyzer for the Linux kernel had already been done as part of the LLVM project. One of the goals of Bachmakov’s internship was to demonstrate how the analyzer works through a tool that traces where errors come from and creates a report. (See an example of his checker tool, here.) He also set out to make a selection of checkers that make sense within the kernel.

“A lot (of checks) while technically correct, don’t apply. Many checks are just omitted because it’s understood that this would never happen,” Bachmakov said. “These are issues that can’t be read from the code. These are things you have to know, so there were a lot of false positives.”

While his main concern now is prepping job and graduate school applications, Bachmakov says he’ll continue to contribute to open source projects. He’s playing around with a small Snapdragon development board to see if he can build a kernel for it and compile it with Clang.

He doesn’t quite feel ready to be a kernel developer, he says, but “whatever my job ends up being I’ll try to incorporate as much Linux and open source as I possibly can.”

Editor’s note: Stay tuned in coming weeks for our series of profiles on Linux Foundation GSoC interns. If you’re interested in learning more about Google Summer of Code internships in 2014 please visit: http://www.google-melange.com/gsoc/homepage/google/gsoc2014

The next round of applications starts Feb. 3, 2014.