The Linux world abounds in monitoring apps of all kinds. We’re going to look at my three favorite service monitors: apachetop, Monit, and Supervisor. They’re all small and fairly simple to use: apachetop is a simple real-time Apache monitor, Monit monitors and manages any service, and Supervisor is a nice tool for managing persistent scripts and commands without having to write init scripts for them.

Monit

Monit is my favorite, because it provides the perfect blend of simplicity and functionality. To quote man monit:

monit is a utility for managing and monitoring processes, files, directories and filesystems on a Unix system. Monit conducts automatic maintenance and repair and can execute meaningful causal actions in error situations. E.g. Monit can start a process if it does not run, restart a process if it does not respond and stop a process if it uses too much resources. You may use Monit to monitor files, directories and filesystems for changes, such as timestamps changes, checksum changes or size changes.

Monit is a good choice when you’re managing just a few machines and don’t want to hassle with the complexity of something like Nagios or Chef. It works best as a single-host monitor, but it can also monitor remote services, such as database or file servers, which is useful when local services depend on them. The coolest feature is that you can monitor any service, as the configuration examples show.

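Let’s start with its simplest usage. Uncomment these lines in /etc/monit/monitrc:

```
set daemon 120

set httpd port 2812 and
    use address localhost
    allow localhost
    allow admin:monit
```

The first line tells Monit to check its services every 120 seconds; each check is one "cycle." The remaining lines enable the built-in web server on port 2812, accept connections only from localhost, and set the login to user admin with password monit.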

Start Monit, and then use its command-line status checker:

```
$ sudo monit
$ sudo monit status
The Monit daemon 5.16 uptime: 9m

System 'studio.alrac.net'
  status                       Running
  monitoring status            Monitored
  load average                 [0.17] [0.23] [0.14]
  cpu                          0.8%us 0.2%sy 0.5%wa
  memory usage                 835.7 MB [5.3%]
  swap usage                   0 B [0.0%]
  data collected               Mon, 04 Sep 2017 13:04:59
```

If you see the message “/etc/monit/monitrc:289: Include failed — Success ‘/etc/monit/conf.d/*'” that is a bug, and you can safely ignore it.

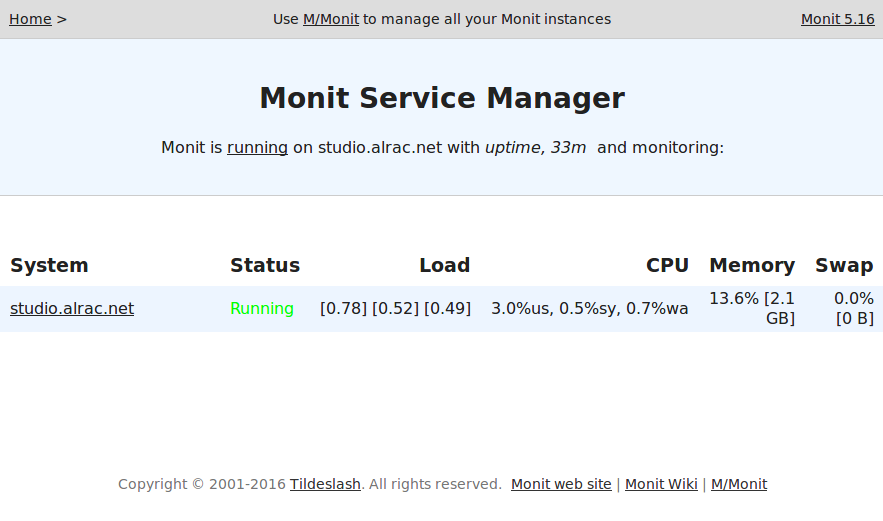

Monit has a built-in HTTP server. Open a Web browser to http://localhost:2812. The default login is user admin with password monit, as set by the allow admin:monit line in /etc/monit/monitrc. You should see something like Figure 1.

Click on the system name to see more statistics, including memory, CPU, and uptime.
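You can also check the web interface from a script instead of a browser. The allow admin:monit line works as HTTP Basic authentication, so a script only has to send those credentials. This is a minimal sketch that assumes the port and the admin:monit login from the monitrc lines above. The constant and function names are hypothetical and not part of Monit.

```python
import base64
import urllib.request

# Assumed values: they mirror "set httpd port 2812" and "allow admin:monit"
# from monitrc. Change them if you edited those lines.
MONIT_URL = "http://localhost:2812/"
MONIT_USER = "admin"
MONIT_PASSWORD = "monit"


def build_monit_request(url=MONIT_URL, user=MONIT_USER, password=MONIT_PASSWORD):
    """Build a GET request that carries HTTP Basic credentials for Monit."""
    token = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(url, headers={"Authorization": f"Basic {token}"})


def monit_web_ui_is_up(timeout=5.0):
    """Return True if the web interface answers with HTTP 200, else False."""
    try:
        with urllib.request.urlopen(build_monit_request(), timeout=timeout) as resp:
            return resp.status == 200
    except OSError:
        # URLError and HTTPError are OSError subclasses, so refused
        # connections, timeouts, and 401 responses all end up here.
        return False


if __name__ == "__main__":
    print("Monit web UI reachable:", monit_web_ui_is_up())
```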

That is fun and easy, and so is adding more services to monitor, like this example for the Apache HTTP server on Ubuntu.

```
check process apache with pidfile /var/run/apache2/apache2.pid
    start program = "/usr/sbin/service apache2 start" with timeout 60 seconds
    stop program  = "/usr/sbin/service apache2 stop"
    if cpu > 80% for 5 cycles then restart
    if totalmem > 200.0 MB for 5 cycles then restart
    if children > 250 then restart
    if loadavg(5min) greater than 10 for 8 cycles then stop
    depends on apache2.conf, apache2
    group server
```
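The "for N cycles" clauses are what keep Monit from restarting Apache over a single busy moment. A test has to fail on consecutive polling cycles before the action fires. With set daemon 120, for 5 cycles means the CPU must stay above 80% for roughly 10 minutes, and the loadavg rule's 8 cycles is about 16 minutes. The following is a simplified, hypothetical model of that counting. It is not Monit's implementation, and resetting the counter after acting is a choice made for this sketch.

```python
from dataclasses import dataclass, field

# Hypothetical model of Monit's "if <test> for N cycles then <action>" rule.
# It is not Monit's code; it only shows that the test has to fail on
# N consecutive polling cycles before the action fires.

POLL_INTERVAL_SECONDS = 120  # matches "set daemon 120" in monitrc


@dataclass
class CycleRule:
    threshold: float  # e.g. 80.0 for "if cpu > 80%"
    cycles: int       # e.g. 5 for "for 5 cycles"
    action: str       # e.g. "restart"
    failures: int = field(default=0, init=False)

    def observe(self, value):
        """Feed one cycle's reading; return the action name when the rule fires."""
        if value > self.threshold:
            self.failures += 1
        else:
            self.failures = 0  # a single good cycle resets the count
        if self.failures >= self.cycles:
            self.failures = 0  # this sketch starts counting afresh after acting
            return self.action
        return None


def cycles_in_minutes(rule, interval=POLL_INTERVAL_SECONDS):
    """Wall-clock length of the rule's cycle count, in minutes."""
    return rule.cycles * interval / 60
```

Feeding it CPU readings of 85, 90, 95, 99, 70 never fires, because the 70 resets the count. Five readings above 80 in a row return "restart".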

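The depends on line refers to two other Monit checks, apache2.conf and apache2, which must also be defined in your configuration. The apache2 check is the file check shown below.

Use the appropriate commands for your Linux distribution. On Debian and Ubuntu, find your PID file with this command:

```
$ echo $(. /etc/apache2/envvars && echo $APACHE_PID_FILE)
```

The various distros package Apache differently. For example, on CentOS 7 the service is named httpd, so use systemctl start httpd and systemctl stop httpd.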
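The pidfile line only helps if the file holds the PID of a live process. Here is a rough sketch of that check, assuming a pidfile that contains a single number. Sending signal 0 tests whether a process exists without disturbing it. This illustrates the idea only; Monit has its own internal logic, and the function names are hypothetical.

```python
import os


def read_pidfile(path):
    """Return the PID stored in a pidfile such as /var/run/apache2/apache2.pid."""
    with open(path, encoding="ascii") as f:
        return int(f.read().strip())


def pid_is_running(pid):
    """Signal 0 checks that a process exists without actually signalling it."""
    try:
        os.kill(pid, 0)
    except ProcessLookupError:
        return False  # no such process: the pidfile is stale
    except PermissionError:
        return True   # the process exists but belongs to another user, e.g. root
    return True


if __name__ == "__main__":
    pidfile = "/var/run/apache2/apache2.pid"  # the Ubuntu path from the example
    try:
        pid = read_pidfile(pidfile)
    except (OSError, ValueError):
        print(f"{pidfile}: missing or unreadable")
    else:
        print(f"PID {pid} running: {pid_is_running(pid)}")
```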

After saving your changes, run the syntax checker, and then reload:

```
$ sudo monit -t
Control file syntax OK
$ sudo monit reload
Reinitializing monit daemon
```

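This example shows how to monitor key files and alert you to changes. The Apache binary should not change, except when you upgrade.

```
check file apache2 with path /usr/sbin/apache2
    if failed checksum then exec "/watch/dog"
    else if recovered then alert
```

Replace /watch/dog with the program you want Monit to run when the checksum changes. The else if recovered then alert line sends an alert once the file passes the test again.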
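Under the hood, a checksum test means recording a digest of the file once and comparing it on later cycles. Monit supports MD5 and SHA1 digests for this test and stores the baseline itself. The sketch below only illustrates the idea with Python's hashlib, and its function names are hypothetical. Reading in chunks keeps memory use flat even for large binaries.

```python
import hashlib
from pathlib import Path


def file_digest(path, algorithm="md5", chunk_size=65536):
    """Hash a file in chunks so large binaries need not fit in memory."""
    h = hashlib.new(algorithm)
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def checksum_changed(path, baseline, algorithm="md5"):
    """True when the file no longer matches the recorded baseline digest."""
    return file_digest(path, algorithm) != baseline


if __name__ == "__main__":
    binary = Path("/usr/sbin/apache2")  # the Ubuntu path from the example
    if binary.exists():
        print(f"{binary}: md5 {file_digest(binary)}")
```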

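This example configures email alerting by adding my mail server:

```
set mailserver smtp.alrac.net
```

Alerts also need a recipient, which you set with a line such as set alert you@example.com (a placeholder; use your own address).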

monitrc includes a default email template, which you can tweak however you like.

The Monit man page (man monit) is well written and thorough, and tells you everything you need to know, including command-line operation, reserved keywords, and a complete syntax description.

apachetop

apachetop is a simple live monitor for Apache servers. It reads your Apache logs and displays updates in real time. I use it as a fast, easy debugging tool: you can test different URLs and immediately see the results, including files requested, hits, and response times.

```
$ apachetop
last hit: 20:56:39         atop runtime:  0 days, 00:01:00             20:56:56
All:           12 reqs (   0.5/sec)         22.4K (  883.2B/sec)    1913.7B/req
2xx:       6 (50.0%) 3xx:       4 (33.3%) 4xx:     2 (16.7%) 5xx:     0 ( 0.0%)
R ( 30s):      12 reqs (   0.4/sec)         22.4K (  765.5B/sec)    1913.7B/req
2xx:       6 (50.0%) 3xx:       4 (33.3%) 4xx:     2 (16.7%) 5xx:     0 ( 0.0%)

 REQS REQ/S    KB KB/S URL
    5  0.19  17.2  0.7*/
    5  0.19   4.2  0.2 /icons/ubuntu-logo.png
    2  0.08   1.0  0.0 /favicon.ico
```
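The header lines in that output are simple arithmetic over the requests apachetop has seen. Each status class's percentage is its share of the requests: 6, 4, and 2 of 12 give 50.0%, 33.3%, and 16.7%. The 12 requests in the 30-second window of the R ( 30s) line give 0.4 requests per second. The sketch below reproduces those figures from a hypothetical list of (timestamp, status, bytes) tuples. It is not apachetop's code, only the same calculations.

```python
from collections import Counter


def summarize(hits, now, window_seconds=30):
    """Summarize (timestamp, status, nbytes) tuples like apachetop's header.

    Only hits within the last `window_seconds` before `now` count,
    mirroring the "R ( 30s)" line.
    """
    recent = [h for h in hits if now - h[0] <= window_seconds]
    total = len(recent)
    if total == 0:
        return {"reqs": 0, "reqs_per_sec": 0.0, "bytes_per_req": 0.0, "classes": {}}
    classes = Counter(f"{status // 100}xx" for _, status, _ in recent)
    total_bytes = sum(nbytes for _, _, nbytes in recent)
    return {
        "reqs": total,
        "reqs_per_sec": total / window_seconds,
        "bytes_per_req": total_bytes / total,
        # Percentage of requests in each status class, e.g. {"2xx": 50.0}.
        "classes": {c: 100.0 * n / total for c, n in sorted(classes.items())},
    }
```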

You can specify a particular logfile with the -f option, or multiple logfiles like this: apachetop -f logfile1 -f logfile2. Another useful option is -l, which converts all URLs to lowercase. Without it, the same URL requested in both uppercase and lowercase is counted as two different URLs.
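To see what the -f and -l options change, here is a sketch that tallies requests per URL across several logs and can optionally fold URLs to lowercase. It assumes Apache's common or combined log format. The regular expression, the function names, and the sample lines in the test are illustrative and have nothing to do with apachetop's own parser.

```python
import re
from collections import Counter

# Matches the start of Apache's common/combined log format, for example:
# 127.0.0.1 - - [04/Sep/2017:20:56:39 -0400] "GET /favicon.ico HTTP/1.1" 404 503
LOG_LINE = re.compile(
    r'^(?P<host>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<url>\S+)[^"]*" (?P<status>\d{3}) (?P<size>\d+|-)'
)


def count_urls(lines, lowercase=False):
    """Tally requests per URL, optionally folding case like apachetop -l."""
    counts = Counter()
    for line in lines:
        m = LOG_LINE.match(line)
        if m is None:
            continue  # skip lines in other formats
        url = m.group("url")
        counts[url.lower() if lowercase else url] += 1
    return counts


def count_urls_in_files(paths, lowercase=False):
    """Combine several logs, similar to apachetop -f log1 -f log2."""
    total = Counter()
    for path in paths:
        with open(path, encoding="utf-8", errors="replace") as f:
            total.update(count_urls(f, lowercase))
    return total
```

Without lowercasing, /Index.html and /index.html get separate rows. With lowercase=True they merge into one.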

Supervisor

Supervisor is a slick tool for managing scripts and commands that don’t have init scripts. It saves you from having to write your own, and it’s much easier to use than systemd.

On Debian/Ubuntu, Supervisor starts automatically after installation. Verify with ps:

```
$ ps ax|grep supervisord
 7306 ?        Ss     0:00 /usr/bin/python /usr/bin/supervisord -n -c /etc/supervisor/supervisord.conf
```

Let’s practice with our Python hello world script from last week. Set it up in /etc/supervisor/conf.d/helloworld.conf:

```
[program:helloworld.py]
command=/bin/helloworld.py
autostart=true
autorestart=true
stderr_logfile=/var/log/hello/err.log
stdout_logfile=/var/log/hello/hello.log
```

Create the /var/log/hello directory first, because Supervisor does not create missing log directories.
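Last week’s script isn’t reproduced here, so this is a hypothetical stand-in written with Supervisor in mind. Supervisor runs the command line directly, so /bin/helloworld.py needs a shebang line and the executable bit (chmod +x /bin/helloworld.py). The program should also stay in the foreground rather than daemonize itself. Flushing matters because Python buffers stdout when it goes to a file instead of a terminal, so without a flush hello.log can stay empty for a long time. The run() parameters exist only so the sketch can be tested.

```python
#!/usr/bin/env python3
"""Hypothetical stand-in for /bin/helloworld.py, meant to run under Supervisor."""
import time


def emit_line(message):
    # flush=True pushes each line to Supervisor's log file immediately;
    # otherwise Python buffers output when stdout is not a terminal.
    print(message, flush=True)


def run(count=None, interval=5.0, emit=emit_line):
    """Emit a greeting every `interval` seconds; loop forever when count is None.

    There is no forking or daemonizing: Supervisor expects the program
    to stay in the foreground.
    """
    printed = 0
    while count is None or printed < count:
        emit("Hello World!")
        printed += 1
        if count is None or printed < count:
            time.sleep(interval)
    return printed


if __name__ == "__main__":
    run()
```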

Now Supervisor needs to re-read the conf.d/ directory, and then apply the changes:

```
$ sudo supervisorctl reread
$ sudo supervisorctl update
```

Supervisor reports the new program as it reads and applies the configuration. Check the new logfile to verify that the script is running:

```
$ sudo supervisorctl reread
helloworld.py: available
$ sudo supervisorctl update
helloworld.py: added process group
$ tail /var/log/hello/hello.log
Hello World!
Hello World!
Hello World!
Hello World!
```

See? Easy.
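Besides tailing the log, you can ask Supervisor directly with sudo supervisorctl status. Each output line starts with the program name and its state, such as RUNNING or STOPPED, followed by free-form details. Here is a minimal sketch that parses that output, assuming the name and state are the first two whitespace-separated fields. The function names and the sample lines in the test are illustrative.

```python
import subprocess


def parse_status(text):
    """Map program name -> state from `supervisorctl status` output."""
    states = {}
    for line in text.splitlines():
        parts = line.split(None, 2)  # name, state, free-form description
        if len(parts) >= 2:
            states[parts[0]] = parts[1]
    return states


def program_states():
    """Run supervisorctl and parse its output.

    The article runs supervisorctl with sudo, so run this as root too.
    check=False because supervisorctl may exit nonzero when a program
    is not running, and the output is still worth parsing.
    """
    result = subprocess.run(
        ["supervisorctl", "status"], capture_output=True, text=True, check=False
    )
    return parse_status(result.stdout)
```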

Visit the Supervisor website at supervisord.org for complete and excellent documentation.
