This post is about DeepSPADE (alias DeepSmokey), a deep learning system I’ve created, and how it’s being used to build better Internet communities.

To begin, what is DeepSPADE, and what does it do?

DeepSPADE stands for Deep Spam Detection. Its job is a natural language classification task: using machine learning to tell spam from non-spam posts on public community forums.

One such website is Stack Exchange (SE), a network of over 169 different web forums covering everything from programming and artificial intelligence to personal finance, Linux, and much more!

Stack Overflow (SO), the SE forum dedicated to general programming, is the world’s most popular forum site for coders. With over 14,500,000 questions asked during the seven years it’s been up, and 6,500,000 of those questions answered, you can see how popular it truly is.

However, like any public website, Stack Overflow is cluttered with garbage. While most members of this community are legitimately interested in sharing their knowledge or getting help from others, there are some who seek to spam the website. In fact, there are more than 30 spam posts every day on SO, on average.

To combat this, the SmokeDetector system was designed and developed by a group of programmers called Charcoal SE. SmokeDetector uses a large set of regular expressions (RegEx) to find spam messages based on their content.
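To give a feel for what RegEx-based detection looks like, here is a tiny sketch. The patterns and function names are made up for illustration; they are not SmokeDetector’s actual rules, which are far more extensive.

```python
import re

# Hypothetical patterns for illustration only; NOT SmokeDetector's real rules.
SPAM_PATTERNS = [
    re.compile(r"\b(?:buy|order)\s+now\b", re.IGNORECASE),
    re.compile(r"\bweight\s+loss\s+(?:pills?|supplements?)\b", re.IGNORECASE),
    re.compile(r"\bcall\s+(?:us\s+)?(?:at\s+)?\+?\d[\d\s-]{7,}\d\b", re.IGNORECASE),
]


def matched_patterns(post):
    """Return the source of every pattern that matches the post."""
    return [p.pattern for p in SPAM_PATTERNS if p.search(post)]


def looks_like_spam(post):
    """Flag a post if at least one pattern matches."""
    return bool(matched_patterns(post))


print(looks_like_spam("Buy now and get the best weight loss pills!"))
print(looks_like_spam("How do I reverse a linked list in Python?"))
```

The weakness is easy to see: every new spam trick needs a new hand-written pattern, which is the gap a learned classifier tries to close.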

Once I, a big supporter of Machine Learning, found out they used RegEx for their spam classification, I immediately shouted “Why not Deep Learning?!?” This idea was welcomed by the Charcoal Community; in fact, the reason they hadn’t incorporated it earlier was that they didn’t have anybody who worked with machine learning. I joined the Charcoal Community and began developing DeepSPADE to contribute towards their mission.

The DeepSPADE Model

DeepSPADE uses a combination of Convolutional Neural Networks (CNNs) and Gated Recurrent Units (GRUs) to run this classification task. The word-vectors it uses to actually understand the natural language that it’s given are word2vec vectors trained before the actual model’s training starts. However, during model training, the vectors are fine-tuned to achieve optimal performance.
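The idea of fine-tuning pretrained vectors can be sketched in plain Python. This is a toy, not DeepSPADE’s training code: the 2-dimensional vectors are invented stand-ins for word2vec output, and the classifier is a simple logistic regression over the mean word vector. What matters is that the gradient reaches the word vectors themselves, so they move away from their pretrained starting values.

```python
import math

# Toy "pretrained" vectors standing in for word2vec output (values are made up).
embeddings = {
    "free": [0.9, 0.1],
    "pills": [0.8, 0.3],
    "array": [0.1, 0.9],
    "loop": [0.2, 0.8],
    "linux": [0.5, 0.5],  # never appears in the training posts below
}
weights = [0.5, -0.5]  # classifier weights, also trainable
bias = 0.0


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def mean_vector(words):
    return [sum(embeddings[w][i] for w in words) / len(words) for i in range(2)]


def predict(words):
    """Probability of spam, computed from the mean word vector."""
    mean = mean_vector(words)
    return sigmoid(sum(w * m for w, m in zip(weights, mean)) + bias)


def train_step(words, label, lr=0.5):
    """One SGD step on log loss; updates the classifier AND the word vectors."""
    global bias
    mean = mean_vector(words)
    error = predict(words) - label  # d(loss)/d(logit) for log loss
    for i in range(2):
        grad_weight = error * mean[i]
        grad_mean = error * weights[i]
        # Each word in the post receives an equal share of the gradient.
        for w in words:
            embeddings[w][i] -= lr * grad_mean / len(words)
        weights[i] -= lr * grad_weight
    bias -= lr * error


before = list(embeddings["free"])
untouched = list(embeddings["linux"])
for _ in range(20):
    train_step(["free", "pills"], 1)  # spam example
    train_step(["array", "loop"], 0)  # non-spam example

print("free:", before, "->", embeddings["free"])
```

Words that never show up in training, like the hypothetical "linux" entry here, keep their pretrained vectors unchanged.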

The Neural Network (NN) is designed in Keras with a TensorFlow (TF) back end (TF provides significant performance gains over Theano). Figure 1 shows a very long diagram of the model itself.

As you can see, the model I’ve designed is very deep. In fact, not only is it deep, it’s a parallel model.

Let’s start off with a question that a lot of people have: Why are you using CNNs and GRUs? Why not just either one of those layers?

The answer lies deep within the actual working of these two layers. Let’s break them down:

CNNs understand patterns in data that aren’t time-bound. This means that the CNN doesn’t track the order of the whole post; it picks up short, local patterns wherever they occur in the text. This is helpful if there is a very specific word or phrase that we know is almost always related to spam or non-spam.
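A small plain-Python sketch shows this position-independence. The one-number "embeddings" and the kernel are invented for the example: 1.0 marks a spammy token, and the kernel fires on two spammy tokens in a row. With max pooling on top, the response is the same whether the phrase is at the start or the end of the post.

```python
def conv1d(sequence, kernel):
    """Valid 1D convolution (cross-correlation) over a list of numbers."""
    k = len(kernel)
    return [
        sum(sequence[i + j] * kernel[j] for j in range(k))
        for i in range(len(sequence) - k + 1)
    ]


def global_max_pool(feature_map):
    """Keep only the strongest response, discarding where it happened."""
    return max(feature_map)


# Hypothetical 1-d "embedding" per token: 1.0 = spammy token, 0.0 = anything else.
spam_early = [1.0, 1.0, 0.0, 0.0, 0.0, 0.0]
spam_late = [0.0, 0.0, 0.0, 0.0, 1.0, 1.0]
kernel = [1.0, 1.0]  # responds to two spammy tokens in a row

early = global_max_pool(conv1d(spam_early, kernel))
late = global_max_pool(conv1d(spam_late, kernel))
print(early, late)
```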

GRUs (or RNNs – Recurrent Neural Networks – in general) understand patterns in data that are very specifically arranged in a time-series. This means that the RNN understands the order of words, and this is helpful because some words may convey entirely different concepts depending on the words around them and the order they appear in.
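To see order-sensitivity in action, here is a deliberately tiny GRU cell with a single number as its hidden state. The weights and the +1/-1 token values are toy assumptions, and the update rule follows one common GRU convention (libraries differ on which side the update gate multiplies). Feeding the same two tokens in opposite orders leaves the cell in different final states, even though a simple sum of the tokens would be identical.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def gru_step(h, x, w=1.0, u=1.0):
    """One step of a scalar GRU cell; every gate shares the toy weights w and u."""
    z = sigmoid(w * x + u * h)  # update gate: how much of the state to rewrite
    r = sigmoid(w * x + u * h)  # reset gate: how much of the old state to consult
    candidate = math.tanh(w * x + u * (r * h))
    return (1.0 - z) * h + z * candidate


def run_gru(sequence):
    """Feed a sequence through the cell and return the final hidden state."""
    h = 0.0
    for x in sequence:
        h = gru_step(h, x)
    return h


forward = run_gru([1.0, -1.0])
backward = run_gru([-1.0, 1.0])
print(forward, backward)
```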

When these two layers are combined in a specific way to highlight their advantages, the real magic happens!

In fact, to explain why the combination is so powerful, take a look at the following “evolution” of the accuracy of the DeepSPADE system on 16,000 testing rows:

- 65% – Baseline accuracy with Convolutional Neural Networks
- 69% – With deeper Convolutional Neural Networks
- 75% – With introduction of higher quantity & quality of data
- 79% – With small improvements to model
- 85% – With LSTMs introduced along with CNN model (no parallelism)
- 89% – With higher embedding size, deeper CNN and LSTM
- 96% – With GRUs instead of LSTMs, more Dropout, more Pooling, and higher embedding size
- 98.76% – With Parallel model & higher embedding size
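Percentages can hide how big these jumps are. A quick bit of arithmetic turns a few of the accuracy figures above into approximate error counts on the 16,000 testing rows (the helper name is just for this example):

```python
TEST_ROWS = 16_000


def misclassified(accuracy_percent, rows=TEST_ROWS):
    """Approximate number of wrong predictions at a given accuracy."""
    return round(rows * (100 - accuracy_percent) / 100)


for accuracy in (65, 85, 96, 98.76):
    print(f"{accuracy}% accuracy -> about {misclassified(accuracy)} errors")
```

Going from the baseline to the parallel model takes the system from thousands of misclassified posts to roughly a couple of hundred.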

So why does the model start with convolutional layers? The answer, again, lies in how the CNN itself works: It has a very strong ability to filter out noise and look at the signal of some content – plus, a CNN is much faster to train and run than an RNN.

So, the three Conv1D+Dropout+MaxPool groups in the beginning act as filters. They create many representations of the data, each highlighting a different aspect of it. They also work to decrease the size of the data while preserving the signal.

After that, the result of those groups splits into two different parts:

- It goes into a Conv1D+Flatten+Dense.
- It goes into a group of 3 GRU+Dropout, and then a Flatten+Dense.

Why the parallelism? Because again, the two networks look for different types of patterns. While the GRU finds ordered patterns, the CNN finds patterns “in general”.

Once the opinion of both Neural Nets is collected, the opinions are concatenated and fed through another Dense layer, which understands patterns and relationships as to when each Neural Network’s results or opinions are more important. It does this dynamic weighting and feeds into another Dense layer, which gives the output of the model.
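For readers who think in code, the layout described above could be sketched with the Keras functional API roughly like this. Treat it as an approximation of the shape of the model, not the real DeepSPADE network: the vocabulary size, sequence length, filter counts, unit counts, dropout rates and activations are all guesses of mine, and the embedding here starts from random values rather than word2vec vectors.

```python
from tensorflow import keras

layers = keras.layers

# All sizes below are illustrative guesses, not DeepSPADE's real hyperparameters.
VOCAB_SIZE = 20_000
MAX_LEN = 400
EMBED_DIM = 128


def build_parallel_model():
    tokens = keras.Input(shape=(MAX_LEN,), dtype="int32")
    x = layers.Embedding(VOCAB_SIZE, EMBED_DIM)(tokens)

    # Three Conv1D + Dropout + MaxPool groups: filter noise, shorten the sequence.
    for _ in range(3):
        x = layers.Conv1D(64, 5, padding="same", activation="relu")(x)
        x = layers.Dropout(0.3)(x)
        x = layers.MaxPooling1D(2)(x)

    # Branch 1: Conv1D + Flatten + Dense.
    cnn = layers.Conv1D(64, 5, padding="same", activation="relu")(x)
    cnn = layers.Flatten()(cnn)
    cnn = layers.Dense(64, activation="relu")(cnn)

    # Branch 2: three GRU + Dropout groups, then Flatten + Dense.
    rnn = x
    for _ in range(3):
        rnn = layers.GRU(64, return_sequences=True)(rnn)
        rnn = layers.Dropout(0.3)(rnn)
    rnn = layers.Flatten()(rnn)
    rnn = layers.Dense(64, activation="relu")(rnn)

    # Concatenate both "opinions", weigh them, and produce one spam probability.
    merged = layers.concatenate([cnn, rnn])
    merged = layers.Dense(64, activation="relu")(merged)
    output = layers.Dense(1, activation="sigmoid")(merged)
    return keras.Model(inputs=tokens, outputs=output)


model = build_parallel_model()
model.summary()
```

Each MaxPooling1D layer halves the sequence length, so with these assumed sizes both branches see 50 time steps instead of 400, which keeps the GRU branch much cheaper than running it on the raw input.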

Finally, this system can now be added to SmokeDetector, and its automatic weighting systems can begin incorporating the results of Deep Learning!

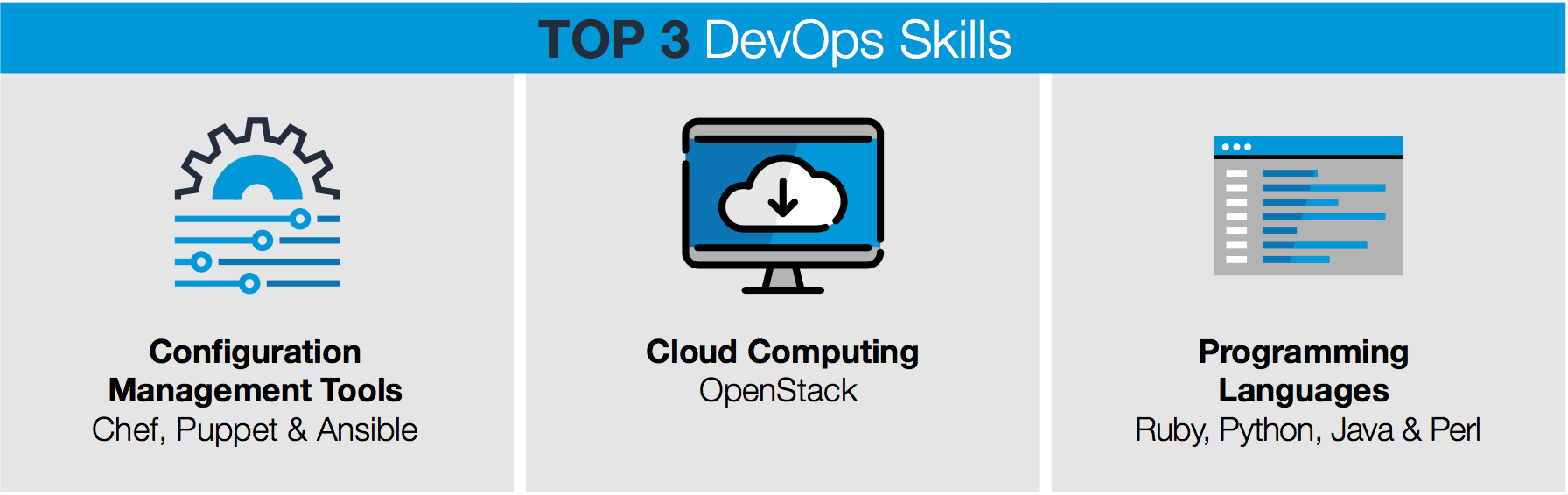

Plus, this system is trained, tested, and used entirely on Linux servers! Of course, Linux is an amazing platform for such software, because the hardware constraints are practically nil, and because most great development software is supported primarily on Linux (TensorFlow, Theano, MXNet, Chainer, CUDA, etc.).

I love open source software – doesn’t everyone? And, although this project isn’t open source just yet, there is a great surprise awaiting all of you soon!

Tanmay Bakshi, 13, is an Algorithm-ist & Cognitive Developer, Author and TEDx Speaker. He will be presenting a keynote talk called “Open-Sourced Inspiration – The Present and Future of Tech and AI” at Open Source Summit in Los Angeles. He will also present a BoF session discussing DeepSPADE.

Check out the full schedule for Open Source Summit here. Linux.com readers save on registration with discount code LINUXRD5. Register now!