With the Internet of Things, the realms of embedded Linux and enterprise computing are increasingly intertwined, and serverless computing is the latest enterprise development paradigm that device developers should tune into. This event-driven variation on Platform-as-a-Service (PaaS) can ease application development using ephemeral Docker containers, auto-scaling, and pay-per-execution billing in the cloud. Serverless is seeing growing traction in enterprise applications that need fast deployment and don’t require extremely high performance or low latency, including many cloud-connected IoT applications.

At the recent Embedded Linux Conference, IBM IoT/Mobile software engineer Kalonji Bankole and IBM Cloud & Watson developer Prashant Khanal detailed Big Blue’s spin on serverless, called IBM Bluemix OpenWhisk. Their presentation — built around a demo of a DIY, voice-enabled Raspberry Pi home automation gizmo that activates a WeMo smart light switch — shows how OpenWhisk integrates with IBM Watson, and discusses Watson interactions with MQTT and IFTTT (see video below).

Like commercial serverless frameworks such as Amazon’s AWS Lambda, Microsoft’s Azure Functions, and Google Cloud Functions, the open source OpenWhisk provides a “function as a service” approach to app development. The most immediate benefit of serverless is that it frees developers from the hassles of managing a server.

The term serverless is something of a misnomer, as there are still servers running the code. However, the developer doesn’t need to worry about them.

“Serverless saves you from spending all your time firefighting and fixing a lot of DevOps issues like dealing with crashes, scaling, updates, and networking issues,” said Bankole, who covered the serverless part of the presentation. “Instead you can just focus on your code.”

PaaS platforms such as Cloud Foundry and Heroku promise something similar, but with a key difference. “PaaS platforms can also handle all the dependencies, scaling, and hosting, but once the application is deployed, it’s always up and waiting for requests — and charging your account for that uptime,” said Bankole. “By contrast, serverless allows us to spin up portions of an application on demand in an ephemeral Docker container, and the contents are deleted when you’re done. This supports a microservices approach where you only get charged based on when the code is running.”

With serverless platforms, the developer writes a series of stateless decoupled functions and uploads them to a serverless engine. “The function can then be called by an HTTP request or a change in a service such as a database or social networking service,” said Bankole.
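As a rough illustration of what such a stateless function can look like, here is a minimal sketch of an OpenWhisk-style Python action. OpenWhisk Python actions expose a `main` function that accepts a dictionary of parameters and returns a dictionary. The `device` and `command` parameter names are invented for this example and do not come from the presentation.

```python
def main(args):
    """Entry point for the action: takes a dict of parameters, returns a dict."""
    # 'device' and 'command' are hypothetical parameter names for illustration.
    device = args.get("device", "light")
    command = args.get("command", "off")
    # No state is kept between invocations; everything needed arrives in args.
    return {"message": "Turning {} {}".format(command, device)}


if __name__ == "__main__":
    # Local smoke test; on the platform, the runtime calls main() itself.
    print(main({"device": "lamp", "command": "on"}))
```

Because the function depends only on its input, the platform is free to run it in a fresh container on every call.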

OpenWhisk, which is also available as Apache OpenWhisk, is currently the only open source serverless platform, said Bankole. “That means you can run it at home or in your own data center,” he added. The modular, event-driven framework makes it easier for teams to work on different pieces of code simultaneously and to dynamically respond to the rapid scaling that is typical of many mobile end-user scenarios.

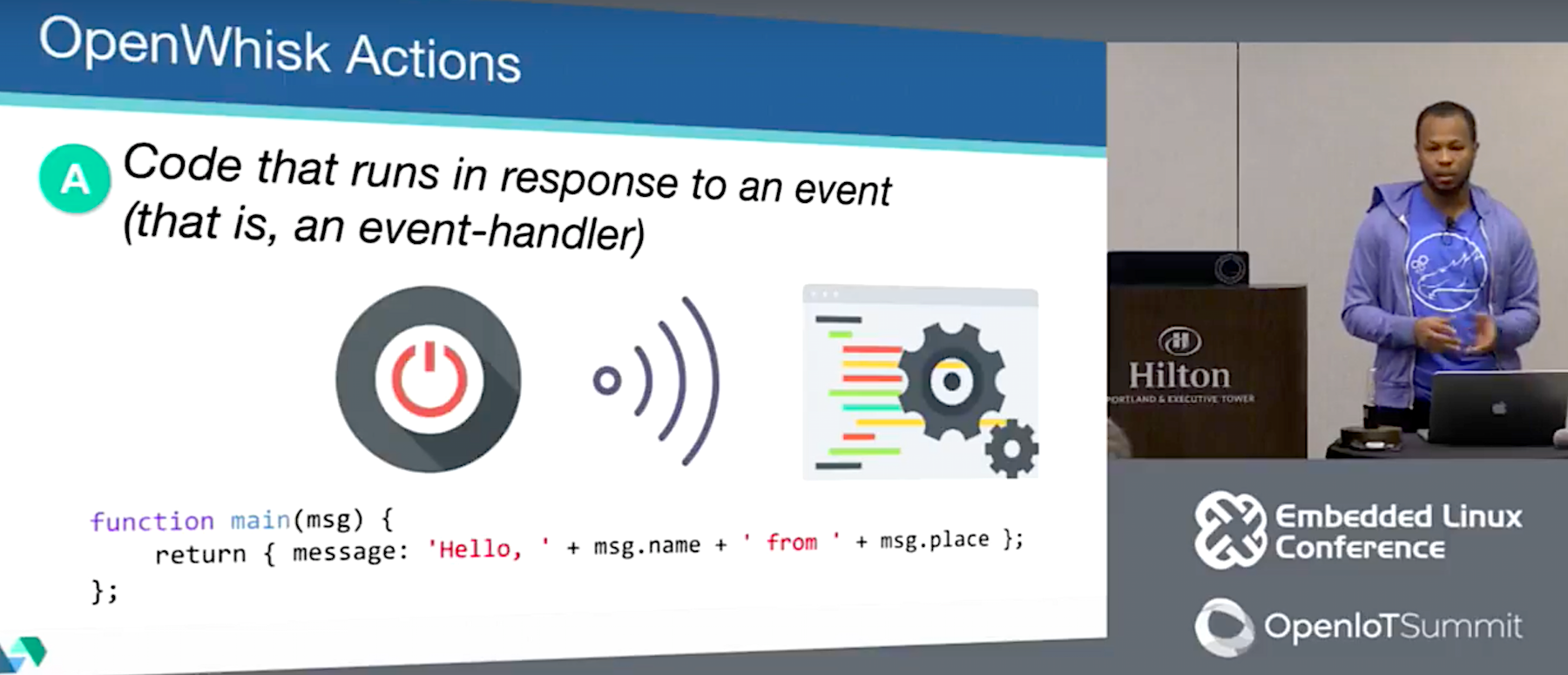

OpenWhisk comprises triggers, actions, rules, and packages, which are combined as services. Developers associate actions to handle events via rules, and packages are used to bundle and distribute sets of actions. All these components can be published publicly or privately.

Triggers “define which events OpenWhisk should pay attention to,” said Bankole. “They can be a web hook, changes to a database, incoming tweets, or a change of hashtags on a social media account. Triggers can be data coming in from IoT devices or messages coming in to specific MQTT channels.”

The logic that responds to the triggers is called an action. These snippets of code, which can also be thought of as “functions,” are “uploaded to the OpenWhisk action pool,” explained Bankole. OpenWhisk currently supports Node.js, Python, and Apple’s Swift, which Bankole singled out for praise. Support for other languages is expected in the future.

Actions are executed in Docker containers, and the results are returned to the user. They can also be forwarded to other actions in a process called chaining. “This lets you reuse pieces of code and combine them in different sequences,” said Bankole.
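The idea behind chaining can be sketched locally in plain Python: each action takes a dictionary and returns a dictionary, and the output of one becomes the input of the next. This is only a simulation of the concept, not OpenWhisk's own sequence mechanism, and the `transcribe`, `classify`, and `run_sequence` names are hypothetical.

```python
def transcribe(args):
    # Stand-in for a speech-to-text step: normalizes text already supplied.
    return {"text": args["audio_text"].strip().lower()}


def classify(args):
    # Toy keyword matcher standing in for a real language classifier.
    words = args["text"].split()
    device = "light" if "light" in words else "unknown"
    command = "on" if "on" in words else "off"
    return {"device": device, "command": command}


def run_sequence(actions, params):
    """Feed the result of each action into the next one, in order."""
    result = params
    for action in actions:
        result = action(result)
    return result


if __name__ == "__main__":
    print(run_sequence([transcribe, classify], {"audio_text": "  Turn ON the light "}))
```

Since every step shares the same dictionary-in, dictionary-out shape, the same pieces can be reordered or reused in other sequences.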

Rules define a relationship between triggers and actions. A single trigger can set off multiple actions, or a single action can be triggered by multiple rules. This flexibility is well suited to IoT applications, such as home automation and security. For example, a rule can be set up so that when a trigger goes off based on a sensor, the action sends out several alert texts. The trigger could also be set up to kick off multiple actions in parallel, such as locking doors, flashing lights, and activating a siren.
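The trigger-to-action relationship can be modeled with a small in-memory table. The sketch below is an illustration of the concept only and is not how OpenWhisk stores or evaluates rules; the `RuleTable` class, the `motion_detected` trigger name, and the three action functions are all hypothetical. It also runs the actions one after another, whereas the platform could run them in parallel.

```python
from collections import defaultdict


class RuleTable:
    """Maps trigger names to the actions that should run when they fire."""

    def __init__(self):
        self._rules = defaultdict(list)

    def add_rule(self, trigger, action):
        # One trigger may be bound to many actions, and one action to many triggers.
        self._rules[trigger].append(action)

    def fire(self, trigger, event):
        # Sequential here for simplicity; returns one result per bound action.
        return [action(event) for action in self._rules.get(trigger, [])]


def lock_doors(event):
    return "doors locked ({})".format(event["sensor"])


def flash_lights(event):
    return "lights flashing ({})".format(event["sensor"])


def sound_siren(event):
    return "siren on ({})".format(event["sensor"])


if __name__ == "__main__":
    rules = RuleTable()
    for action in (lock_doors, flash_lights, sound_siren):
        rules.add_rule("motion_detected", action)
    print(rules.fire("motion_detected", {"sensor": "hallway"}))
```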

Each function uploaded to the system is exposed as its own REST endpoint. The function can then be invoked with an HTTP request sent from any device with Internet connectivity.
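A device-side call to an action over HTTP might be prepared as in the sketch below, which uses only the Python standard library. It builds the request without sending it. The URL layout and Basic authentication follow the OpenWhisk REST API as commonly documented, but treat them as assumptions to check against your deployment; the host `openwhisk.example.com`, the action name `toggle_light`, and the credentials are placeholders.

```python
import base64
import json
import urllib.request


def build_invoke_request(host, namespace, action, auth_key, params):
    """Prepare (but do not send) a blocking POST that invokes an action."""
    # Assumed URL layout; verify against the API docs for your deployment.
    url = "https://{}/api/v1/namespaces/{}/actions/{}?blocking=true".format(
        host, namespace, action
    )
    # auth_key is expected in "user:password" form for HTTP Basic auth.
    token = base64.b64encode(auth_key.encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        url,
        data=json.dumps(params).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": "Basic " + token,
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_invoke_request(
        "openwhisk.example.com", "_", "toggle_light", "user:pass", {"command": "on"}
    )
    print(req.get_method(), req.full_url)
    # To actually send it: urllib.request.urlopen(req)
```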

As an alternative to HTTP/REST requests, you can use “feeds,” which monitor services such as a database or message bus like MQTT. “If a message comes in to a certain topic on a MQTT broker or a new record is added to a database, the action can be triggered in response,” said Bankole.

Watson, MQTT, and IFTTT

The OpenWhisk IoT demo integrated the IBM Watson cognitive SaaS platform. For the demo, Bankole and Khanal specifically tapped Watson’s speech-to-text and natural language classifier services. Joined together, these provide a voice agent technology much like that of Alexa or Google Assistant.

In the IoT demo, Watson’s natural language classifier interpreted the speech-to-text output to find the intent. “Watson can tell us what device the request is trying to control, and what kind of control command is being sent,” said Khanal. The speech-to-text service, which supports eight languages, uses either HTTP or WebSocket interfaces to transcribe speech.
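Turning a classifier result into a device command could look something like the following sketch. The response shape (a `classes` list whose entries carry `class_name` and `confidence`) is an assumption about what the classifier returns, and the `light_on` style of class label, the `intent_from_classification` helper, and the 0.6 threshold are hypothetical choices made for this example rather than details from the demo.

```python
def intent_from_classification(result, min_confidence=0.6):
    """Pick the most confident class and split it into device and command."""
    classes = result.get("classes", [])
    if not classes:
        return None
    best = max(classes, key=lambda c: c["confidence"])
    # Ignore weak matches rather than act on a guess.
    if best["confidence"] < min_confidence:
        return None
    # Assumed label convention: "<device>_<command>", e.g. "light_on".
    device, _, command = best["class_name"].partition("_")
    return {"device": device, "command": command}


if __name__ == "__main__":
    sample = {
        "text": "turn on the light",
        "classes": [
            {"class_name": "light_on", "confidence": 0.92},
            {"class_name": "fan_off", "confidence": 0.05},
        ],
    }
    print(intent_from_classification(sample))
```

Returning `None` for low-confidence results lets the calling code ask the user to repeat the request instead of switching the wrong device.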

Like other Watson cognitive services such as machine learning and visual recognition, these voice services can be configured to use a customer’s own trained model. For example, once you train the natural language classifier, it can identify classes from text, and you can then determine intent from those classes. You can automate the training process through the REST API or the CLI.

To communicate with various devices, Bankole and Khanal used the Watson IoT Platform, which is an MQTT broker for Watson. Watson can integrate with other MQTT brokers, as well.

“Watson IoT provides REST and real-time APIs, mostly to communicate with devices,” said Khanal. “But Watson IoT can also be extended to read and store the state and device events so you can add analytics.”

Khanal also explained how you could connect the serverless/Watson-based application to other home automation and smart appliance devices using IFTTT (If This Then That). IFTTT “makes it easier to connect to the many IFTTT-registered services and devices already out there,” said Khanal.

IFTTT combines triggers and actions to control devices or web services. “If you receive a tweet that says shut down the fan, you can use that trigger to connect to vendor devices registered in IFTTT cloud,” said Khanal. “It’s easy to use IFTTT to extend your architecture to connect to devices like smart dishwashers and refrigerators.”

You can watch the complete video below.
