Arch Linux has never been known as a user-friendly Linux distribution. In fact, the whole premise of Arch requires that the end user make a certain amount of effort to understand how the system works. Arch even goes so far as to use a package manager (aptly named Pacman) designed specifically for the platform. That means all that apt-get and dnf knowledge you have doesn’t necessarily roll over.

Don’t get me wrong; Arch Linux is a fantastic distribution. However (and that “however” is significant), it’s certainly not a distribution for anyone even moderately new to the world of Linux. Case in point: When you boot up an ISO of Arch Linux, you wind up at a Bash prompt, where you then walk through the numerous steps (as outlined in the Installation guide) to get Arch Linux installed. In the end, you will be rewarded with a fine-tuned Linux distribution that will serve your needs well. On top of that, by the time you’ve installed Arch, you will know more about your operating system than you did before.

But what about those who want the benefits of Arch Linux, but don’t want to go through the unwieldy installation? For that, you turn to a distribution like Manjaro. This take on Arch Linux makes the platform as easy to install as any operating system and just as user-friendly to work with. Manjaro is suited for every level of user, from beginner to expert.

The big question, however, is why would you want to give Manjaro a try? With so many Linux distributions available, is there anything particularly compelling about this platform to woo you away from your current daily driver (or to simply test out what this Arch-based distribution is all about)? Let’s take a look.

32- and 64-bit friendly

While many distributions are dropping support for 32-bit architecture, Manjaro continues to support the aging platform. This means that all of your older hardware can still make use of this Arch-based operating system with the latest-greatest releases of software. This will become more crucial in the future, when more Linux distributions stop supporting 32-bit hardware.

Rolling Release

Manjaro (currently on its 17th iteration) is a rolling release distribution. What does that mean? For those who do not know, a rolling release distribution updates everything frequently, even the core of the system, so that there is no need for point-based releases. This also means your machine will always have the latest-greatest stable software. Because the updates arrive so frequently, each one is also smaller. Some consider this a superior update delivery method, as there is less chance of software breakage.

Choose your desktop

At the moment, you can choose among the Xfce, KDE, and GNOME editions. All three follow similar design concepts and offer a very clean and professional look (Figure 1).

The Net edition provides a base installation without a pre-existing display manager, desktop environment, or any desktop software. With this particular release, you can customize it to perfectly meet your needs.

There are also community editions, which include spins based on a number of other desktops.

The Manjaro developers have done a fantastic job of making Xfce, GNOME, and KDE versions look and feel the same. The biggest difference, for me, is that both the KDE and GNOME takes on the distribution are a bit more elegant and modern than Xfce (which might sway you one way or another).

Software

Beyond Manjaro’s ability to make Arch easy, one of the most impressive aspects to be found on this desktop Linux distribution is the collection of included software. Yes, you’ll find the standard productivity software:

- LibreOffice
- GIMP (Xfce version only)
- Inkscape and Krita (KDE version only)
- File managers and other standard desktop tools
- Firefox (all three versions)
- Thunderbird (KDE and Xfce versions)
- Evolution (GNOME version)

But beyond the basics, you’ll also find the likes of:

- Avahi SSH Server and Zeroconf Browser
- Steam
- Bulk Rename
- Catfish File Search
- Clipman
- HP Device Manager
- Orage Calendar
- Htop
- GParted
- Yakuake (KDE version only)
- Octopi CacheCleaner (KDE version only)

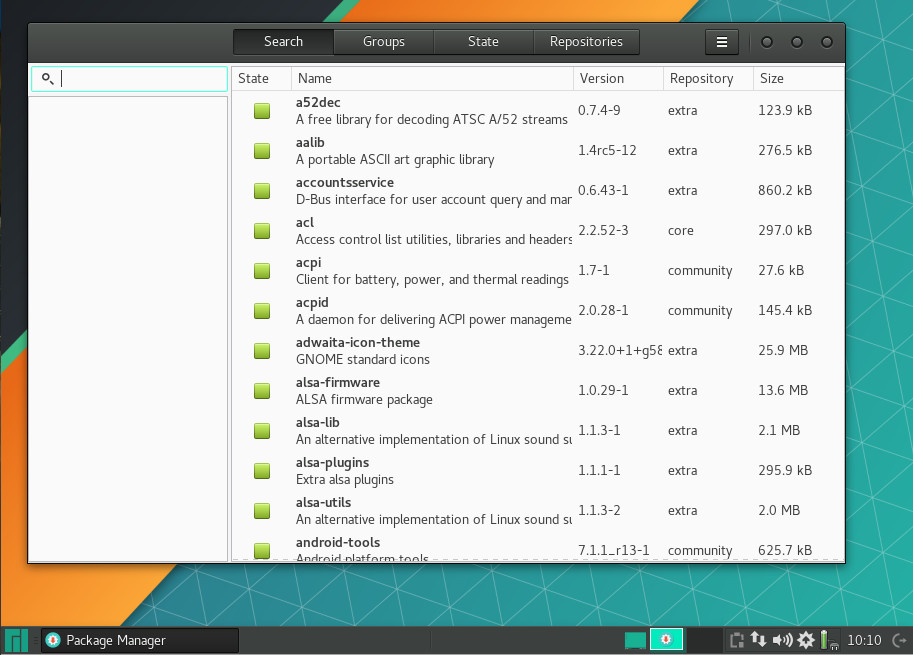

Along with those packages, Manjaro offers an easy-to-use Add/Remove Software tool (Figure 2) that allows you to install software from a vast collection of titles.

Understand that the pre-installed package listing will vary, depending on which desktop environment you’ve chosen to install. For example, the KDE version of Manjaro will lean heavily on KDE applications and the GNOME version will lean on GNOME software. You will find, however, that all three official desktop iterations do include LibreOffice, so your productivity is covered, regardless of environment.

The package manager GUI is as simple to use as any: Open the tool, search for what you want to install, select the software, and click Apply. Updates are just as easy. When an update has arrived, you will be notified in the system tray. Click the notification and okay the installation of the upgrades.

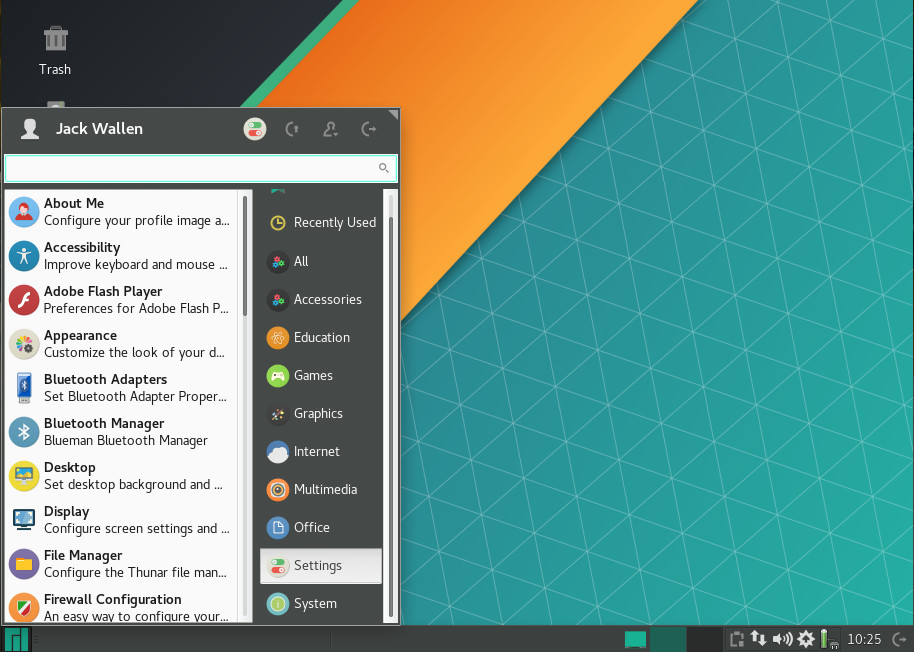
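If you would rather work from the terminal, the same jobs go through Pacman. As a rough cheat sheet for anyone arriving with apt-get or dnf habits, here is a minimal Python sketch that maps a few familiar commands to their usual Pacman counterparts. The table and the `to_pacman` helper are purely illustrative names of my own (they are not part of Manjaro or Pacman), and the mapping only covers a handful of common cases.

```python
# Illustrative cheat sheet: a few apt-get/dnf subcommands and the pacman
# invocations commonly used for the same job. The names below are
# hypothetical helpers for this article, not part of any real tool.
PACMAN_EQUIVALENTS = {
    ("apt-get", "install"): ["pacman", "-S"],    # install packages
    ("apt-get", "remove"): ["pacman", "-R"],     # remove packages
    ("apt-get", "upgrade"): ["pacman", "-Syu"],  # -Syu also refreshes the package database
    ("dnf", "install"): ["pacman", "-S"],
    ("dnf", "remove"): ["pacman", "-R"],
    ("dnf", "upgrade"): ["pacman", "-Syu"],
    ("dnf", "search"): ["pacman", "-Ss"],        # search the repositories
}


def to_pacman(command: str) -> str:
    """Translate e.g. 'apt-get install gimp' into a pacman command line."""
    tool, action, *packages = command.split()
    try:
        base = PACMAN_EQUIVALENTS[(tool, action)]
    except KeyError:
        raise ValueError(f"no known pacman equivalent for: {tool} {action}")
    return " ".join(base + packages)


print(to_pacman("apt-get install gimp"))
print(to_pacman("dnf search krita"))
```

The sketch only builds the command string; it does not run anything. On a real system the install, remove, and upgrade commands would need root privileges (for example via sudo).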

Settings Menu

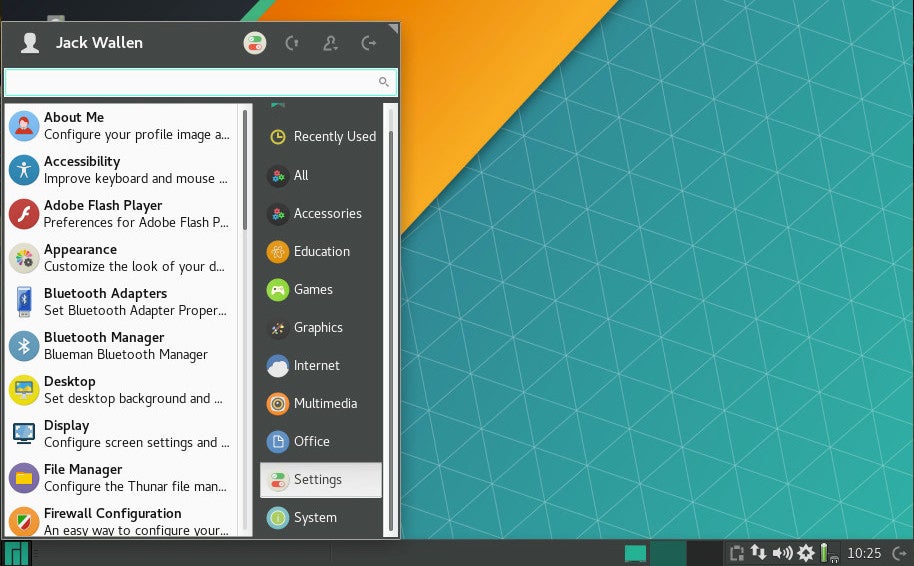

One nice touch for the Xfce spin of Manjaro is the Settings menu. Click on the Main menu and then click Settings on the right side of the menu to reveal an impressive number of options available to configure (Figure 3).

With the KDE and GNOME flavors of Manjaro, you work with the standard tools of that particular desktop environment, for a bit more cohesive feel. If you’ve used a recent release of either KDE or GNOME, you’ll feel right at home. The GNOME iteration also includes the Dash to Dock extension, for those who prefer a more “dock-like” approach to the desktop.

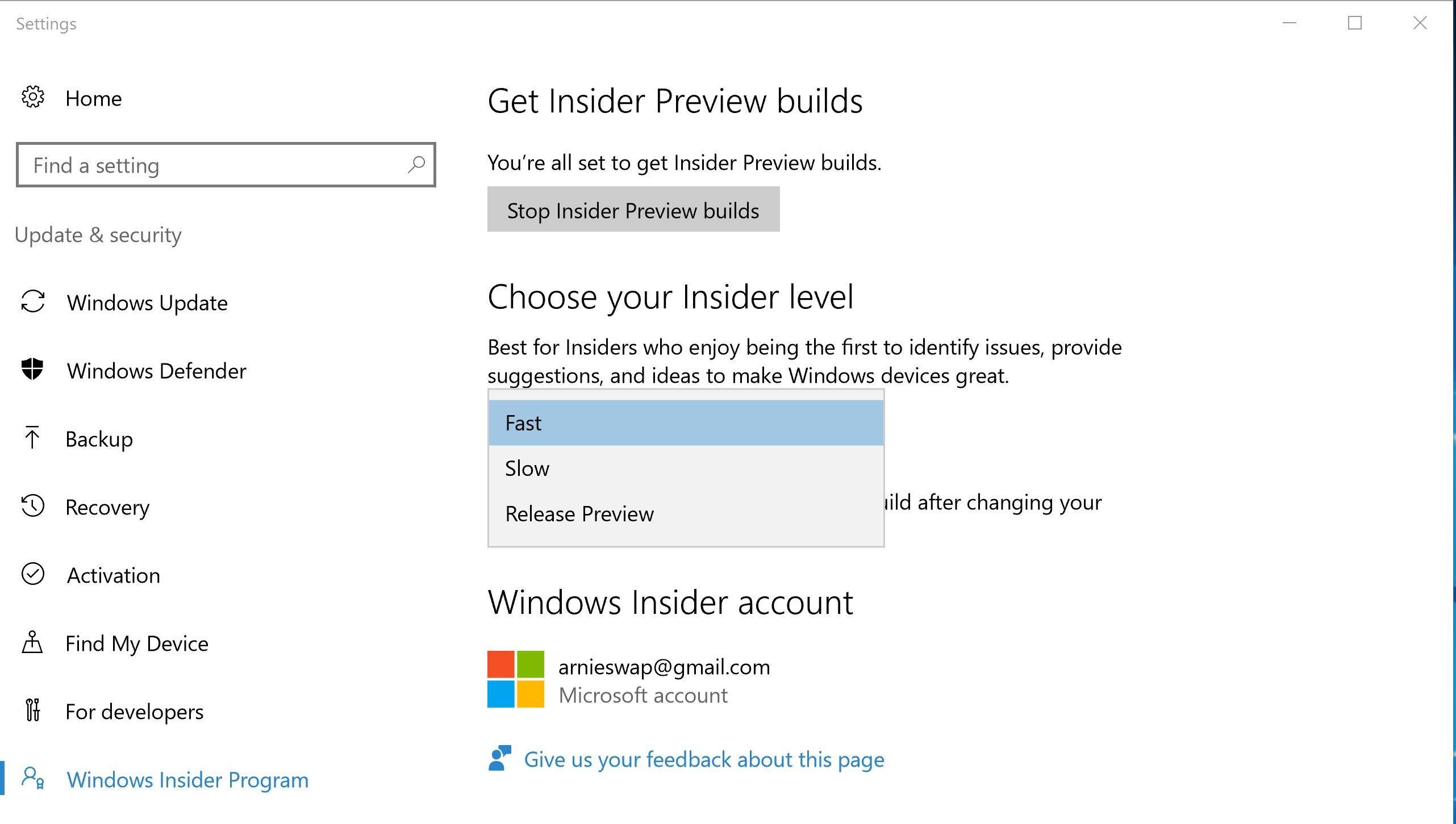

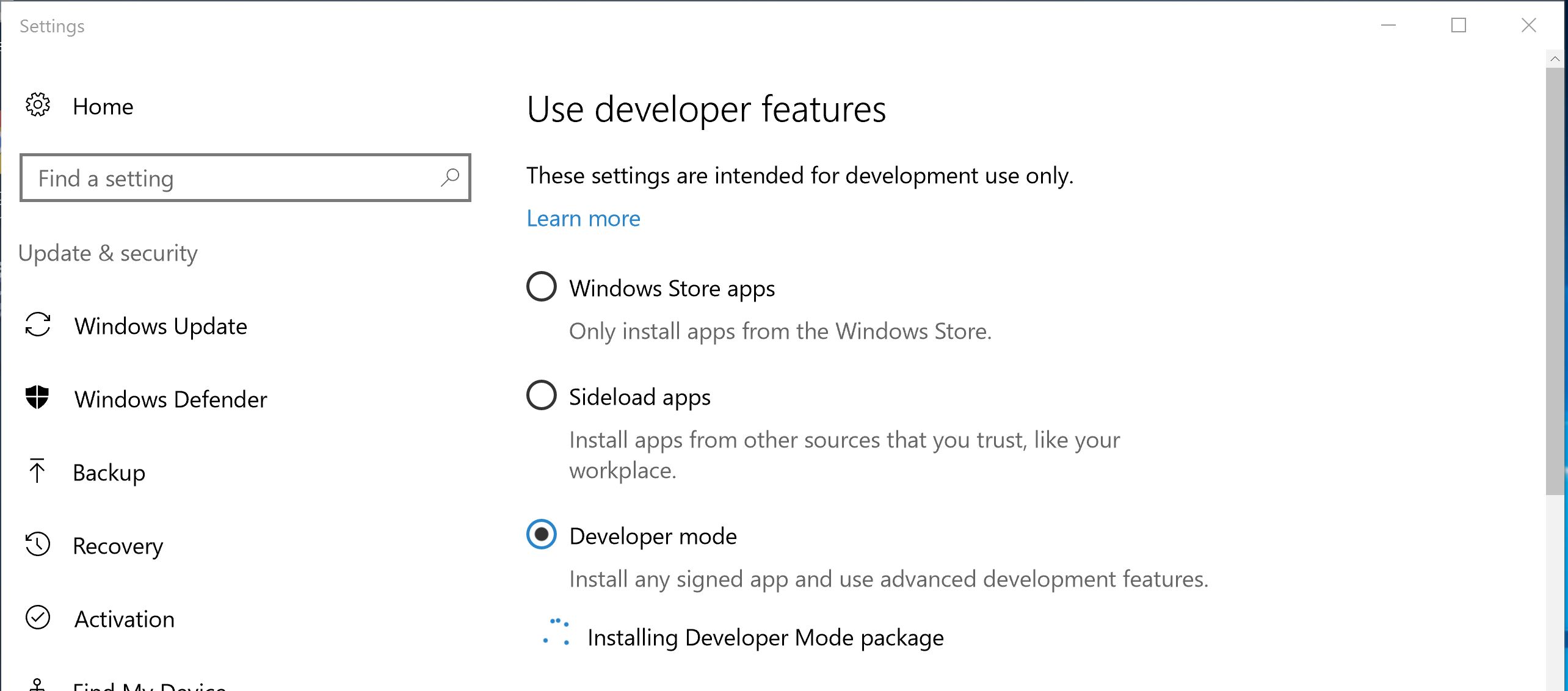

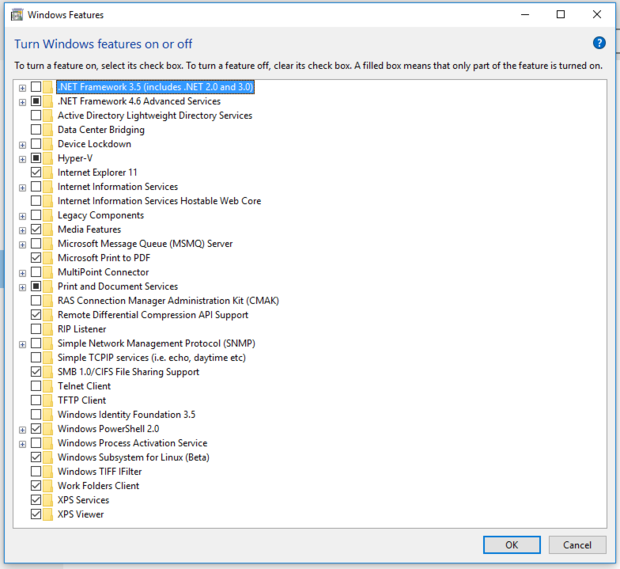

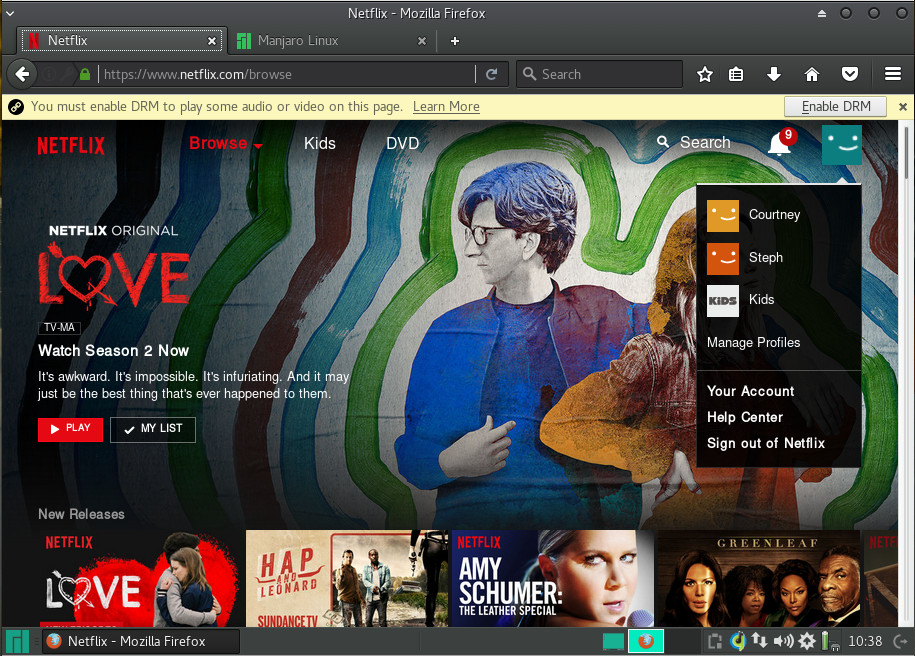

Media

I was pleasantly surprised that Manjaro was able to play MP3s out of the box with one of its media players. The Xfce edition of Manjaro ships with both Guayadeque and Parole media players. Of the two, only Guayadeque was able to play MP3 files out of the box. YouTube videos play without issue and Netflix only requires the enabling of DRM (Figure 4) and the installation of the Random Agent Spoofer extension.

Once you’ve taken care of those two issues, Netflix plays seamlessly (Figure 5).

Performance

As for performance, you can opt for any of the official editions of Manjaro and expect incredible speed. Running as a VirtualBox guest with 3GB of RAM, Manjaro ran as smoothly and quickly as the host Elementary OS Loki with a remaining 13GB of RAM available. That should tell you all you need to know about the performance of Manjaro. As a whole, there is absolutely nothing to complain about with regard to Manjaro performance. It’s quick, smooth, and reliable. The GNOME, KDE, and Xfce editions are flawless.

Who’s it for?

In the end, I think it’s safe to say that Manjaro Linux is a distribution that is perfectly capable of pleasing any level of user wanting a reliable, always up-to-date desktop. Manjaro has been around since 2011, so it’s had plenty of time to get things right… and that’s exactly what it does. If you’ve been looking for the ideal distribution to help you give Arch a try, the latest release of Manjaro is exactly what you’re looking for.
