ChakraCore is the core of Chakra, the JavaScript engine used by Microsoft Edge in Windows 10. It is a standalone JavaScript engine in its own right, but it does not include the Windows bindings and API, which are provided by the larger Chakra framework. Arunesh Chandra, Senior Program Manager at Microsoft, introduced ChakraCore and explained how it fits into the larger Node ecosystem at Node.js Interactive.

ChakraCore is open source, distributed under the MIT license (its source code is available on GitHub), and to a certain degree cross-platform: it works on Windows, Linux, and macOS, although for now only as an interpreter on the latter two. That said, Chandra’s team plans to bring JIT compilation and high-performance garbage collection to ChakraCore on all three platforms soon.

Yet Another JavaScript Engine

One of the reasons Microsoft developed Node ChakraCore is that, although Node.js runs almost everywhere, including x86, x64, and ARMv7, it did not run on ARM Thumb-2. This architecture matters to Microsoft because the Thumb-2 instruction set is one of the main targets of Windows 10 IoT. Node ChakraCore brings Node.js to ARM Thumb-2.

To run Node.js on ChakraCore, the team created a shim that implements the V8 API on top of the ChakraCore engine. Node.js keeps making its usual V8 calls, and ChakraCore serves all of them, similar to how Mozilla’s SpiderMonkey does in SpiderNode.
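The shim is an instance of the adapter pattern: code written against one interface keeps working because a thin layer forwards each call to a different implementation. The Python sketch below illustrates only that idea. Every name in it (`V8StyleAPI`, `ChakraEngine`, `ChakraShim`, `js_create_string`, `node_core`) is hypothetical and does not correspond to the real V8 or ChakraCore APIs, and the "engine" is a toy that can only add two integers.

```python
from abc import ABC, abstractmethod


class V8StyleAPI(ABC):
    """Hypothetical stand-in for the V8-shaped interface Node.js is written against."""

    @abstractmethod
    def new_string(self, text: str) -> object: ...

    @abstractmethod
    def run_script(self, source: str) -> object: ...


class ChakraEngine:
    """Hypothetical stand-in for a different engine with its own, unrelated API."""

    def js_create_string(self, text: str) -> dict:
        return {"engine": "chakra", "type": "string", "value": text}

    def js_run(self, source: str) -> dict:
        # Toy "evaluation": only understands integer addition such as "1 + 2".
        left, right = (int(part) for part in source.split("+"))
        return {"engine": "chakra", "type": "number", "value": left + right}


class ChakraShim(V8StyleAPI):
    """The shim: exposes the V8-style interface and serves every call with ChakraEngine."""

    def __init__(self, engine: ChakraEngine) -> None:
        self._engine = engine

    def new_string(self, text: str) -> dict:
        return self._engine.js_create_string(text)

    def run_script(self, source: str) -> dict:
        return self._engine.js_run(source)


def node_core(api: V8StyleAPI) -> object:
    """Stands in for Node's own code, which only knows the V8-style interface."""
    return api.run_script("1 + 2")
```

Calling `node_core(ChakraShim(ChakraEngine()))` returns `{"engine": "chakra", "type": "number", "value": 3}`: `node_core` never learns which engine answered, which is the property the shim is meant to provide.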

After the team submitted a pull request to Node.js, Node ChakraCore was accepted into the project, albeit in a separate repo, and Node ChakraCore binaries are now available from Node’s nightly download site.

Time-Travel Debugging

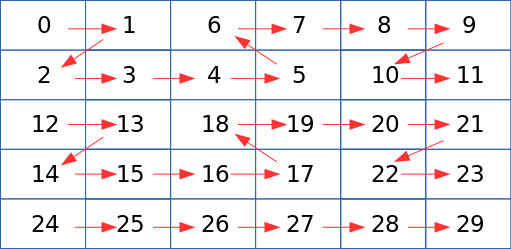

Chandra’s team has partnered with Microsoft Research to push the state of the art in Node.js diagnostics, and one way of doing that is bringing Time-Travel Debugging to Node ChakraCore. Time-Travel Debugging lets you trace the execution of your code not only forwards, stepping through your program from beginning to end, but also backwards, moving back through your application’s earlier states to help you locate bugs after the application fails.
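The basic idea of stepping backwards can be shown with a record-and-rewind toy in Python. This is a conceptual sketch only, not how ChakraCore implements Time-Travel Debugging: it simply keeps a snapshot of the program state after every step so that a debugger can move back to any earlier point. The `TimeTravelDebugger` class and its methods are hypothetical.

```python
import copy
from typing import Callable, Dict, List

State = Dict[str, int]


class TimeTravelDebugger:
    """Records a snapshot of program state after each step so it can be rewound."""

    def __init__(self, initial: State) -> None:
        self._history: List[State] = [copy.deepcopy(initial)]
        self._cursor = 0  # index of the snapshot currently being inspected

    @property
    def state(self) -> State:
        return self._history[self._cursor]

    def step_forward(self, step: Callable[[State], None]) -> State:
        # Executing a new step from a rewound position discards the old "future".
        del self._history[self._cursor + 1:]
        new_state = copy.deepcopy(self.state)
        step(new_state)  # the step mutates its own copy, never an earlier snapshot
        self._history.append(new_state)
        self._cursor += 1
        return self.state

    def step_back(self) -> State:
        if self._cursor == 0:
            raise IndexError("already at the first recorded state")
        self._cursor -= 1
        return self.state
```

For example, after a step adds 5 to `{"total": 0}` and a buggy step subtracts 20, the state is `{"total": -15}`; calling `step_back()` returns `{"total": 5}`, the state just before the bug. Real implementations avoid full per-step copies for cost reasons, so treat this purely as an illustration of the concept.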

Time-Travel Debugging is already available in VS Code as a beta on Windows and as a preview on macOS and Linux.

In other news, performance-wise ChakraCore works well in Microsoft Edge, but it still needs work on other platforms, such as Chrome and Firefox.

NAPI / VM-Neutrality

Chandra’s team is also working on VM neutrality and NAPI. The aim of VM neutrality is to let Node.js become a ubiquitous application platform, one that allows applications to run on any device and for any workload.

Chandra points out a trend in which different organizations fork Node to optimize it for specific scenarios: Samsung has iotjs, Microsoft has Node ChakraCore, and Mozilla has SpiderNode. The VM-neutrality effort envisions an infrastructure that lets VM owners and authors plug their VMs into the existing ecosystem without having to fork Node.

Another layer of abstraction is ABI-Stable Node (also known as the Node.js API, or NAPI). NAPI addresses current problems with native modules. Native modules used to break whenever Node.js was upgraded. Although modern native modules are protected by the NAN project, they still need to be recompiled each time you switch Node.js versions.

That is where NAPI comes in. NAPI is a layer between native modules and the JavaScript engine that aims to provide ABI compatibility guarantees across different versions of Node and different Node VMs. It allows NAPI-enabled native modules to work across different versions and flavors of Node.js without recompilation.
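Why a stable layer removes the need to recompile can be modeled, with heavy simplification, in Python. In this hypothetical sketch a "native module" is built once against a fixed, versioned interface (`StableAPI`, `create_number`, `get_number`, `make_native_add`, all invented names) rather than against engine internals, so the same module works unchanged on two engines whose internal value representations differ. The real NAPI is a C API; none of its actual functions are reproduced here.

```python
from abc import ABC, abstractmethod
from typing import Any, Callable


class StableAPI(ABC):
    """Hypothetical engine-neutral interface whose shape does not change between releases."""

    API_VERSION = 1

    @abstractmethod
    def create_number(self, value: float) -> Any: ...

    @abstractmethod
    def get_number(self, handle: Any) -> float: ...


class V8LikeEngine(StableAPI):
    # Engine-internal representation: a tagged tuple (opaque to modules).
    def create_number(self, value: float) -> Any:
        return ("v8-number", value)

    def get_number(self, handle: Any) -> float:
        return handle[1]


class ChakraLikeEngine(StableAPI):
    # A completely different internal representation: a dict.
    def create_number(self, value: float) -> Any:
        return {"chakra_num": value}

    def get_number(self, handle: Any) -> float:
        return handle["chakra_num"]


def make_native_add(required_version: int) -> Callable[[StableAPI, Any, Any], Any]:
    """A "native module" built once against the stable API, never against engine internals."""

    def add(api: StableAPI, a: Any, b: Any) -> Any:
        if api.API_VERSION < required_version:
            raise RuntimeError("engine does not provide the required API version")
        # Values are only touched through the interface, never by their internal layout.
        return api.create_number(api.get_number(a) + api.get_number(b))

    return add
```

The same `add` function, created once by `make_native_add(required_version=1)`, returns a handle holding 5 for inputs 2 and 3 on both `V8LikeEngine()` and `ChakraLikeEngine()`. The design choice being illustrated is that modules see only opaque handles and interface calls, so engines can change their internals without breaking them.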

In his demo, Chandra showed that an app depending on native modules did not break, and the modules themselves did not need to be recompiled, when he ran the app on different versions of Node and even when he switched the engine to ChakraCore.

The Road Ahead

Although Chandra showed working demos of all the technologies in his presentation, he admitted that many of them were still in the early stages of development. His team is working on stabilizing all of ChakraCore’s features and porting the new debugging tools to all platforms.

Watch the complete presentation below:

If you are interested in speaking at or attending Node.js Interactive North America 2017, taking place in Vancouver, Canada, next fall, please subscribe to the Node.js community newsletter to keep abreast of dates and times.