Thomas Di Giacomo, Chief Technology Officer at SUSE, started his LinuxCon Europe keynote with a brief clip in the style of Mr. Robot where in 2016 even Evil Corp has gone open source.

The 25th anniversary of Linux was a big milestone celebrated by many of us at LinuxCon events throughout the year, and it was a theme in many of the presentations. Thomas Di Giacomo, Chief Technology Officer at SUSE, started his LinuxCon Europe keynote with a brief clip in the style of Mr. Robot where in 2016 even Evil Corp has gone open source and we have won. He says that “open source is seen as a technology savior. That’s why companies have been embracing it, because they have to, to remain viable.”

Giacomo compares Linux at 25 with the human brain at the same age. Neurologists say that at 25 years old our brains make a big leap in maturity as the prefrontal cortex becomes fully operational, which helps us focus, make more logical decisions, form more complex plans, and be more organized and disciplined. At 25, Linux is maturing in all of these ways too, which leads us to collaborate more to achieve great things.

Going back a few centuries to around the time of Leonardo Da Vinci, before science was fully mature, there were individual bright minds active in poetry, philosophy, and other arts and domains who could cover most of the knowledge of their time. Giacomo described them “as men who knew it all, people like Aristotle, Roger Bacon, Da Vinci, Kepler, Humboldt, and others.”

Technology today is much too complex to be understood by a single person. “The future of open source is about contributing together more and more, so that we can achieve more and more complex challenges. We should try to scale our individual brains into a much larger collective, connected, and functional brain,” Giacomo says.

This is similar to the Avengers. Individual superheroes acting alone couldn’t save the day, so they team up to be stronger together and more powerful than the sum of their parts.

Giacomo suggests that “in our community too, we have gone from individual mighty Hulks to groups of Avengers, so … we have to work more and more together to keep fixing more and more challenging problems. … Simply having the code available is not enough to ensure long-term viability of open source. We also need to make sure we keep working on fostering inclusive environments where everyone can contribute, so that the open source momentum continues and grows for the next decades to come.”

This was a fun talk that can be best appreciated by watching the entire video!


What are micro operating systems and why should individuals and organizations focused on the cloud care about them? In the cloud, performance, elasticity, and security are all paramount. A lean operating system that facilitates simple server workloads and allows for containers to run optimally can serve each of these purposes. Unlike standard desktop or server operating systems, the micro OS has a narrow, targeted focus on server workloads and optimizing containers while eschewing the applications and graphical subsystems that cause bloat and latency.

In fact, these tiny platforms are often called “container operating systems.” Containers are key to the modern data center and central to many smart cloud deployments. According to Cloud Foundry’s report “Containers in 2016,” 53 percent of organizations are either investigating or using containers in development and production. The micro OS can function as optimal bedrock for technology stacks incorporating tools such as Docker and Kubernetes.

The Linux Foundation recently released its 2016 report “Guide to the Open Cloud: Current Trends and Open Source Projects.” This third annual report provides a comprehensive look at the state of open cloud computing. You can download the report now, and one of the first things to notice is that it aggregates and analyzes research, illustrating how trends in containers, microservices, and more shape cloud computing. In fact, from IaaS to virtualization to DevOps, the report provides descriptions and links to categorized projects central to today’s open cloud environment.

In this series of posts, we will look at many of these projects, by category, providing extra insights on how the overall category is evolving. Below, you’ll find a collection of micro or “minimalist” operating systems and the impact that they are having, along with links to their GitHub repositories, all gathered from the Guide to the Open Cloud:

Project Atomic is Red Hat’s umbrella for many open source infrastructure projects to deploy and scale containerized applications. It provides an operating system platform for a Linux Docker Kubernetes (LDK) application stack, based on Fedora, CentOS and Red Hat Enterprise Linux. Project Atomic on GitHub

CoreOS is a lightweight Linux operating system designed for clustered deployments, providing automation, security, and scalability for containerized applications. It runs on nearly any platform, whether physical, virtual, or private/public cloud. CoreOS on GitHub

Photon OS is a minimal Linux operating system for cloud-native apps optimized for VMware’s platforms. It runs distributed applications using containers in multiple formats including Docker, rkt, and Garden. Photon on GitHub

RancherOS is a minimalist Linux distribution for running Docker containers. It runs Docker directly on top of the kernel, replacing the init system, and delivers Linux services as containers. RancherOS on GitHub

Learn more about trends in open source cloud computing and see the full list of the top open source cloud computing projects. Download The Linux Foundation’s Guide to the Open Cloud report today!

This is the first entry in an insideHPC series that delves into in-memory computing and the designs, hardware and software behind it. This series, compiled in a complete Guide available here, also covers five ways in-memory computing can save money and improve TCO.

To achieve high performance, modern computer systems rely on two basic methodologies to scale resources. Each design attempts to bring more processors (cores) and memory to the user. A scale-up design that allows multiple cores to share a large global pool of memory is the most flexible and allows large data sets to take advantage of full in-memory computing. A scale-out design distributes data sets across the memory on separate host systems in a computing cluster. Although the scale-out cluster often has a lower hardware acquisition cost, the scale-up in-memory system provides a much better total cost of ownership (TCO) based on the following advantages:

Read more at insideHPC

‘DevOps’ is easy when your organization can adopt change readily and has the right attitude toward using the tools that make DevOps a reality.

You need tools at every stage of the DevOps cycle; here is my article on ‘How to choose right set of DevOps tools’.

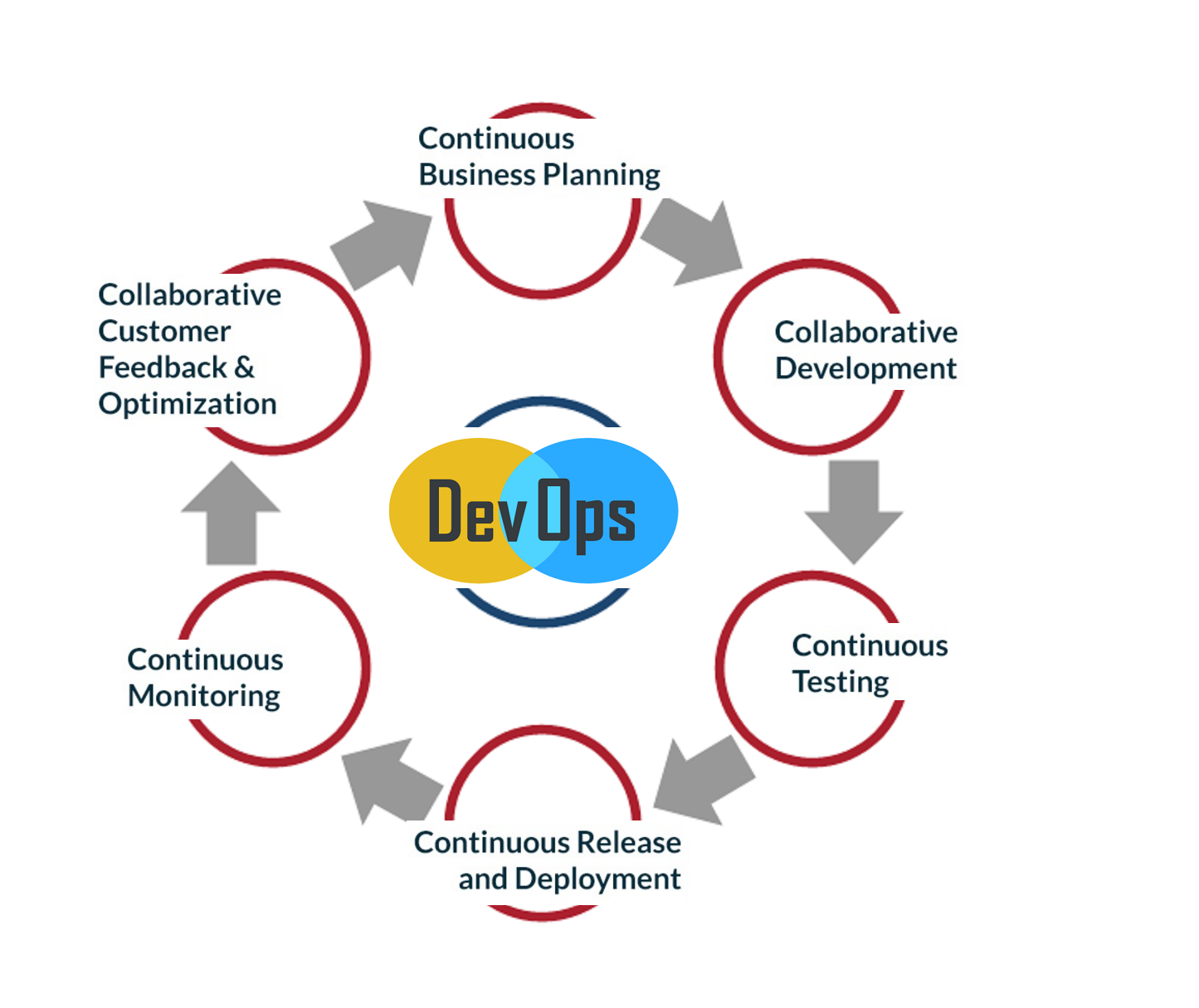

Along with the tools, you need to know the DevOps cycle. Here it is:

What are the 6 C’s of DevOps?

1. Continuous business planning: Starts with identifying the skills, outcomes, and resources needed.

2. Collaborative development: Starts with a development sketch plan and programming.

3. Continuous testing: Unit and integration testing to help increase the efficiency and speed of the development.

4. Continuous release and deployment: Non-stop CD pipeline to help you implement code reviews and developer check-ins easily.

5. Continuous monitoring: Monitors changes and addresses errors as soon as they happen.

6. Customer feedback and optimization: Captures your customers’ immediate response to your product and its features and helps you modify it accordingly.

Taking care of these six stages will make you a good DevOps organization. By the way, this is not a must-have model, but it is one of the more sophisticated models out there. It will give you a fair idea of which tools to use at different stages to make the process more lucrative for a software-powered organization.

A CD pipeline, a CI tool, and containers make things easier, and when you want to practice DevOps, a microservices architecture makes more sense.

From Day One of eBay’s cloud journey, the e-commerce company has focused on keeping its developers happy, according to Suneet Nandwani, eBay’s senior director of cloud infrastructure and platforms. That’s led to several challenges and innovations at the company, the latest of which is the development of TessMaster — a management framework to deploy Kubernetes on OpenStack.

With the emergence of Docker, it became clear that containers are “a technology which developers love,” Nandwani told ZDNet.

Read more at ZDNet

Let’s look at the Agile development process, again focusing on Scrum from the Ops perspective. Let’s review where we intervene: at which points in the process do we step in? How do we help development? How do we help QA? And what about ourselves, Ops? How do we help ourselves? And despite our previous statement, we’ll also say a few words about the mindset. Let’s ask the obvious first question on the way to involvement:

Are Ops part of the Scrum team or not?

We have made the testers part of the team. After all, the whole idea behind Scrum is independently organized multidisciplinary teams. So what will it be? Ops in the team or outside of it?

Read more at AgiloPedia

Web analytics is often described as simply measuring web traffic, but it is not limited to that. It includes:

Google Analytics is the most widely used cloud-based web analytics service. However, your data is locked into the Google ecosystem. If you want 100% data ownership, try the following open source web analytics software to get information about the number of visitors to your website and the number of page views. The information is useful for market research and understanding popularity trends on your website.
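To make the two metrics mentioned above concrete, here is a minimal Python sketch of how page views and visitor counts could be derived from raw hits. It is not how any particular analytics package works: the `SAMPLE_HITS` data and the `summarize` helper are hypothetical, and treating each distinct IP address as one visitor is a simplifying assumption.

```python
from collections import Counter

# Hypothetical, simplified hit records: (visitor_ip, requested_path).
# Real tools parse full server logs or rely on a JavaScript tracker.
SAMPLE_HITS = [
    ("203.0.113.5", "/"),
    ("203.0.113.5", "/about"),
    ("198.51.100.7", "/"),
    ("203.0.113.9", "/blog"),
    ("198.51.100.7", "/blog"),
]


def summarize(hits):
    """Return total page views, unique visitor count, and views per page."""
    page_views = len(hits)
    # Assumption: one distinct IP address counts as one visitor.
    unique_visitors = len({ip for ip, _ in hits})
    views_per_page = Counter(path for _, path in hits)
    return page_views, unique_visitors, views_per_page


if __name__ == "__main__":
    views, visitors, per_page = summarize(SAMPLE_HITS)
    print(f"page views: {views}, unique visitors: {visitors}")
    for path, count in per_page.most_common():
        print(f"{path}: {count}")
```

Self-hosted analytics software typically goes further, for example by grouping hits into sessions and filtering out bots, but the basic counting follows the same idea.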

Read more at *nixCraft

A number of interesting and notable legal developments in open source took place in 2016. These seven legal news stories stood out:

1. Victory for Google on fair use in Java API case

In 2012 the jury in the first Oracle v. Google trial found that Google’s inclusion of Java core library APIs in Android infringed Oracle’s copyright. The district court overturned the verdict, holding that the APIs as such were not copyrightable (either as individual method declarations or their “structure, sequence and organization” [SSO]).

Read more at Opensource.com

The first day of 2017 starts off for Linux users with the release of the second RC (Release Candidate) development version of the upcoming Linux 4.10 kernel, as announced by Linus Torvalds himself.

As expected, Linux kernel 4.10 entered development two weeks after the release of Linux kernel 4.9, on Christmas Day (December 25, 2016), but don’t expect to see any major improvements or any other exciting things in RC2, which comes one week after the release of the first RC, because most of the developers were busy partying.

Read more at Softpedia