I recently caught up with Joyent CEO Scott Hammond at LinuxCon in Seattle. Joyent has been a leader in supporting the growth and diversity of the Node.js community and was a founding member of the Node.js Foundation. I was interested to learn more about Scott and his work at Joyent, as well as more about the company’s contributions to Linux and open source. Below I include a Q&A with him on these topics. I’ll also be sharing a video interview with Scott a little later this fall.

Can you describe Joyent’s business?

Joyent is a cloud and infrastructure software company. We are big believers in containers and, along with Google, pioneered running container-based infrastructure at scale. Containers deliver bare metal performance, workload density, and web scale economics, far beyond what is possible with virtual machines.

Joyent’s Triton Elastic Infrastructure is the best place to run containers, making container ops simple and scalable with enterprise-grade security, software-defined networking and bare-metal performance. Triton is available for on-premises deployments or through the Joyent Triton Elastic Container Service on the Joyent Public Cloud.

Why is open source so important to the company?

Open source is the only way infrastructure software is being developed today. No meaningful proprietary infrastructure software has been built in the last 10 years. Open source has some significant advantages over the proprietary model. First, we get to engage the user community directly to collaborate on innovation, let them participate in the technical direction, and extend the software to address their unique requirements. We saw a great example of that recently as someone in the community built an OpenStack Heat template that allows you to use OpenStack to deploy containers directly on Triton instead of a VM.

Open source is also the best model to engage with customers since many have adopted an “open-first” policy where they look first for an open source solution before they evaluate proprietary products. Due to open access to source code, documentation, expertise, and support, organizations can evaluate, deploy, and utilize open source software without enduring a judo match with an overbearing proprietary sales rep.

Customers have witnessed community development delivering rapid innovation. Open source also allows them to de-risk their projects, avoid vendor lock-in, and steer clear of budget-crippling license agreements. You can see the effects of the switch from proprietary to open on the recent quarterly announcements of the large proprietary software companies.

How would you describe Joyent’s open source strategy thus far?

So far, we have utilized open source as a model to innovate quickly and engage with customers and a broad developer community. SmartOS and Node.js are open source projects we have run for a number of years. In November of last year we went all in when we open sourced two of the systems at Joyent’s core: SmartDataCenter and Manta Object Storage Service. The unifying technology beneath both SmartDataCenter and Manta is OS-based virtualization and we believe open sourcing both systems is a way to broaden the community around the systems and advance the adoption of OS-based virtualization industry-wide.

We’re also getting involved with the larger open source community through initiatives like The Open Container Initiative (OCI) and the Cloud Native Computing Foundation (CNCF). Last month, we joined the newly formed CNCF as a charter member because we believe it is a foundation with a clear mission that aligns with our values: accelerating innovation and adoption of open source, container-based cloud computing.

In addition to those more recent open source milestones for Joyent, we’ve of course been heavily involved in the Node.js project since its inception half a decade ago. I wasn’t at Joyent then, but the team fell in love with Node.js as a new platform on which to build its cloud management software. Joyent really believed in the project, so the company hired Ryan Dahl and became the project steward until the formation of the Node.js Foundation earlier this year.

What about Node.js drove Joyent to get so involved?

We immediately recognized just how important Node.js could become. It is a low latency, event-driven platform that has broad application in fast growing markets such as robotics, IoT, mobile, and the web. Joyent wanted to make sure Node.js flourished and ended up supporting the project through years of incredible adoption and growth.

What led to the decision to found the Node.js Foundation?

Our goals for the project were for it to be a production-grade platform. To ensure that the code was highly performant, highly available, and high quality, we felt it was important to support Ryan Dahl’s wishes to tightly control the project through a BDFL model. The project became massively popular and attracted a passionate group of developers and tens of thousands of production deployments. Over the years, the project became a victim of its own success. The vendor ecosystem that sprang up around Node demanded a neutral playing field so they could monetize Node, the developers insisted on a louder voice in the technical direction, and the customers wanted to de-risk the project. I feel very strongly that for a project to succeed, the needs of all constituents (developers, users, and vendors) must be balanced. It became pretty clear that the project had transcended the needs of any one company and, despite TJ Fontaine’s efforts to relax the constraints of the BDFL model, we needed to move to a new governance model. That’s why I decided to form the Node.js Advisory Board, which brought together a representative group of project constituents to work on governance issues, IP issues, community concerns, etc. We were all trying to avoid a fork, which would ultimately fracture the community, but obviously io.js forked in November. In the end, Joyent and everyone involved with Node.js wanted a single, unified project to succeed and grow under an open governance model. The Foundation gives us that and is the path to a long future.

How has the foundation functioned thus far?

I think we’re moving in a very positive direction. You can see exactly what we’re up to by checking out the public meeting notes and documents. Transparency is a major ingredient of this succeeding and we’re committed to keeping this open. Our mission is to drive widespread adoption and accelerate development of the project. If that is to happen, we need to avoid falling into corporate anti-open source patterns. When deciding to form the foundation, I talked a lot with Jim Zemlin from the Linux Foundation to see how we could set up a foundation that addressed the unique needs of the Node community and let the community dictate the technical direction. Whereas other foundations have fallen into pay-to-play situations driven by corporate desires, we set up an independent technical committee with good representation from the user community. I think we got it right and I’m confident the Node.js Foundation is on the path to long-term sustainability — particularly given the reunification with io.js, I think we’re well on our way.

What do you hope to see from Node.js in the next 10 years? What do you think Joyent’s involvement will be in the long run?

Joyent is going to stay very involved. We’ve built our core solutions on Node.js and poured resources into it for years. We plan to stay involved in the Foundation, make technical contributions to the project, and offer Node.js technical support. We’re in it for the long run. In terms of what I hope to see, I am optimistic about increased adoption and significant technical development over the next 10 years. There’s a lot of work ahead, and open governance by itself does not guarantee long-term success. All of us — the vendors, contributors, users — will need to balance our needs and encourage an open ecosystem.

What makes foundations a good model for open source technology? Do you think they will continue to be the preferred model?

Foundations allow for greater collaboration, transparency and accountability. They also are a neutral structure that provides the best vehicle to balance the needs of the developers, the users, and the vendors. Those are good things for all the reasons I’ve detailed above. But like I’ve pointed out, a foundation does not in and of itself guarantee technological success. As our CTO Bryan Cantrill describes so well, many foundations in the past have underestimated the complexities and restrictions of running a non-profit. Opening up ownership of a project can also lead to the loss of strong leadership. And, finally, some foundations — despite initial intentions — have fallen into the pay-to-play pattern of catering only to the needs of the largest donors.

So yes, I do think foundations are overall a good model for open source technology, but not without reservations. When an open source technology has reached a certain level of popularity and adoption that brings innumerable players and constituents into the fray, only a foundation can provide the necessary neutrality. I think foundations will continue to serve this purpose, but we all need to be diligent about maintaining that neutrality and the ability to think bigger than our own organizations. That’s part of the reason we’re so excited about the CNCF. At its core, the new foundation’s goals extend beyond any single technology or the needs of one company. Rather, it’s part of the new era of open source foundations, one in which corporate neutrality, transparency and innovation are the guiding values. We hope this foundation will be a model for open source moving forward.

What’s next for Joyent and open source?

We’re excited to witness the result of open sourcing SmartDataCenter and Manta. Already, we’ve seen organizations using the technologies in innovative ways and we’re committed to supporting open source in the future. Open source is an approach that works, and we’re sticking to it.

We are also excited about the potential impact of foundations like the CNCF. Foundations have historically been used as stewards for projects. The CNCF is playing a different role. It is a steward for a new model of computing. It brings together a cadre of projects and companies to define use cases, reference architectures, APIs, and PoCs that will de-risk and accelerate a new model of computing. We are breaking new ground, and it is rife with challenges, but I am optimistic about the impact we can have.

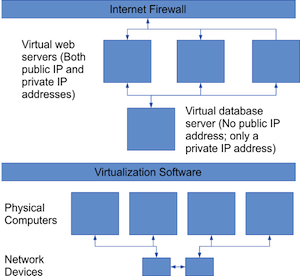

Not too long ago, software development was done a little differently. We programmers would each have our own computer, and we would write code that did the usual things a program should do, such as read and write files, respond to user events, save data to a database, and so on. Most of the code ran on a single computer, except for the database server, which was usually a separate computer. To interact with the database, our code would specify the name or address of the database server along with credentials and other information, and we would call into a library that would do the hard work of communicating with the server. So, from the perspective of the code, everything took place locally. We would call a function to get data from a table, and the function would return with the data we asked for. Yes, there were plenty of exceptions, but for many desktop applications, this was the general picture.
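As a rough illustration of that pattern, here is a minimal Python sketch. It is not taken from any particular application: the `open_connection` helper, the server name, the credentials, and the `customers` table are all hypothetical, and an in-memory SQLite database stands in for the separate database server so that the example runs anywhere. The point is only that the calling code names a server, hands over credentials, and then calls an ordinary function that returns rows as if everything were local.

```python
import sqlite3


def open_connection(server: str, user: str, password: str) -> sqlite3.Connection:
    """Hypothetical stand-in for a client library's connect call.

    A real client library would use server, user, and password to reach a
    remote database. This sketch ignores them (an assumption made so the
    example is self-contained) and opens an in-memory SQLite database.
    """
    conn = sqlite3.connect(":memory:")
    # Seed a small illustrative table; a real server would already hold data.
    conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany(
        "INSERT INTO customers (name) VALUES (?)",
        [("Ada",), ("Grace",)],
    )
    return conn


def fetch_customer_names(conn: sqlite3.Connection) -> list:
    """Looks like a local function call; the library does the communication."""
    rows = conn.execute("SELECT name FROM customers ORDER BY id").fetchall()
    return [name for (name,) in rows]


# The application code: name the server, pass credentials, ask for data.
conn = open_connection("db.example.internal", "app_user", "secret")
names = fetch_customer_names(conn)
print(names)
conn.close()
```

From the caller's side, `fetch_customer_names` behaves like any other function that returns a list, which is the "everything took place locally" impression described above.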

The X.Org Foundation, through Keith Packard, announced the immediate availability for download of the first Release Candidate (RC) build towards the X.Org Server 1.18 open-source implementation of the X Window System.

The Linux Foundation is no stranger to the world of open source and free software. After all, we are the home of Linux, the world’s most successful free software project. Throughout the Foundation’s history, we have worked not only to promote open-source software, but also to spread the collaborative DNA of Linux to new fields in the hope of enabling innovation and access for all.