By all metrics, it has been a good year for Node.js. During his keynote at Node.js Interactive in November, Rod Vagg, Technical Steering Committee Director at the Node.js Foundation, talked about the progress that the project made during 2016.

The Node.js Foundation is now sponsored by nearly 30 companies, including heavyweights such as IBM, PayPal, and Red Hat. The community of developers is also looking healthy. Within the Technical Group, 90 core collaborators currently have commit access to the Node.js repository; 48 of these core collaborators were active in the last year. Since 2009, when Node.js was born, the total number of contributors (that is, the number of people who have made changes in the Node Git repository) has kept growing. In fact, 2016 saw twice as many people per month contributing to the code base as in 2015.

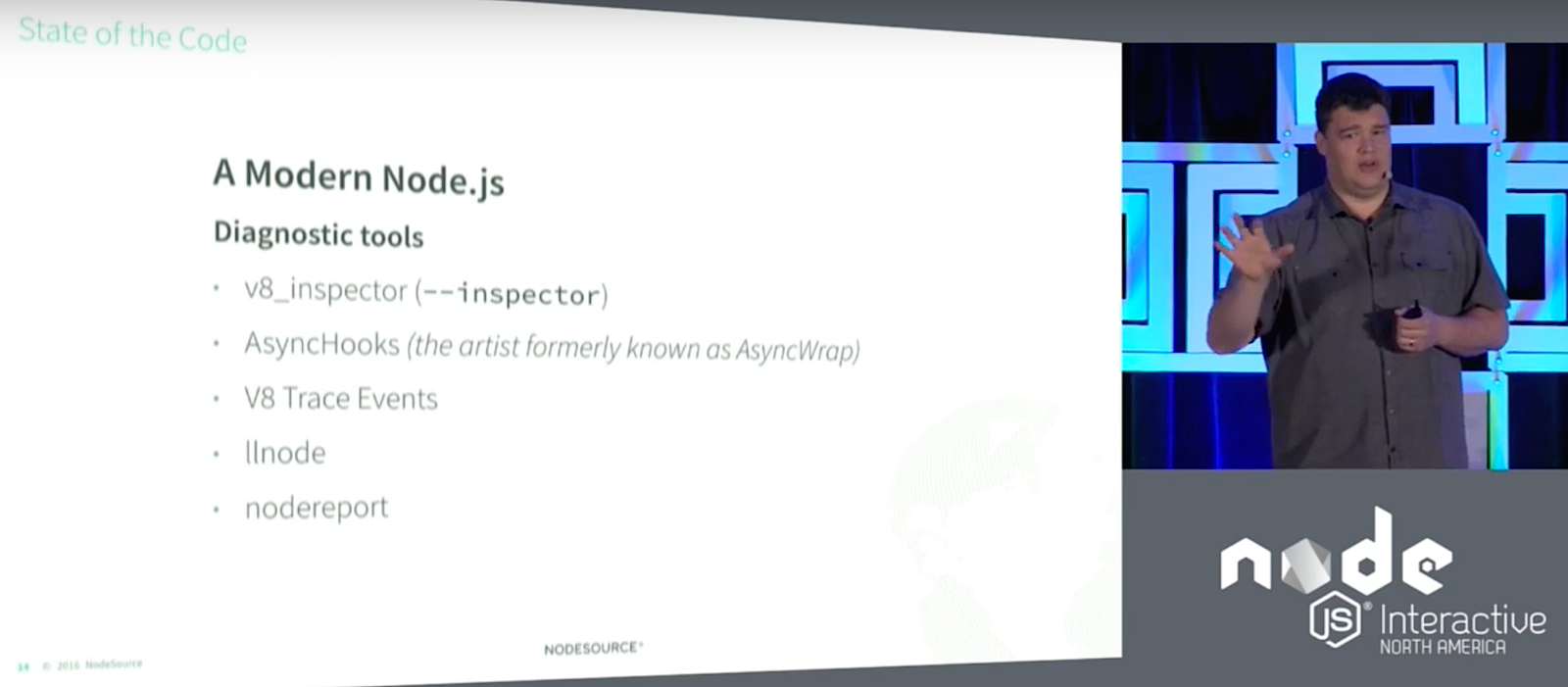

State of the Core Code

In 2016, the number of commits increased 125 percent relative to 2015, said Vagg. Despite this, the core stayed more or less stable. Minor changes touched 37 percent of the JavaScript and C++ code in the src/ and lib/ directories, and 58 percent of the test code was also tweaked. However, the majority of commits went into documentation: more than 90 percent of the lines in the API documents were changed. Vagg thinks documentation is probably the easiest way to get into contributing to Node and is therefore acting as a gateway for first-time contributors.

Vagg said developers can now count on more tools to help them if they decide to tackle programming issues. Node has traditionally been hard to debug, but new utilities, such as the V8_inspector extension for Chrome, allow developers to attach Chrome’s DevTools to their applications. This extension will probably supersede the old debugger in the near future. Other tools, such as AsyncHooks (previously AsyncWrap), V8 Trace Events, llnode, and nodereport, also help make Node.js applications easier to debug.

This hard work is paying off. For example, version 6, an LTS version, now implements 96 percent of ECMAScript 6/2015. It works both ways, too: Node now has contributors representing the community in TC39, the Ecma technical committee that evolves and regulates the development of JavaScript, so Node.js will have a say in the future of the base language.

State of Releases & LTS

Explaining how Node releases and versions work, Vagg said that in 2016 there were 63 releases covering four different versions: 0.12, 4, 5, and 6. From version 4 onwards, versions with even numbers are LTS and are supported for three years. Versions with odd numbers are supported for three months. Hence, Vagg recommended that shops with large deployments always implement even-numbered versions.
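The even/odd rule described above can be sketched as a small helper. This is only an illustration of the rule of thumb as reported here: `classifyReleaseLine` and `ReleaseKind` are hypothetical names, not part of any Node.js API, and the "legacy" bucket for everything before version 4 is an assumption added for completeness.

```typescript
// Illustrative sketch only. The names below are hypothetical and do not
// correspond to any Node.js API.
type ReleaseKind = "lts" | "short-lived" | "legacy";

function classifyReleaseLine(version: string): ReleaseKind {
  // Accept strings such as "v6.9.1", "6.9.1", or just "6".
  const match = /^v?(\d+)(?:\.|$)/.exec(version.trim());
  if (match === null) {
    throw new Error("Unrecognized version string: " + version);
  }
  const major = Number(match[1]);

  // Assumption: anything before version 4 predates the even/odd scheme.
  if (major < 4) {
    return "legacy";
  }
  // From version 4 onwards: even major numbers are LTS, odd ones are not.
  return major % 2 === 0 ? "lts" : "short-lived";
}

console.log(classifyReleaseLine("v6.9.1")); // lts
console.log(classifyReleaseLine("7.2.0"));  // short-lived
console.log(classifyReleaseLine("0.12.18")); // legacy
```

A deployment script could use a check like this to warn when a host is running an odd-numbered line.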

Version 0.10, which Vagg described as the “Windows XP of Node.js,” is still being used “because it was the first ‘ready’ version.” However, 0.10 received no support in 2016, having reached its end of life in 2015. Version 0.12 reached its end of life in 2016. Hence, Vagg urged people using either of these versions to update to something more current, such as version 4 (code named “Argon”).

Argon is an LTS version and will be maintained until April 2018. Version 5, however, as a non-LTS version, reached its end of life in June 2016. The most current non-LTS version at the time of writing is version 7, which will be maintained until April 2017. Besides version 4, there is another current LTS version: version 6 (code name “Boron”). Boron started life in April 2016 and will be maintained until 2019. A new LTS version, version 8, will be coming out in April 2017 and will be maintained until 2020.

Vagg said upgrading has been pretty painless ever since version 4. Currently, a whole crew of release managers guarantees a smooth transition between versions. If you are using Node in a large environment, Vagg recommends implementing a migration strategy to avoid “getting stuck” on an unsupported version.
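As a rough illustration of what a migration check might look like, the sketch below encodes the end-of-maintenance dates quoted above for versions 4 through 7 and reports whether a release line is past its date. The table and the `isPastEndOfMaintenance` helper are hypothetical. Where the article gives only a year, the sketch assumes maintenance lasts through December of that year, and it treats the quoted month as the last maintained month; both are assumptions rather than official policy.

```typescript
// Illustrative sketch only; names and table layout are hypothetical.
interface EndOfMaintenance {
  year: number;
  month?: number; // 1-12; omitted when only the year is known
}

// Dates as quoted in this article, keyed by major version.
const END_OF_MAINTENANCE: { [line: string]: EndOfMaintenance } = {
  "4": { year: 2018, month: 4 }, // Argon (LTS)
  "5": { year: 2016, month: 6 },
  "6": { year: 2019 },           // Boron (LTS), month not stated
  "7": { year: 2017, month: 4 },
};

// Returns true if the line is past its end of maintenance at the given
// date, false if still maintained, and undefined for lines not in the table.
function isPastEndOfMaintenance(
  line: string,
  year: number,
  month: number
): boolean | undefined {
  const end = END_OF_MAINTENANCE[line];
  if (end === undefined) {
    return undefined;
  }
  // Assumption: an unknown month means "through the end of that year".
  const endMonth = end.month === undefined ? 12 : end.month;
  return year > end.year || (year === end.year && month > endMonth);
}

console.log(isPastEndOfMaintenance("5", 2016, 11)); // true
console.log(isPastEndOfMaintenance("4", 2016, 11)); // false
```

In a large environment, a check along these lines could run in continuous integration so that a release line nearing its end of maintenance is flagged before it becomes unsupported.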

State of the Build

Vagg used the State of the Build segment of his presentation to mention the companies and individual users that make development possible within Node.js. DigitalOcean and Rackspace, for example, have donated resources and funds from the very beginning. The foundation also counts on an ARM cluster made up largely of Raspberry Pis, many of which have been donated by individuals.

These resources are configured to test Node.js core, libuv, V8, full release builds, and more. The cluster itself contains 141 build, test, and release nodes connected full-time. Each build is compiled for 25 different operating systems and eight different architectures. Every build is painstakingly tested before a new version is released.

State of Security

In discussing the state of security, Vagg said that security reports should be sent to security@nodejs.org; it is the task of the CTC and domain experts to discuss and solve issues. When an issue is confirmed, a notification is posted to the nodejs.org and nodejs-sec Google Groups, following Node.js’ “full disclosure” policy. LTS release lines receive as few changes as possible to ensure the platform remains stable. Overall, there were seven security releases during 2016, none of which were severe.

The Node.js Foundation is also working on a new Node security project. The project sets up a public working group, made up of professionals from ^Lift and other interested parties. The idea is to facilitate the creation of a healthy ecosystem of security service and product providers that work together to bring more rigor and formality to security handling in the core and the open source ecosystem.

Membership is also open to individuals, communities, and other companies. Vagg encouraged anyone who would like to join to visit the workgroup’s site on GitHub.
