If you’ve ever gone to an event that required a ticket, chances are you’ve done business with Ticketmaster. The ubiquitous ticket company has been around for 40 years and is the undisputed market leader in its field.

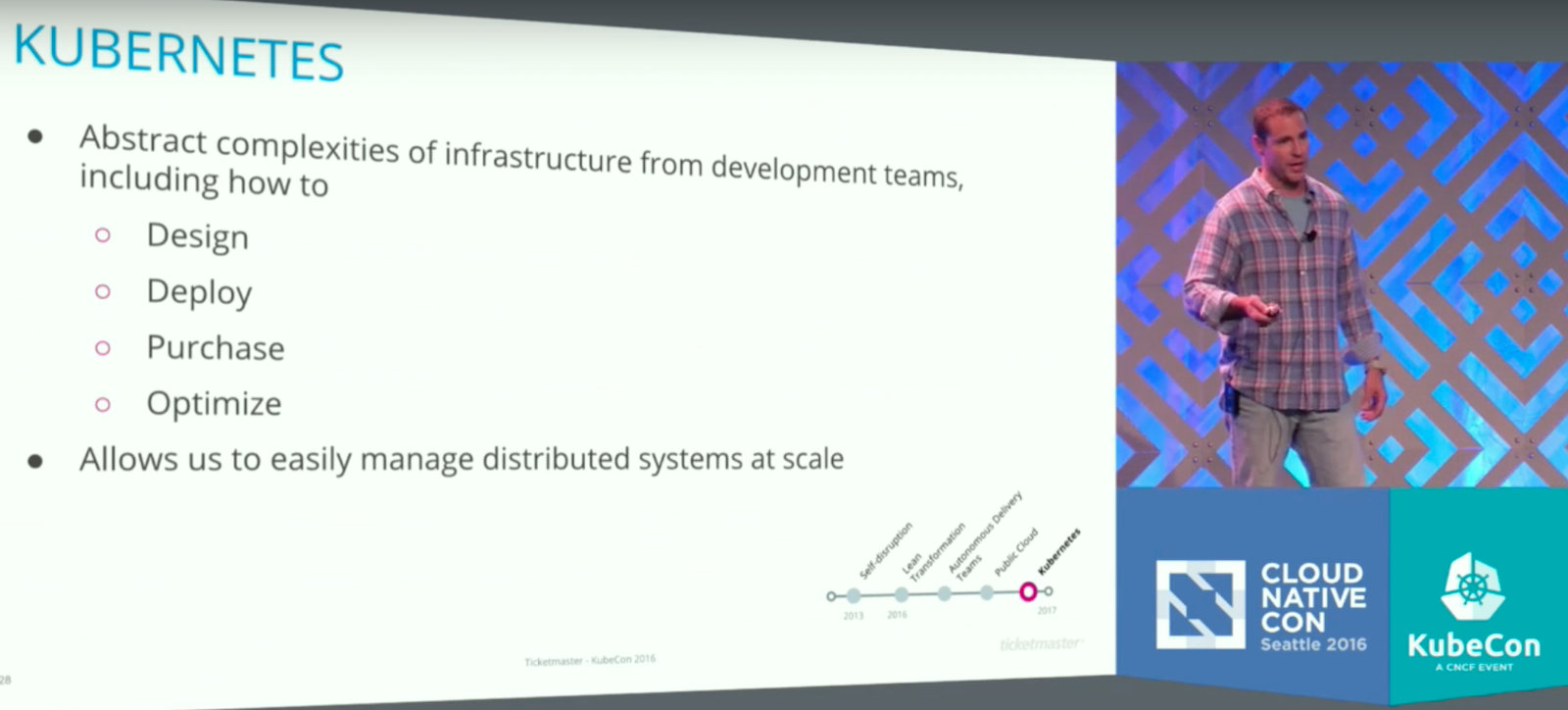

To stay on top, the company wants its best product creators to focus on products, not infrastructure. It has begun rolling out an ambitious public cloud strategy built on Kubernetes, an open source platform for deploying and managing application containers, to keep everything running smoothly. It also sent two of its top technologists to deliver a keynote at CloudNativeCon 2016 in Seattle explaining their methodology.

Continuous Self-Disruption

Ticketmaster was the first to disrupt the ticket industry when it was founded at Arizona State University in 1976, and its leaders are well aware that, as the ubiquitous market leader, the company is now ripe for disruption itself. So since 2013, Ticketmaster has undergone a continuous process of “self-disruption” to stay ahead of any competition.

“It is great to be the market leader, but it is also a terrifying place to be,” said Justin Dean, Ticketmaster’s SVP of Platform and Technical Operations, during the keynote. “Ticketmaster, our ecosystem, has a huge surface area. One little piece of that surface area could be an entire business for a startup or for a small company. For us, what we have to do as a company is really optimize for speed and agility.”

This approach has included a shift from a private cloud, with more than 22,000 virtual machines across seven global data centers, to the public cloud on AWS. It has also meant a major commitment to containerization. Dean and his co-presenter, Kraig Amador, joked that every version of every piece of software the company has created over the past 40 years is still running somewhere inside Ticketmaster, including emulated VAX software from the 1970s that runs the company’s original, groundbreaking system.

Dean said the company has 21 different ticketing systems and more than 250 unique products. As part of its transformation, Ticketmaster has created more than 65 cross-functional software product teams, and those teams need a system that lets them focus on creating new products. This is where Kubernetes comes in.

Let the Makers Make

“Our goal of all of this is [to] let the makers make,” Dean said. “We have an amazing company of makers, creators, visionaries, innovators: people who can focus on delivering products to market, and that is where they should figure out the next big thing, to power our business and make it better. We do not want to burden them with also having to figure out how to deploy infrastructure to support their software.”

Amador, Ticketmaster’s Senior Director of Core Platform, is leading the effort to fully implement Kubernetes at the company. He said the work is far from over, but early returns have been very promising.

Amador explained that, after an extensive internal product audit and team evaluation, Ticketmaster’s DevOps team built tools to gauge the health of each piece of code and to help ensure that all of these products run smoothly and independently of one another.

Independence is of major importance, Amador said. Ticketmaster essentially invites what amounts to a DDoS attack on its own servers every time tickets for a popular concert or event go on sale. So when a service gets overwhelmed, which he said happens all the time, Kubernetes is there to get it running again.

“By putting in the Kubernetes and leveraging the pod health checks, Kubernetes can catch that for us and bring it back up for us,” Amador said. “We don’t have to go in there and manually manage it anymore; it just kind of does its own thing.”

Dean said Ticketmaster was very deliberate in its move to the public cloud: the company needs a system that can not only handle $25 billion in commerce every year but also scale seamlessly as the business grows.

“We have to ensure we have the right strategies,” Dean said. “One of those is really ensuring that we are betting big in the right communities. We definitely feel that the Kubernetes community is the community that we want to be a part of. And we want to encourage others to join us along the journey and add more anchors of big companies into the community so that it can continue to thrive and grow, so that some of these problems get solved and we divide and conquer.”

Watch the complete video below:
