Let’s Encrypt has been awarded a grant from The Ford Foundation to help fund its growing operations. This is the first grant awarded to the young nonprofit, a Linux Foundation project that provides free, automated and open SSL certificates to more than 13 million fully qualified domain names (FQDNs).

The grant will help Let’s Encrypt make several improvements, including increased capacity to issue and manage certificates. It also covers the cost of recent work to add support for Internationalized Domain Name certificates.

“The people and organizations that Ford Foundation serves often find themselves on the short end of the stick when fighting for change using systems we take for granted, like the Internet,” Michael Brennan, Internet Freedom Program Officer at Ford Foundation, said. “Initiatives like Let’s Encrypt help ensure that all people have the opportunity to leverage the Internet as a force for change.”

We talked with Brennan and Josh Aas, Executive Director of Let’s Encrypt, about what this grant means for the organization.

Michael Brennan: The Ford Foundation believes that all people, especially those who are most marginalized and excluded, should have equal access to an open Internet, and enjoy legal, technical, and regulatory protections that promote transparency, equality, privacy, free expression, and access to knowledge. A system for acquiring digital certificates to enable HTTPS for websites is a fundamental piece of infrastructure towards this goal. As a free, automated and open certificate authority, Let’s Encrypt is a model for how the Web can be more accessible and open to all.

Linux.com: What is the problem that Let’s Encrypt is trying to solve?

Josh Aas: As the Web becomes more central to our everyday lives, more of our personal identities are revealed through unencrypted communications. The job of Let’s Encrypt is to help those who have not encrypted their communications, especially those who face a financial or technical barrier to doing so. Let’s Encrypt offers free domain validation (DV) certificates to people in every country in a highly automated way. Over 90% of the certificates we issue go to domains that were previously unencrypted or were otherwise not using publicly trusted certificates.

Linux.com: How does Let’s Encrypt further the goals of The Ford Foundation?

Michael Brennan: We think a lot about the digital infrastructure needs of the open Web. This is a massive area of exploration with numerous challenges, so how and where can the Ford Foundation make a meaningful impact? One of the ways we believe we can help is by supporting initiatives that broadly scale access to security and help introduce those efforts to civil society organizations fighting for social justice. Let’s Encrypt fits perfectly into this goal by both serving critical Web security needs of civil society organizations and doing so in a way that is massively scalable.

Linux.com: From your perspective at The Ford Foundation, what population of people is Let’s Encrypt serving?

Michael Brennan: The Internet Freedom team recently took a trip to the Ford Foundation office in Johannesburg, South Africa. While we were there, we met with a number of organizations leveraging the Internet to promote social justice. One of the organizations we met was building a tool to serve the needs of local communities. They were thrilled to hear we were supporting Let’s Encrypt because, before it existed, they could only afford to secure their production server, not their development or testing servers.

Let’s Encrypt is changing security on the Web on a massive scale, so it can be easy to overlook small victories like this. The people and organizations that Ford Foundation serves often find themselves on the short end of the stick when fighting for change using systems we take for granted, like the Internet. Initiatives like Let’s Encrypt help ensure that all people have the opportunity to leverage the Internet as a force for change.

Linux.com: What can Let’s Encrypt users expect as a result of this grant?

Josh Aas: This grant funds several improvements, including our recently added support for Internationalized Domain Name certificates. We will also use these funds to increase capacity to keep up with the growing number of certificates we issue and manage.

Linux.com: What other fundraising initiatives are you pursuing?

Josh Aas: We run a pretty financially lean operation. Next year, we expect to be managing certificates covering well over 20 million domains with an operating cost of $2.9M. We have funding agreements in place with a number of sponsors, including Cisco, Akamai, OVH, Mozilla, Google Chrome, and Facebook. Some of those agreements are multi-year. These agreements provide a strong financial foundation, but we will continue to seek new corporate sponsors and grant partners in order to meet our goals. We will also be running a crowdfunding campaign in November so individuals can contribute.

Linux.com: How can people financially support Let’s Encrypt today?

Josh Aas: We accept donations through PayPal. Any companies interested in sponsoring us can email us at sponsor@letsencrypt.org. Financial support is critical to our ability to operate, so we appreciate contributions of any size.

Linux.com: How can developers and website admins get started with Let’s Encrypt?

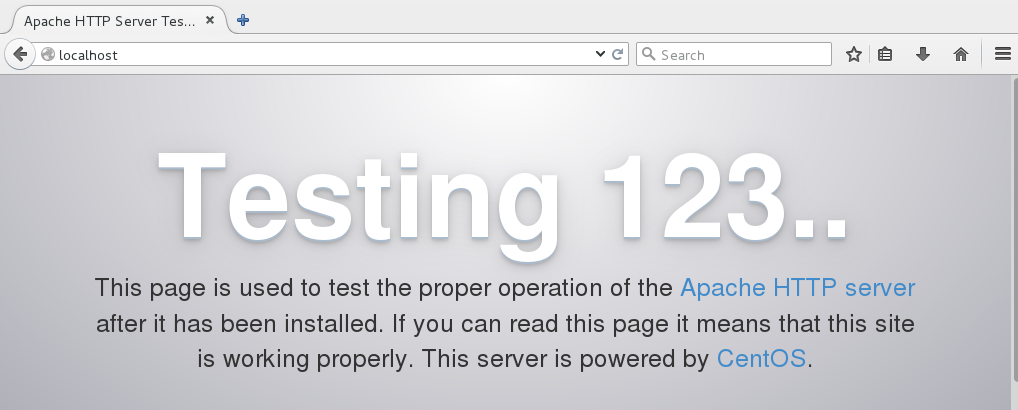

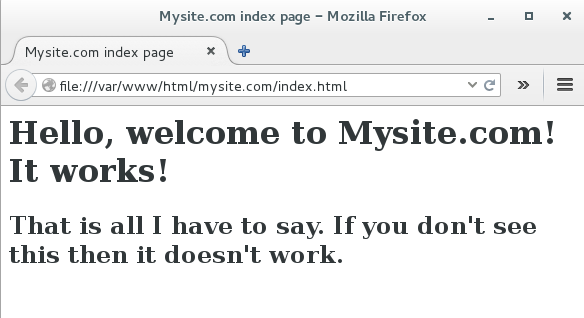

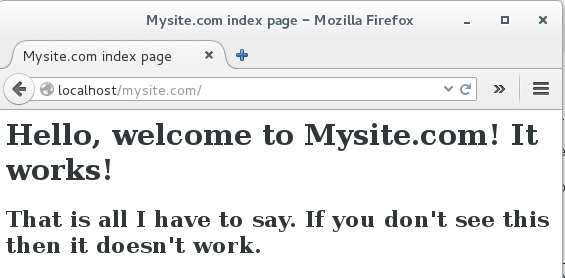

Josh Aas: It’s designed to be pretty easy. In order to get a certificate, users need to demonstrate control over their domain. With Let’s Encrypt, you do this using software that uses the ACME protocol, which typically runs on your web host.

We have a Getting Started page with easy-to-follow instructions that should work for most people.

We have an active community forum that is very responsive in answering questions that come up during the install process.
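For readers curious what "demonstrating control over a domain" looks like in practice, here is a minimal Python sketch of the HTTP-01 challenge, one of the validation methods ACME defines (as later standardized in RFC 8555). It is an illustration under stated assumptions, not Let’s Encrypt’s code or any particular client’s implementation. The CA gives the client a token, and the client’s web server must then serve the "key authorization" (the token, a dot, and the RFC 7638 thumbprint of the account’s public key) at a well-known path on the domain. The helper names `jwk_thumbprint` and `http01_response`, the token, and the key values are hypothetical placeholders.

```python
import base64
import hashlib
import json


def b64url(data: bytes) -> str:
    """Base64url-encode without trailing '=' padding, as JOSE/ACME require."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def jwk_thumbprint(jwk: dict) -> str:
    """RFC 7638 thumbprint: SHA-256 over only the required public-key
    members, with keys sorted lexicographically and no whitespace."""
    required = {
        "RSA": ("e", "kty", "n"),
        "EC": ("crv", "kty", "x", "y"),
    }[jwk["kty"]]
    canonical = json.dumps(
        {k: jwk[k] for k in required}, separators=(",", ":"), sort_keys=True
    )
    return b64url(hashlib.sha256(canonical.encode("utf-8")).digest())


def http01_response(domain: str, token: str, account_jwk: dict) -> tuple[str, str]:
    """Return (URL the CA will fetch, body the web server must serve there)."""
    key_authorization = f"{token}.{jwk_thumbprint(account_jwk)}"
    url = f"http://{domain}/.well-known/acme-challenge/{token}"
    return url, key_authorization


if __name__ == "__main__":
    # Hypothetical values for illustration only: a made-up token and a
    # placeholder EC public key, not real output from an ACME server.
    token = "evaGxfADs6pSRb2LAv9IZf17Dt3juxGJ-PCt92wr-oA"
    jwk = {
        "kty": "EC",
        "crv": "P-256",
        "x": "f83OJ3D2xF1Bg8vub9tLe1gHMzV76e8Tus9uPHvRVEU",
        "y": "x_FEzRu9m36HLN_tue659LNpXW6pCyStikYjKIWI5a0",
    }
    url, body = http01_response("example.org", token, jwk)
    print(url)
    print(body)
```

A full ACME client also signs every request with the account key and tells the CA when the file is in place so the CA can fetch it; the sketch leaves those steps out and shows only what ends up on the web server.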