NVIDIA has kicked off CES week by announcing its latest Tegra ARM SoC, the Tegra K1. The NVIDIA Tegra K1 is a killer SoC with a custom 64-bit design and a Kepler-derived graphics processor with 192 GPU cores…

LG Reveals Massive Plans for webOS TV Platform

LG hasn’t yet taken the stage for its CES keynote, but the company’s Korea division has already revealed the webOS TV. Palm’s mobile operating system has been resurrected as a TV interface that focuses on ease of use. And LG is putting its weight behind the effort: webOS will be used on over 70 percent of the company’s 2014 Smart TV lineup. webOS on the TV is very different from what you remember on mobile phones. It’s now based around three new features: simple connection, simple switching, and simple discovery.

Simple connection aims to help users properly set up the TV. When you first turn on the TV, an animated character called Bean Bird appears to help guide you through various options. Simple switching is reminiscent of the cards…

Belkin Shows Linux Powered Smart Slow Cooker at CES 2014

This Crock-Pot can be controlled remotely via the WeMo app for Android or iOS…

The post Belkin shows Linux powered smart slow cooker at CES 2014 appeared first on Muktware.

Munich Transition Documentary

Just in general, if someone has connections with anyone over in Munich, what would be the possibility that a documentary covering their decade long migration to Linux could be assembled for the rest of us to understand better all the trials and tribulations that had to be overcome to bring the complete transition to fruition?

The Benefits of Well-Planned Virtualization

One of the biggest challenges facing IT departments today is keeping the work environment running. That means maintaining an IT infrastructure able to meet the current demand for services and applications, while also ensuring that, in a critical situation, the company is able to resume normal activities quickly. And this is where the big problem appears.

Many IT departments are working at their physical, logical, and economic limits. Their budget is very small and grows on average 1% a year, while management complexity grows at an exponential rate. IT has been viewed as a pure cost center and not as an investment, as I have observed in most of the companies I have worked for.

With this reality, IT professionals have to juggle to maintain a functional structure. To colleagues working in a similar reality, I recommend paying special attention to the topic of Virtualization.

Contrary to what many speculate, Virtualization is not an expensive process compared to its benefits, although, depending on the scenario, it can be more expensive than many traditional designs. To give you an idea, today over 70% of the IT budget is spent just to keep the existing environment running, while less than 30% of the budget is invested in innovation, differentiation, and competitiveness. This means that almost all IT investment is dedicated simply to “putting out fires” and solving emergencies, and very little is spent on solving the underlying problem.

I have followed a very common reality in the daily life of large companies, where the IT department is so overwhelmed that it cannot find the time to stop and think. In several of them, we see two completely different scenarios: before and after Virtualization / cloud computing. In the first, what we see is a drastic bottleneck, with resources at the limit. In the second, a scene of tranquility, with secure management and scalability guaranteed.

Therefore, consider the proposal of Virtualization and discover what it can do for your department and, in turn, for your company.

Within this reasoning, we have two maxims. The first: “Rethinking IT.” The second: “Reinventing the business.”

The big challenge for organizations is precisely this: to rethink. What must be done to transform technicians into consultants?

Increase efficiency and security

As the structure grows, so does the complexity of managing the environment. It is common to see a data center server dedicated to a single application. This is because best practice calls for each service to have a dedicated server. The practice is still valid, because without doubt it is the best option for avoiding conflicts between applications, performance problems, and so on. However, environments such as this are becoming increasingly wasteful, as processing capacity and memory are increasingly underutilized. On average, only 15% of a server's processing power is consumed by its application; that is, over 80% of the processing power and memory goes unused.

Can you imagine the situation? On the one hand we have virtually unused servers, while others need more resources; and applications keep getting lighter while the hardware keeps getting more powerful.

Another point that needs careful consideration is the safety of the environment. Imagine a database server with a disk problem. How much difficulty would that cause your company today? Consider the time your business needs to quote, purchase, receive, replace, and configure the failed item. And during all this time, what becomes of the problem?

Many companies are based in cities or regions far from major centers and therefore cannot even contemplate this scenario.

With Virtualization this does not happen, because we leave the traditional scenario, where we have a lot of servers, each hosting its own operating system and applications, and move to a more modern and efficient one.

In the image below, we can see the process of migrating from a physical environment with multiple servers to a virtual environment, where fewer physical servers host the virtual servers.

[Image: vmware1]

With this technology, the underutilized servers for different applications / services are assigned to the same physical hardware, sharing CPU, memory, disk, and network resources. As a result, the average usage of this equipment can reach 85%. Moreover, fewer physical servers means less spending on supplies, memory, and processors, less spending on power and cooling, and therefore fewer people needed to manage the structure.

[Image: vmware2]
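To put rough numbers on the consolidation described above, here is a minimal sketch in Python. It assumes that all machines have identical hardware and that load can be summed linearly, which real environments rarely allow. The function name `hosts_needed` and its parameters are my own illustration and are not part of any VMware tool.

```python
import math


def hosts_needed(num_servers: int, avg_utilization: float,
                 target_utilization: float) -> int:
    """Estimate how many physical hosts remain after consolidation.

    Assumption: every server has the same capacity, so total load is
    simply num_servers * avg_utilization, measured in "whole servers".
    """
    if not 0 < target_utilization <= 1:
        raise ValueError("target_utilization must be in (0, 1]")
    if not 0 <= avg_utilization <= 1:
        raise ValueError("avg_utilization must be in [0, 1]")

    total_load = num_servers * avg_utilization
    # Round up: a fraction of a host still needs a whole machine.
    return max(1, math.ceil(total_load / target_utilization))


# 20 dedicated servers at 15% usage, consolidated onto hosts run at 85%.
print(hosts_needed(20, 0.15, 0.85))
```

With the article's figures of 15% and 85%, twenty dedicated servers fit on four hosts under these assumptions. A real sizing exercise would also leave spare capacity for failover.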

At this point you may ask: what about security? If I now have multiple servers running simultaneously on a single physical server, am I at the mercy of that server? What if the equipment fails?

Rethinking involves not only the technology but also how to implement it in the best way possible. Today VMware, the global leader in Virtualization and cloud computing, works with cluster technology, enabling and ensuring high availability of your servers. Basically, if you have two or more servers working together and any of the equipment fails, VMware identifies the fault and automatically restores all of its services on another host. This is automatic, without IT staff intervention.

In practice, when a physical failure is simulated to test the high availability and security of the environment, the response time is fairly quick. On average, the servers are restarted with an interval of 10 seconds, 30 seconds, or up to 2 minutes between each one. In some scenarios, the whole operating environment can be back up in about 5 minutes.
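The failover idea can be illustrated with a small Python sketch. This is not how VMware decides where to restart a virtual machine; it is a simplified model that assumes every VM has the same weight and that the least-loaded surviving host is always chosen. The function `fail_over` and the host and VM names are hypothetical.

```python
def fail_over(placement: dict[str, list[str]],
              failed_host: str) -> dict[str, list[str]]:
    """Return a new placement with the failed host's VMs restarted elsewhere.

    placement maps a host name to the list of VMs running on it.
    Assumption: each VM is restarted on the surviving host that
    currently runs the fewest VMs.
    """
    survivors = {host: list(vms) for host, vms in placement.items()
                 if host != failed_host}
    if not survivors:
        raise RuntimeError("no surviving host to restart VMs on")

    for vm in placement.get(failed_host, []):
        # Pick the surviving host with the fewest VMs at this moment.
        target = min(survivors, key=lambda host: len(survivors[host]))
        survivors[target].append(vm)
    return survivors


cluster = {"host-a": ["db", "web"], "host-b": ["mail"], "host-c": []}
print(fail_over(cluster, "host-a"))
```

The original placement is left untouched, so the before and after states can be compared when testing a simulated failure.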

Make new services available quickly

In a virtualized environment, making new services available becomes a quick and easy task, since resources are managed by the Virtualization tool and are not tied to a single physical machine. This way you can give a virtual server only the resources it needs and therefore avoid waste. On the other hand, if demand increases rapidly, you can increase the amount of memory allocated to this server. The same reasoning applies to disks and processing.

Remember that you are limited by the amount of hardware present in the cluster: you can only increase the memory of a virtual server if that memory is available in the physical environment. This puts an end to underutilized servers, as you begin to manage your environment intelligently and dynamically, ensuring greater stability.
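The limit described above can be sketched as a simple capacity check in Python. The `Cluster` class and its methods are hypothetical and exist only for illustration. The sketch also assumes no memory overcommitment, so a VM can never be given more memory than is physically free, which is stricter than what real hypervisors allow.

```python
class Cluster:
    """Toy model of a cluster's memory pool (no overcommit assumed)."""

    def __init__(self, physical_memory_gb: int):
        self.physical_memory_gb = physical_memory_gb
        self.vm_memory_gb: dict[str, int] = {}

    def free_memory_gb(self) -> int:
        """Physical memory not yet assigned to any VM."""
        return self.physical_memory_gb - sum(self.vm_memory_gb.values())

    def resize_vm(self, name: str, new_memory_gb: int) -> bool:
        """Set a VM's memory; refuse if the cluster lacks the extra memory."""
        current = self.vm_memory_gb.get(name, 0)
        extra = new_memory_gb - current
        if extra > self.free_memory_gb():
            return False  # not enough physical memory in the cluster
        self.vm_memory_gb[name] = new_memory_gb
        return True


cluster = Cluster(physical_memory_gb=64)
cluster.resize_vm("db", 32)
cluster.resize_vm("web", 16)
print(cluster.resize_vm("db", 56))  # needs 24 GB more, only 16 GB free
print(cluster.resize_vm("db", 48))  # needs 16 GB more, exactly what is free
```

The same check could be repeated for disk and CPU, which the article says follow the same reasoning.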

Integrating resources through the cloud

Cloud computing is a reality, and there is no cloud without Virtualization. VMware provides a tool called vCloud, with which it is possible to have a private cloud using your virtual structure, all managed with a single tool.

Reinventing the Business

After rethinking, now is the time to change and to reap the rewards of having an optimized IT organization. When we carry out a project structured around high availability, security, capacity for growth, and technology, everything becomes much easier. Among the benefits, we can mention the following:

Respond quickly to business expansion

When working in a virtualized environment that has been designed professionally to meet all your needs, you can meet the demand for new services. This is possible with VMware because a new server can be configured in a few clicks and, in five minutes, is ready to use. Today this is crucial, since the time available to start a new project keeps decreasing.

Increase focus on strategic activities

With the environment under control, management is simple and it becomes easier to focus on the business. That’s because almost all the information and operational work is taken care of, leaving IT free to think about the business, and that is what transforms a technician into a consultant. The team can therefore be fully focused on technology and strategic decisions, rather than acting as firefighters dedicated to putting out fires.

Aligning the IT department’s decision making

Virtualization gives IT staff metrics, reporting, and analysis. With these reports, they have in their hands a professional tool that presents the reality of their environment in fairly simple, understandable language. Often, this information supports a negotiation with management and, therefore, the approval of the budget for the purchase of new equipment.

Well folks, that’s all. I tried not to write too much, but it’s hard to cover something this important in fewer lines. I promise that future articles will discuss VMware and how it works in a little more detail.

Open Computing Accelerated Sharply in 2013

Editor’s Note: This is a guest blog post by Adam Jollans, Program Director of Linux and Open Virtualization Strategy at IBM.

Open computing has been steadily growing in enterprise acceptance and, in 2013, that trend accelerated sharply. Many factors contributed to the upward trajectory of open computing in the last year. However, there were three notable developments that, in retrospect, were the critical game-changers.

Here’s a look at the three key developments in open source in 2013:

KVM Momentum

At LinuxCon/CloudOpen Europe in October, it was announced that the Open Virtualization Alliance (OVA) would become a Collaborative Project under The Linux Foundation. The OVA was originally founded in May 2011 to help advance adoption of the Kernel-based Virtual Machine (KVM) hypervisor by providing education, best practices and nurturing a vibrant ecosystem around open virtualization. Today, hundreds of companies from all over the globe are members of the OVA – it is these members that will continue to contribute to and guide the OVA as a Collaborative Project at The Linux Foundation. KVM will also benefit from access to a wider ecosystem and synergy with Linux Foundation events and marketing activities.

Open virtualization with KVM offers a high degree of scalability. In fact, KVM holds the top results in both SPECvirt_sc2010 and SPECvirt_sc2013 benchmarks. In addition, KVM provides strong enterprise level security as a result of SELinux, which enables it to provide Mandatory Access Control and enforced isolation of virtual machines. A core component of the Linux kernel, KVM has gained wide acceptance. According to a recent IDC white paper, cloud providers are embracing KVM. Many prominent public clouds have been built on KVM, including the Google Compute Engine, HP Cloud, and IBM SmartCloud Enterprise.

As Jim Wasko, Director, IBM Linux Technology Center, pointed out in his keynote at SUSECon 2013, new applications – including big data, cloud, social and mobile – are placing greater demands on organizations’ IT infrastructures. Linux and other open technologies are critical to solving these challenges because they can provide agility, interoperability, and choice. Now that the OVA is a Collaborative Project under The Linux Foundation, KVM can be expected to extend its reach in the enterprise and get its open message out to many more users and partners.

Linux on Power

In September, IBM revealed its plans to invest $1 billion in new Linux and open source technologies for IBM’s Power Systems servers. This investment, which will be made over the next 5 years, is aimed at helping clients capitalize on big data and cloud computing with modern systems that can handle the new wave of applications emerging in the post-PC era.

The new pledge echoed IBM’s announcement more than 13 years ago that it would invest $1 billion in the Linux movement. Today, Linux is accepted and trusted within the majority of enterprise server environments, according to a study commissioned by enterprise Linux provider SUSE. Eighty-three percent of respondents said they are now running Linux in their server environments, and more than 40% are using Linux as either their primary server operating system or as one of their top server platforms.

New workloads like analytics and big data require powerful processors with multiple threads and high memory bandwidth. Building IBM’s Jeopardy!-winning cognitive computing solution Watson on Linux on POWER Systems, for example, has enabled IBM customers to run workload-optimized systems for everything from complex analytics to high performance computing in fields like Healthcare, Customer Service, and Finance.

We at IBM think there is a big opportunity for customers to benefit from open solutions on Linux on Power. The new $1 billion investment will make that possible – just as the similar investment in the early days helped propel Linux into the enterprise.

OpenStack

To ensure that innovation in cloud computing is not hampered by locking businesses into proprietary islands of insecure and difficult-to-manage offerings, in early 2013, IBM said that all of its cloud services and software will be based on an open cloud architecture supporting industry-wide open standards for cloud computing.

As its first step, IBM unveiled new private cloud offerings based on the open source OpenStack software. IBM SmartCloud Entry and IBM SmartCloud Provisioning speed and simplify managing an enterprise-grade cloud so that, for the first time, businesses have a core set of open source-based technologies to build enterprise-class cloud services that can be ported across hybrid cloud environments. The OpenStack Project, an open source cloud platform, effectively provides the operating system for cloud, enabling the basis for interoperability and choice in terms of moving workloads from, to and between clouds, including public and private clouds.

The majority of enterprise IT decision makers that responded to a recent Red Hat survey indicated that OpenStack is part of their organization’s future cloud infrastructure plans. The “2013 Path to an OpenStack-Powered Cloud” survey of 200 U.S. enterprise decision makers, commissioned by Red Hat through IDG Connect, found that internal development of private cloud platforms has left organizations with a host of challenges to address, including resource management, IT management, application management, and application migration. According to the survey results, as organizations seek to address these issues, they are moving or planning a move to OpenStack for private cloud initiatives. And, not surprisingly, a recent OpenStack User Survey indicated that 62% of OpenStack deployments use KVM as the hypervisor of choice.

The Open Path Forward

Taken together, the OVA becoming part of The Linux Foundation, IBM’s $1 billion commitment to Linux on Power, and growing industry support for the OpenStack-supported open cloud were the key developments that moved open technologies deeper into the enterprise. These developments will also form the foundation for further open source expansion in 2014.

Adam Jollans is currently leading the worldwide cross-IBM Linux and open virtualization strategy for IBM. In this role he is responsible for developing and communicating the strategy for IBM’s Linux and KVM activities across IBM, including systems, software and services.

He is based in Hursley, England, following a two-year assignment to Somers, NY where he led the worldwide Linux marketing strategy for IBM Software Group. He has been involved with Linux since 1998, and prior to his U.S. assignment he led the European marketing activities for IBM Software on Linux.

Acer Launches 27-Inch All-In-One Android PC

The $1,099 monitor packs in a quad-core processor and a 2,560×1,440 pixel touch screen.

Ubuntu 13.10 vs. Fedora 20 Benchmarks

To complement the many Ubuntu 13.10 Linux benchmarks I have published on Phoronix since the “Saucy Salamander” premiere in October, I’ve started several Fedora 20 benchmarks since December. So far I’ve shared Fedora 19 vs. Fedora 20 benchmarks and Wayland OpenGL benchmarks, besides using Fedora 20 as the base platform for unrelated tests, and today there are some Ubuntu 13.10 vs. Fedora 20 performance benchmarks.

Samsung Chairman Urges Staff to Innovate ‘Non-Stop’ and Drop Hardware Focus

Samsung Electronics chairman Lee Kun-hee is calling for his company to forget its old business models and focus on a new wave of software innovation. As the Wall Street Journal reports, Lee used his annual new year speech to urge the company to “get rid of business models and strategies from five, ten years ago and hardware-focused ways.” To achieve that, he wants the company’s massive research and development centers to “work around the clock, non-stop.”

Lee’s speech comes just a day after Samsung’s market cap dropped by over $6 billion amid analyst forecasts that company profit growth will fall to under 10 percent this financial quarter. Samsung’s profits have grown spectacularly in recent years, and although the company is still…

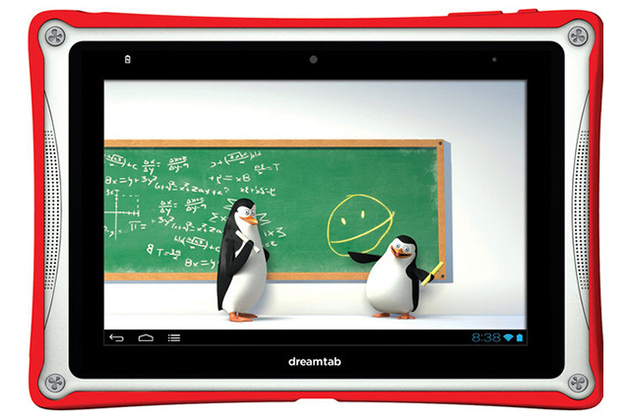

DreamWorks Will Release Its Own ‘Dreamtab’ Android Tablet This Spring

DreamWorks has partnered with Fuhu, the company that makes Toys R Us’ Nabi line of tablets, to produce an Android tablet for kids. The 8-inch Dreamtab will cost “under $300,” according to the New York Times, and will feature regularly updated original content based on DreamWorks characters. The content will be tailored for tablets, and will automatically arrive on Dreamtabs ready for consumption. Unlike many mobile games based on movies and TV, the content isn’t being created by a third party, but instead is being produced in-house.