As shown in our recent open-source GPU driver comparison, the RadeonSI Gallium3D driver still has a long way to go before it is competitive with the R600g driver and the Catalyst binary driver. Fortunately, one of the major 3D performance-boosting features (HyperZ) appeared on Tuesday in mailing list patch form…

Chrome Apps Could Start Coming to Android and iOS as Early as Next Month

In September, Google told us that Chrome Apps would come to mobile operating systems as well, and that packaged versions of web apps could even be sold in Google Play and the iOS App Store once the company had worked the kinks out. At the time, the company cautioned that such an idea was a ways away and that Google wanted to target Windows desktops first. But it seems that Chrome apps could arrive on Android and iOS sooner than we thought. Google has figured out a way to port apps to mobile using a new set of tools, and it hopes to release a beta version of those tools as soon as next month.

Several Monitoring Metrics for Virtualized Infrastructure

To build a virtualized cloud infrastructure, four major elements play a vital role in designing the virtual infrastructure from its physical counterpart: processors, memory, storage and network. Once the design is done, the life of the infrastructure begins, that is to say its daily operation. Is a virtual infrastructure administered day to day in the same way as a physical environment? Pooling many resources means taking other parameters into account, which we will look at here.

Processor performance monitoring

Although the concept of a virtual processor (vCPU) in a hypervisor (VMware or Microsoft Hyper-V) is close to the notion of a physical core, a virtual processor is much less powerful than a physical processor. The CPU load observed on a virtual server can therefore be noticeably higher than in a physical environment. This is nothing to worry about if the server has been sized to handle peak loads. However, keeping the same alert thresholds as before often no longer makes sense, and it is wise to adjust them.

Also, not all hypervisors within a server farm are necessarily identical. They may differ in their processors (generation, frequency, cache) or in other technical characteristics. This matters because a virtual server can be live-migrated from one hypervisor to another (vMotion in VMware, Live Migration in Microsoft Hyper-V). After a live migration, the processor utilization of the virtual server can therefore change.

Were the alert thresholds defined with these changes of context in mind?

The scheduler arbitrates access to the physical processors for each virtual server. A virtual server may not get access immediately: the more virtual servers there are, the more contention there is, and the resulting waiting time (latency) is important to consider. In addition, performance monitoring is not only about identifying how power is used, but also about detecting whether it is available. In extreme cases a virtual server can show a low CPU load while it is in fact waiting for a processor. The processor latency of each virtual server is therefore a good indicator of whether the available power is being used well. Do not assume that adding virtual processors to a server increases its power; the reality is more complex, and the effect is often the opposite of what was intended. Usually, you need to address this problem by analyzing the total number of virtual processors on each hypervisor.
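As a rough illustration of these points, the following Python sketch totals the virtual processors on one hypervisor and lists the virtual servers whose processor latency looks high. The `VirtualServer` fields, the sample figures and the 5 percent latency threshold are assumptions made for this example; they are not values taken from VMware or Hyper-V.

```python
from dataclasses import dataclass


@dataclass
class VirtualServer:
    name: str
    vcpus: int            # virtual processors assigned to the server
    cpu_load_pct: float   # CPU load as seen inside the virtual server
    cpu_wait_pct: float   # share of time spent waiting for a physical processor


def vcpu_ratio(servers, physical_cores):
    """Total virtual processors on the hypervisor per physical core."""
    return sum(s.vcpus for s in servers) / physical_cores


def waiting_servers(servers, wait_threshold_pct=5.0):
    """Names of servers whose processor latency exceeds the (assumed) threshold."""
    return [s.name for s in servers if s.cpu_wait_pct > wait_threshold_pct]


# Hypothetical sample data for one hypervisor with 8 physical cores.
servers = [
    VirtualServer("web", vcpus=4, cpu_load_pct=20.0, cpu_wait_pct=12.0),
    VirtualServer("db", vcpus=8, cpu_load_pct=70.0, cpu_wait_pct=2.0),
]

print(vcpu_ratio(servers, physical_cores=8))
print(waiting_servers(servers))
```

In this sample the "web" server shows a low CPU load but a high waiting time, which is the extreme case described above: the load figure alone would suggest that everything is fine.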

Monitoring memory utilization

When memory is shared between the virtual servers running on the same hypervisor, it is important to distinguish used from unused memory. Used memory is usually statically allocated to a virtual server, while unused memory is pooled. For this reason it is possible to run virtual servers whose total memory exceeds the memory capacity of the hypervisor. Over-provisioning memory in this way is not trivial: it is a form of risk taking, a bet that the servers will not all use their memory at the same time. There is no simple “this is right” or “this is wrong”; much depends on the design of the virtual servers. Monitoring, however, will keep over-provisioning from becoming a source of performance degradation.
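The bet behind memory over-provisioning can be put into numbers. The short Python sketch below compares the memory configured for the virtual servers with the capacity of the hypervisor; the function names and the sample sizes are assumptions chosen for illustration.

```python
def overcommit_ratio(configured_gb, host_capacity_gb):
    """Memory configured for all virtual servers relative to host capacity.

    A value above 1.0 means the hypervisor is over-provisioned.
    """
    return sum(configured_gb) / host_capacity_gb


def worst_case_shortfall_gb(configured_gb, host_capacity_gb):
    """Memory missing if every virtual server used all of its memory at once."""
    return max(0, sum(configured_gb) - host_capacity_gb)


# Hypothetical hypervisor with 64 GB of memory hosting four virtual servers.
configured = [16, 16, 32, 32]

print(overcommit_ratio(configured, host_capacity_gb=64))
print(worst_case_shortfall_gb(configured, host_capacity_gb=64))
```

Tracking the ratio over time, together with the memory each server actually uses, shows how close the hypervisor is to losing the bet.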

A first important consequence is that over-provisioning prevents all virtual servers from being started simultaneously. Indeed, a hypervisor verifies that it can allocate the entire memory of a virtual server before starting it. One option is to start the servers in a timed sequence, so that each one releases its unused memory in a specific order.

Slower memory access is the second consequence. VMware has implemented a memory reclamation method (called ballooning) that works with the virtual servers. It occurs when the hypervisor has to provide memory to a virtual server while the available capacity is insufficient. The hypervisor forces other virtual servers to release memory, and this freed memory is then distributed to servers as needed. The mechanism is not immediate: there is some latency between the request for memory and its actual allocation. This slows down memory access and undermines the performance of the servers.

Another consequence of ballooning is that it can completely change a server’s memory behavior. Linux systems are known to use available memory and keep it as cache after a process releases it (memory that does not appear to be free). The memory occupancy rate of such a server is normally stable and high. With ballooning, this rate will decrease and will no longer be stable (frequent releases and increases).

There are also cases where used memory is pooled between virtual servers. This happens when ballooning is not enough. Performance is then greatly degraded, so this is a situation to be avoided.

Are Cloud Operating Systems the Next Big Thing?

You may have heard the new buzz word “Cloud Operating System” a few times in the last few months. The term gained prominence when Cloudius Systems launched OSv at LinuxCon in September. Many people working on OSv – namely Glauber Costa, Pekka Enberg, Avi Kivity and Christoph Hellwig – are well known in the Linux community, due to their role in creating KVM. But the concept of a cloud operating system isn’t new. There are many cloud OSes from which to choose, including our own forthcoming MirageOS.

Contrary to popular belief, cloud operating systems do not threaten system security or reduce the importance of Linux in the cloud. And they provide many advantages over today’s application stack including portability, low latency and simplified management. In fact, they may just be the next big thing.

Cloud Operating Systems: A new incarnation of an older idea

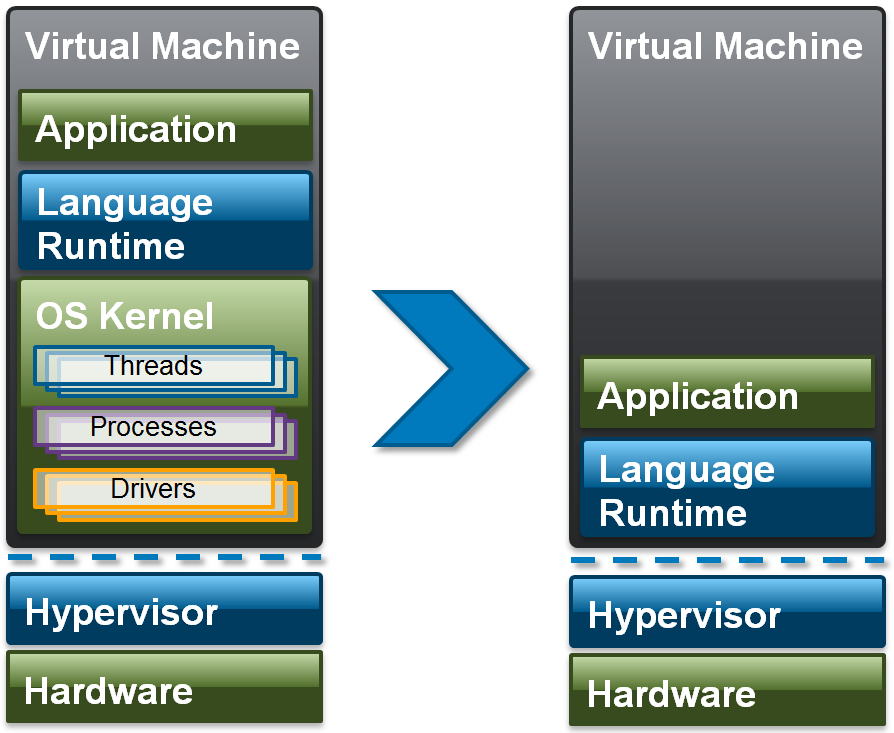

The approach taken by OSv (as well as others before OSv) revisits an old approach to operating system construction – the Library OS – and puts it in the context of cloud computing within a virtual machine. The basic premise of this approach is to simplify the application stack in the cloud significantly, removing layers of abstraction and offering the promise of less complexity, increased system security and simplified management of application stacks in the cloud. Figure 1 shows this approach in more detail.

As you can see, Cloud Operating Systems are designed to run a single application within a single Virtual Machine: thus much of the functionality in a general purpose operating system is simply removed. Of course this could be done directly on hardware, in effect requiring that every language runtime is ported to different hardware environments. This is an expensive proposition, which has limited adoption of the Cloud Operating System until now. However, hypervisors expose an idealized and tightly controlled hardware environment within the VM that can be used directly by a language runtime. Add to this the fact that your typical cloud application only needs access to disk and a network (graphics, sound, and other functionality is implemented on top of network protocols) and suddenly, the cost of porting a language runtime to a hypervisor is manageable.

As we are also only running one application per VM, the runtime and application can run in the same address space, simplifying things even more. In other words, the need for TLB flushes is removed and extra code and overhead eliminated. For some languages, such as OCaml and Haskell, the runtime and application can be statically linked, reducing footprint, dead code and increasing security even more. This single kernel and application image is being termed a “unikernel” to reflect its highly specialised nature.

Examples of Cloud Operating Systems

As stated earlier, OSv is not the first Cloud Operating System on the market. To the credit of OSv’s creators, it did put the technology on the map by creating lots of buzz. The table below shows what is available.

| Cloud OS | Targeted language | Available for |
|---|---|---|
|  | C++ | Xen |
|  | C | Windows “picoprocess” |
|  | Erlang | Xen |
|  | Haskell | Xen |
|  | Java | Xen |
|  | C | Xen |
|  | OCaml | Xen, kFreeBSD, POSIX, WWW/js |
|  | C | NetBSD, Xen, Linux kernel, POSIX |
|  | Java | KVM, Xen |

Of course, many of these projects are still under development and it will come down to how easy it will be to port existing applications to a Cloud Operating System for a specific language. This depends to a large degree on how rich and well defined language runtimes are.

Are Cloud Operating Systems bad for Linux and security?

When OSv was launched in September, the two overwhelming reactions by the Linux community were that OSv (and by extension Cloud Operating Systems) are bad for Linux and Security.

Let’s look at Linux first: if you run a Cloud Operating System on top of LXC, KVM or Xen you are still running Linux. In some sense, the Cloud Operating System approach is really only enabled by the wide hardware support that Linux provides and the dominance of Linux-based technologies in cloud computing. Admittedly, fewer Linux Kernels may be running in guests, but is this really an issue?

What about security? How secure a system is depends on two factors.

1. The amount of code in the system: more code => more potential exploits => less security. On the flip side: less code => fewer potential exploits => more security. Thus removing layers of code is a good thing from a security perspective.

2. The damage an exploit can do: in a typical cloud application, an attack always starts with input that would either lead to an attack of the underlying OS or application and will then try and get to some user data, take over your OS, another application or other resources in the system. In the Cloud Operating System case, we only have one application and a language runtime. In a nutshell, an attacker would not gain more than access to the already running application. As the language runtime for a Cloud Operating System is smaller than in the case of a general purpose OS, an attack vector through the language runtime is harder and less likely.

You may argue that such an attacker could then jump into another VM. However, that possibility exists today: if you trust an application stack running in a public cloud today, you would actually be better off in the new model.

So what about the Xen Project?

As I work for the Xen Project, I wanted to talk a little bit about Xen and Cloud Operating Systems. The first observation is that the majority of Cloud Operating Systems run on Xen. There are two primary reasons for this. First, Xen’s footprint in the Cloud: with AWS, Rackspace Public Cloud and many others running Xen, supporting Xen first makes sense. The second reason is technical: Xen Paravirtualization provides a very simple and idealized interface for I/O to the guest. In contrast, the KVM VIRTIO interface looks pretty much like the underlying hardware. As a consequence, it is easier to port a language runtime to Xen. It is also worth noting that Xen has also been using operating systems within its core functionality to implement advanced security features. It is possible to run device drivers, QEMU and other services within their own VM on top of MiniOS. Conceptually the approach taken by Xen to increase security is very similar to that used by Cloud Operating Systems. If you want to know more, watch George Dunlap’s LinuxCon presentation Securing Your Xen-Based Cloud.

The Xen Project has also been developing its own Cloud Operating System called MirageOS for some time. MirageOS will have its first release shortly and is worth watching out for.

Are Cloud Operating Systems going to be the next Big Thing?

Certainly ErlangOnXen, HalVM, MirageOS and OSv show great potential. Due to their very small footprint and low latency, Cloud Operating Systems are also particularly suited to run on microservers. There is also great potential for OCaml (via MirageOS) and other languages that compile to non-x86 code such as ARM or even JavaScript, since the same libraries can work on embedded systems as well as web servers and the cloud. Portability of existing applications is less of an issue, as these can be written straight away in a portable way.

We are also seeing a new breed of Cloud Operating Systems which target very specific use cases. An example is ClickOS, which is designed to make the development of middlebox appliances such as NATs, Firewalls and SDN appliances very easy. This is an interesting development, which gels well with an increased interest in virtualization outside server and cloud. Potential applications are starting to emerge in automotive, set-top boxes, mobile, networking and many embedded use cases. The Cloud OS approach has big potential for these types of applications (although for embedded use-cases it is probably more accurate to talk about Library Operating Systems).

Remaining challenges

One technical challenge, which could be resolved through cross-project collaboration, is to remove code duplication for bootloading (across open source hypervisors as well as CPU architectures) and possibly some other areas.

The biggest challenge for Cloud Operating Systems, however, is that today most cloud providers do not provide support for very small, high density VM deployments. Two things need to happen to resolve this. First, hypervisors need to be able to run thousands of VMs on large hosts: this is being addressed for Xen by increasing the number of Virtual Machines that can be run on a host (see David Vrabel’s talk on Unlimited Event Channels). Second, cloud billing resolution would need to adapt to allow charging for much smaller Virtual Machines that run for very short times (less RAM, less disk space, VM lifespan measured in seconds rather than hours). Whether this will happen depends on whether it makes economic sense for cloud providers. On the other hand, being able to maximize resource utilization beyond where it is today may be a very attractive option for private clouds.

Firefox OS Phones Going Higher-End, Entering New Markets

There have been some interesting developments surrounding Mozilla’s Firefox OS platform and smartphones built on it. Alcatel had already delivered its popular OneTouch Fire phone based on the mobile operating system in countries ranging from Germany to Hungary and Poland. Now, the OneTouch Fire is going on sale at low prices in Italy via Telecom Italia. Meanwhile, Geeksphone has been discussing a high-end Firefox OS phone called Revolution that will purportedly run both Mozilla’s platform and Android (though users will need to choose one platform).

You can check out the Geeksphone Revolution homepage here. It’s light on details, but Geeksphone was among the first makers of Firefox OS phones and has made Android phones as well. CNET has reported on the company’s plans to deliver phones with high-end architecture giving users the choice to run Android or Firefox OS.

CentOS 6.5 Desktop Installation Guide with Screenshots

CentOS 6.5 released

Following the release of RHEL 6.5, CentOS 6.5 arrived on 1st Dec and it’s time to play with it. Those who want to update their existing 6.4 systems to 6.5 can simply use the “yum update” command and all the magic will be done.

CentOS 6.5 has received some package updates as well as new features. Check out the release notes for detailed information.

Major updates

The Precision Time Protocol – previously a technology preview – is now fully supported. The following drivers support network time stamping: bnx2x, tg3, e1000e, igb, ixgbe, and sfc.

OpenSSL has been updated to version 1.0.1.

OpenSSL and NSS now support TLS 1.1 and 1.2.

KVM received various enhancements. These include improved read-only support of VMDK- and VHDX-Files, CPU hot plugging and updated virt-v2v-/virt-p2v-conversion tools.

Hyper-V and VMware drivers have been updated.

Evolution has been updated to 2.32 and LibreOffice to 4.0.4.

Download

In this post we shall be installing it on the desktop. Head to either of the following URLs:

http://isoredirect.centos.org/centos-6/6.5/isos/

http://mirror.centos.org/centos/6.5/isos/

Select your machine architecture and you will be presented with a list of mirrors. Pick any mirror, then get either the torrent file or the direct ISO download link. There are multiple download options available like LiveCD, LiveDVD, Dvd1+2, Minimal and…

Read full post here

CentOS 6.5 desktop installation guide with screenshots

OpenDaylight Developer Spotlight: Su-Hun Yun

OpenDaylight is an open source project and open to all. Developers can contribute at the individual level just like any other open source project. This blog series highlights the people who are collaborating to create the future of Software-Defined Networking (SDN) and Network Functions Virtualization (NFV).

Su-Hun Yun is a Senior Manager at NEC Corporation of America and leads business development for NEC’s Software-Defined Networking (SDN) products and the creation of an OpenFlow-based SDN ecosystem. As a member of the original team working on the NEC ProgrammableFlow Networking Suite product line, he was instrumental in launching the world’s first production-ready SDN product in 2011. He also has more than 20 years of experience in carrier and enterprise networking.

How did you get involved with OpenDaylight? What is your background?

I have been involved in OpenDaylight from the founding phase, and have been active in OpenFlow since 2009, when I started the OpenFlow controller project in NEC. NEC has been a supporter of SDN research at Stanford from the beginning and has been a leader in the development of OpenFlow and in the SDN industry itself, shipping the first generally available SDN product, ProgrammableFlow® Networking Suite, in May of 2011.

I recognize the need to have an open source community for development of the SDN controller because of customers’ concerns regarding vendor lock-in and closed solutions. This support of open source is one of the reasons NEC became a founding member of the OpenDaylight community.

Read more at OpenDaylight Blog

It’s Now Even Easier Trying Out KDE Frameworks 5

Since the advent of Project Neon it has been very easy for Kubuntu Linux users to try out KDE Frameworks 5 and Plasma 2. However, it’s now even easier…

Google Cloud Compute Engine Is Now Officially Live

Although it has been in test mode for a year and already serves well-known players including Red Hat and Snapchat, Google has now officially rolled out its IaaS (infrastructure-as-a-service) Google Compute Engine (GCE) as a commercial service that will compete with Amazon Web Services (AWS) and other platforms. There are new lower prices for using the GCE platform and Service Level Agreements (SLAs) guarantee close to 100 percent availability.

There are some unique aspects to Google Compute Engine, including the fact that Google offers pay-as-you-go pricing billed in 10-minute increments. Google has lowered the price for standard instances by 10 percent. As an example, the price of a standard one core instance is now $0.104 per hour. There are also new 16-core instances for heavy computational needs.
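To make the pricing concrete, here is a small Python sketch of what a bill could look like under these figures. The hourly price and the 10-minute increment come from the paragraph above; rounding usage up to the next full increment is an assumption made for this example, and the function names are hypothetical. Google's actual billing rules may differ.

```python
import math

PRICE_PER_HOUR_USD = 0.104   # standard one-core instance, as quoted above
BILLING_INCREMENT_MIN = 10   # billing increment in minutes, as quoted above


def billed_minutes(minutes_used):
    """Round usage up to the next full increment (assumed rounding rule)."""
    increments = math.ceil(minutes_used / BILLING_INCREMENT_MIN)
    return increments * BILLING_INCREMENT_MIN


def instance_cost_usd(minutes_used):
    """Cost of one standard one-core instance for the given usage."""
    return billed_minutes(minutes_used) / 60 * PRICE_PER_HOUR_USD


# A 25-minute job is billed as 30 minutes under the assumed rounding rule.
print(billed_minutes(25))
print(round(instance_cost_usd(25), 3))
```

Under these assumptions, short jobs pay for at most nine unused minutes, which is where fine-grained increments matter compared with billing by the full hour.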

Linux 3.9 Through Early Linux 3.13 Kernel Benchmarks

While I’m waiting for development activity on the Linux 3.13 kernel to settle down before delivering comprehensive benchmarks of its performance changes across the various subsystems, up this morning are some early benchmarks of the Linux 3.13 Git kernel, compared against every major release going back to Linux 3.9.