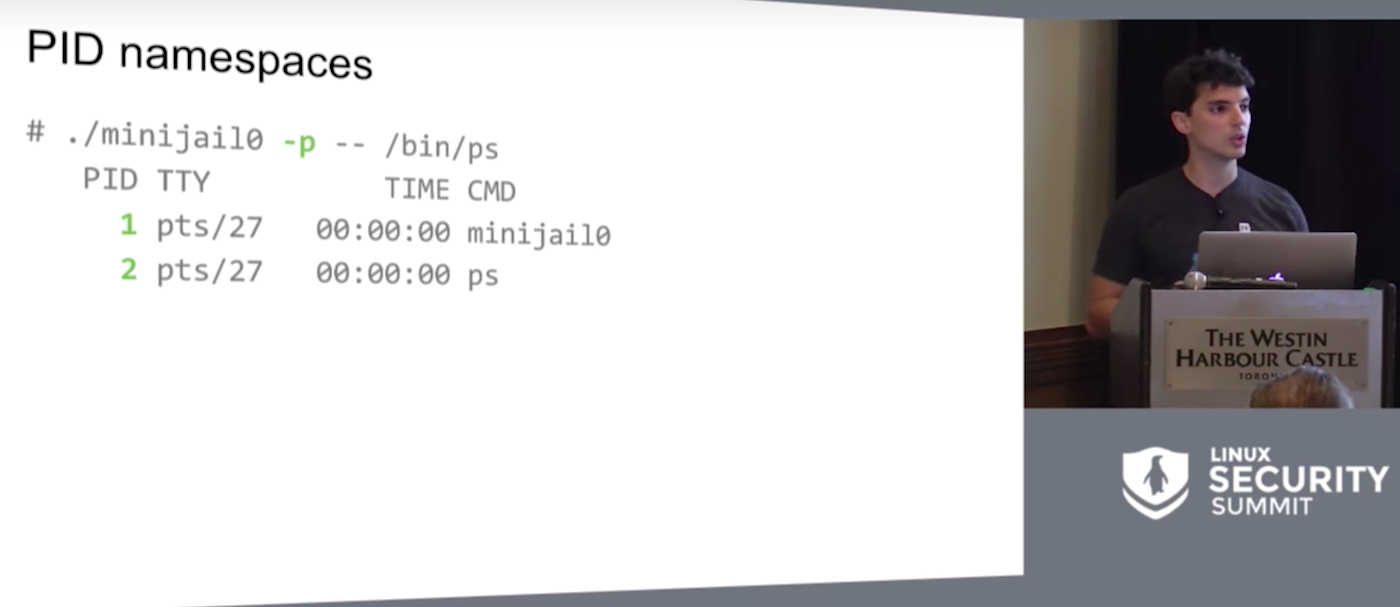

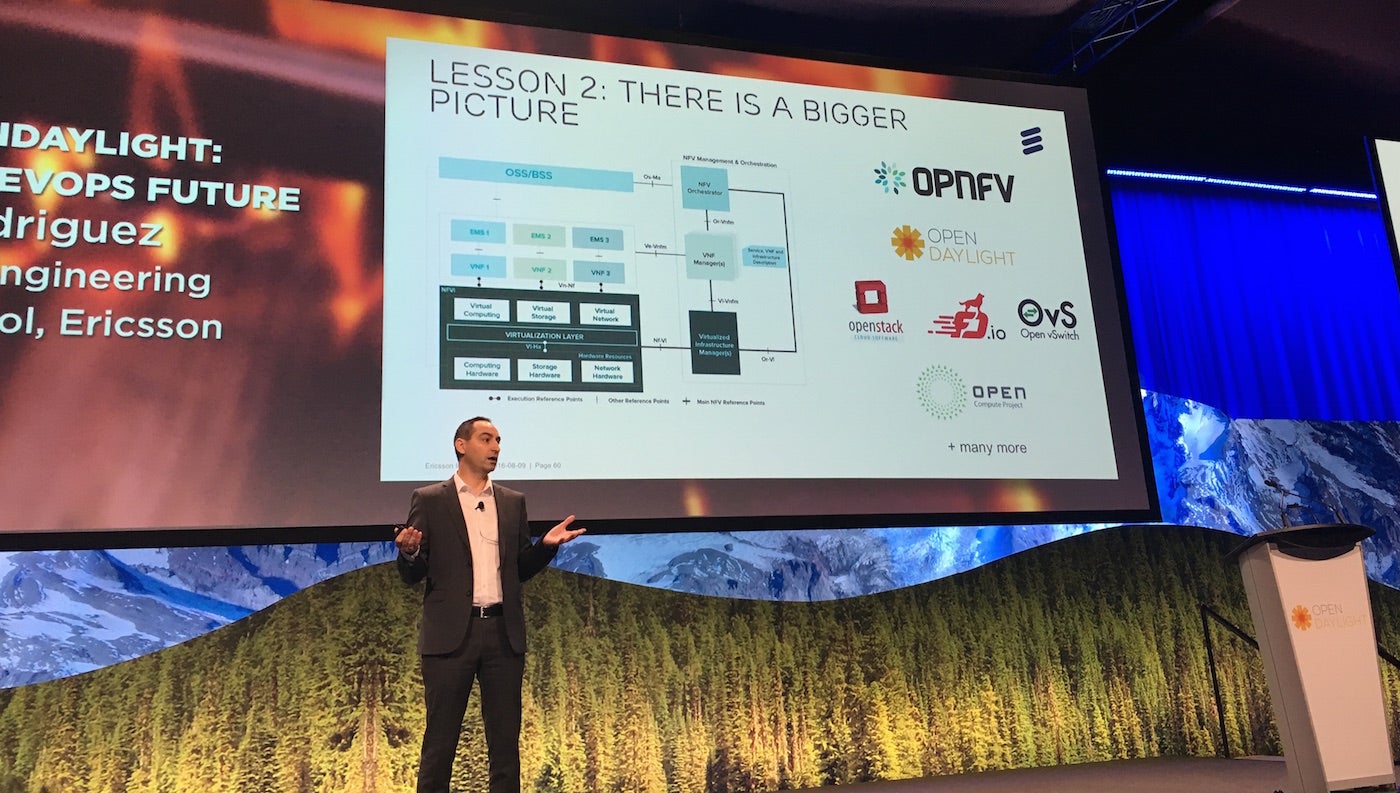

Google’s Minijail sandboxing tool could be used by developers and sysadmins to run untrusted programs safely for debugging and security checks, according to Google Software Engineer Jorge Lucangeli Obes, who spoke last month at the Linux Security Summit. Obes is the platform security lead for Brillo, Google’s Android-based operating system for Internet-connected devices.

Minijail was designed for sandboxing on Chrome OS and Android, to handle “anything that the Linux kernels grew.” Obes shared that Google teams use it on the server side, for build farms, for fuzzing, and pretty much everywhere.

Since “essentially one bug separates you and any random attacker,” Google wanted to create a reliable means to swiftly identify problems with privileges and exploits in app development and easily enable developers to “do the right thing.”

The tool is designed to assist admins who struggle to decide what permissions their software actually needs, and developers who are vexed by trying to second-guess which environment the software is going to run in. In both cases, sandboxing and privilege dropping tend to be a hit-or-miss affair.

Even when developers use the privilege-dropping mechanisms provided by the Linux kernel, things sometimes go awry because of the numerous pitfalls along that path. One common example Obes cited is writing a switch-user function that drops root and then forgetting to check the result of the setuid call afterwards.

In this scenario, the attacker causes the setuid call to fail, which leaves the program running with root privileges. The attacker can then exploit another bug in the process with those privileges intact. The best way to stop this kind of exploit is to abort the program whenever a setuid call fails.
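The check described above can be sketched in a few lines of C. This is a minimal illustration, not Minijail's own code: the helper names `must_succeed` and `drop_privileges_or_die` are hypothetical, and the ordering (supplementary groups, then group ID, then user ID) is the conventional one rather than something stated in the talk.

```c
#define _DEFAULT_SOURCE /* exposes setgroups() on glibc */
#include <grp.h>
#include <stdio.h>
#include <stdlib.h>
#include <sys/types.h>
#include <unistd.h>

/* Hypothetical helper: abort immediately if a privilege call failed. */
void must_succeed(int rc, const char *what)
{
    if (rc != 0) {
        perror(what);
        abort(); /* never keep running with privileges we meant to drop */
    }
}

/* Hypothetical helper: switch to uid/gid and refuse to continue on failure. */
void drop_privileges_or_die(uid_t uid, gid_t gid)
{
    /* Only root may clear supplementary groups, so skip this otherwise. */
    if (geteuid() == 0)
        must_succeed(setgroups(0, NULL), "setgroups");

    /* Change the group first: after setuid() we may lack permission to. */
    must_succeed(setgid(gid), "setgid");
    must_succeed(setuid(uid), "setuid");

    /* Extra paranoia: regaining root must now be impossible. */
    if (uid != 0 && setuid(0) == 0) {
        fprintf(stderr, "privilege drop did not stick\n");
        abort();
    }
}
```

A caller would invoke `drop_privileges_or_die(uid, gid)` once, right after finishing whatever setup genuinely requires root. Because every return value is checked, a forced setuid failure ends the process instead of leaving it running as root.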

Find and Fix

While security pros may be quick to scoff at such a rudimentary mistake, it’s often the simplest oversights that lead to the biggest security problems. Rather than judge one another, Obes said, remember that the goal is to find and fix problems in the software. Although there will always be bugs, eradicating as many as possible, from the simple to the sophisticated, is always the goal.

Minijail first identifies and flags roots where problems exist. Developers do not need to understand all the intricacies of dropping privileges in the Linux kernel, because the tool provides a single library for privilege-dropping code.

“By using Minijail, we turned the 15+ lines of sign-in capabilities to one or three, because of formatting,” he said. The system never fails to check the results, such as the result of a setuid call, and it also provides for unit and integration testing to ensure the app always works.

Eventually the team realized that Minijail was roughly 85 percent of the way to building real containers so they took the tool the rest of the way. “Minijail is essentially underlying this new technology that Google added to Chrome OS which allows you to run Android applications, natively with no emulation or distortion,” he said. “It’s just an Android system running inside a container.” Thus, Minijail evolved to be both a sandboxing and containment helper.

It accomplishes this primarily by withholding some root permissions through the use of capabilities, which partition root's privileges into separate units. In this way, developers can “grant specific subsets of that functionality directly to a process without granting the whole function to do that process.”

Obes cited the Bluetooth daemon, bluetoothd, as an example: it needs permission to configure a network interface. “That shouldn’t give it permissions to, for example, reboot the system or mount things,” he explained.
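To make the capability idea concrete, here is a small sketch of a capability bitmask for a daemon like the one in the example. It assumes a Linux system with the kernel's `<linux/capability.h>` header. The function names are hypothetical, and the choice of `CAP_NET_ADMIN` as the only capability granted is an illustrative assumption, not a statement of what bluetoothd is actually given on Chrome OS or Android.

```c
#include <linux/capability.h> /* CAP_NET_ADMIN, CAP_SYS_BOOT, CAP_SYS_ADMIN */
#include <stdint.h>

/* One bit per capability number. */
uint64_t cap_bit(unsigned cap)
{
    return UINT64_C(1) << cap;
}

/* Returns nonzero if the mask grants the given capability. */
int mask_allows(uint64_t mask, unsigned cap)
{
    return (mask & cap_bit(cap)) != 0;
}

/* Hypothetical policy: network configuration only, nothing else. */
uint64_t network_daemon_caps(void)
{
    return cap_bit(CAP_NET_ADMIN);
}
```

With this mask, `mask_allows(network_daemon_caps(), CAP_NET_ADMIN)` is true, while `CAP_SYS_BOOT` (rebooting) and `CAP_SYS_ADMIN` (which covers mounting) are both refused. A sandboxing helper would then apply such a mask to the process before it starts handling untrusted input.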
