The Internet of Things market is fragmented, amorphous, and continually changing, and its very nature requires more than the usual attention to interoperability. It’s not surprising, then, that open source has done quite well here: customers are hesitant to bet their IoT future on a proprietary platform that may fade or become difficult to customize and interconnect.

In this second entry in a four-part series about open source IoT, I have compiled a guide to major open source software projects, focusing on open source tech for home and industrial automation. I am omitting more vertical projects related to IoT, such as Automotive Grade Linux and Dronecode, and I’m also skipping open source, IoT-oriented OS distributions, such as Brillo, Contiki, Mbed, OpenWrt, Ostro, Riot, Ubuntu Snappy Core, UCLinux, and Zephyr. Next week, I’ll cover hardware projects — from smart home hubs to IoT-focused hacker boards — and in the final part of the series, I’ll look at distros and the future of IoT.

The list of 21 projects below includes two major Linux Foundation-hosted projects, AllSeen (AllJoyn) and the OCF (IoTivity), along with many more end-to-end frameworks that link IoT sensor endpoints with gateways and cloud services. I have also included a smattering of smaller projects that address particular segments of the IoT ecosystem. We could list more, but it’s increasingly difficult to determine the difference between IoT software and just plain software. From the embedded world to the cloud, more and more projects have an IoT story to tell.

All 21 projects claim to be open source, although it’s beyond the scope of this article to ensure they fully live up to those claims. They all run Linux on at least one component in the ecosystem, and most support it throughout, from desktop development to cloud/server, gateway, and sensor endpoint components. The vast majority have components that can run on Linux hacker boards like the Raspberry Pi and BeagleBone, and many support Arduino.

There is still plenty of proprietary technology in IoT, especially among the top-down, enterprise platforms. Yet, even some of these offer partially open access. Verizon’s ThingSpace, for example, which targets 4G smart city applications, has a free development API that supports hacker boards, even if the core platform itself is proprietary. Somewhat similarly, Amazon’s AWS IoT suite has a partially open device SDK and open source starter kits.

Other major proprietary platforms include Apple’s HomeKit and Microsoft Azure IoT Suite. Then there’s the 230-member Thread Group, which oversees the peer-to-peer Thread networking protocol based on 6LoWPAN. Launched by Nest, which is owned by Alphabet, the umbrella organization over Google, the Thread Group does not offer a comprehensive open source framework like AllSeen and the OCF. However, it’s associated with Brillo, as well as the Weave IoT communication protocol. In May, Nest launched an open source version of Thread called OpenThread (see below).

Here are 21 open source software projects for the Internet of Things:

AllSeen Alliance (AllJoyn) — The AllJoyn interoperability framework overseen by the AllSeen Alliance (ASA) is probably the most widely adopted open source IoT platform around.

Bug Labs dweet and freeboard — Bug Labs started out making modular, Linux-based Bug hardware gizmos, but it long ago morphed into a hardware-agnostic IoT platform for the enterprise. Bug Labs offers a “dweet” messaging and alerts platform and a “freeboard” IoT design app. Dweet helps publish and describe data using a HAPI web API and JSON. Freeboard is a drag-and-drop tool for designing IoT dashboards and visualizations.

DeviceHive — DataArt’s AllJoyn-based device management platform runs on cloud services such as Azure, AWS, Apache Mesos, and OpenStack. DeviceHive focuses on Big Data analytics using tools like ElasticSearch, Apache Spark, Cassandra, and Kafka. There’s also a gateway component that runs on any device that runs Ubuntu Snappy Core. The modular gateway software interacts with DeviceHive cloud software and IoT protocols, and is deployed as a Snappy Core service.

DSA — Distributed Services Architecture facilitates decentralized device inter-communication, logic, and applications. The DSA project is building a library of Distributed Service Links (DSLinks), which allow protocol translation and data integration with third party sources. DSA offers a scalable network topology consisting of multiple DSLinks running on IoT edge devices connected to a tiered hierarchy of brokers.

Eclipse IoT (Kura) — The Eclipse Foundation’s IoT efforts are built around its Java/OSGi-based Kura API container and aggregation platform for M2M applications running on service gateways. Kura, which is based on Eurotech’s Everywhere Cloud IoT framework, is often integrated with Apache Camel, a Java-based rules-based routing and mediation engine. Eclipse IoT sub-projects include the Paho messaging protocol framework, the Mosquitto MQTT stack for lightweight servers, and the Eclipse SmartHome framework. There’s also a Java-based implementation of Constrained Application Protocol (CoAP) called Californium, among others.
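To give a feel for the MQTT messaging that Paho clients and the Mosquitto broker handle, here is a minimal sketch of how a broker decides whether a published topic matches a subscription filter. The function name `topic_matches` is my own and is not taken from Paho or Mosquitto. It follows the standard MQTT wildcard rules (`+` for one level, `#` for the remaining levels) and deliberately ignores the special handling of topics that begin with `$`.

```python
def topic_matches(topic_filter: str, topic: str) -> bool:
    """Return True if an MQTT topic name matches a subscription filter.

    Hypothetical helper for illustration; not part of any Eclipse library.
    """
    filter_levels = topic_filter.split("/")
    topic_levels = topic.split("/")

    for i, level in enumerate(filter_levels):
        if level == "#":
            # Multi-level wildcard: only valid as the last level, and it
            # matches everything that remains (including the parent level).
            return i == len(filter_levels) - 1
        if i >= len(topic_levels):
            # The filter is longer than the topic.
            return False
        if level != "+" and level != topic_levels[i]:
            # A literal level must match exactly; "+" matches any one level.
            return False

    # Without a trailing "#", both must have the same number of levels.
    return len(filter_levels) == len(topic_levels)
```

A gateway subscribed to `sensors/+/temperature` would receive `sensors/kitchen/temperature` but not `sensors/kitchen/humidity`, while `sensors/#` would receive both.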

Kaa — The CyberVision-backed Kaa project offers a scalable, end-to-end IoT framework designed for large cloud-connected IoT networks. The platform includes a REST-enabled server function for services, analytics, and data management, typically deployed as a cluster of nodes coordinated by Apache Zookeeper. Kaa’s endpoint SDKs, which support Java, C++ and C development, handle client-server communications, authentication, encryption, persistence, and data marshalling. The SDKs contain server-specific, GUI-enabled schemas translated into IoT object bindings. The schemas govern semantics and abstract the functions of a diverse group of devices.

Macchina.io — Macchina.io provides a “web-enabled, modular and extensible” JavaScript and C++ runtime environment for developing IoT gateway applications running on Linux hacker boards. Macchina.io supports a wide variety of sensors and connection technologies including Tinkerforge bricklets, XBee ZB sensors, GPS/GNSS receivers, serial and GPIO connected devices, and accelerometers.

GE Predix — GE’s PaaS (Platform as a Service) software for industrial IoT is based on Cloud Foundry. It adds asset management, device security, and real-time, predictive analytics, and supports heterogeneous data acquisition, storage, and access. GE Predix, which GE developed for its own operations, has become one of the most successful of the enterprise IoT platforms, with about $6 billion in revenues. GE recently partnered with HPE, which will integrate Predix within its own services.

Home Assistant — This up-and-coming grassroots project offers a Python-oriented approach to home automation. See our recent profile on Home Assistant.

Mainspring — M2MLabs’ Java-based framework is aimed at M2M communications in applications such as remote monitoring, fleet management, and smart grids. Like many IoT frameworks, Mainspring relies heavily on a REST web service, and offers device configuration and modeling tools.

Node-RED — This visual wiring tool for Node.js developers features a browser-based flow editor for designing flows among IoT nodes. The nodes can then be quickly deployed as runtimes, and stored and shared using JSON. Endpoints can run on Linux hacker boards, and cloud support includes Docker, IBM Bluemix, AWS, and Azure.

Open Connectivity Foundation (IoTivity) — This amalgamation of the Intel- and Samsung-backed Open Interconnect Consortium (OIC) and the UPnP Forum is working hard to become the leading open source standards group for IoT. The OCF’s open source IoTivity project builds on a RESTful architecture, JSON, and CoAP.
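CoAP keeps its overhead low with a fixed four-byte binary header, which is part of why it suits constrained endpoints. The sketch below packs that header as laid out in the CoAP specification (RFC 7252): a 2-bit version, a 2-bit message type, a 4-bit token length, an 8-bit code, and a 16-bit message ID. The function and constant names are my own and do not come from IoTivity, and a real request would also carry options such as the URI path, which this example leaves out.

```python
import struct

COAP_VERSION = 1        # the only version defined by RFC 7252
TYPE_CONFIRMABLE = 0    # message type CON
CODE_GET = 0x01         # request code 0.01 (class 0, detail 1)


def build_coap_header(msg_type: int, code: int, message_id: int,
                      token: bytes = b"") -> bytes:
    """Pack the fixed CoAP header followed by the token.

    Hypothetical helper for illustration; options and payload are omitted.
    """
    if len(token) > 8:
        raise ValueError("CoAP tokens are at most 8 bytes long")
    # First byte: version (2 bits) | type (2 bits) | token length (4 bits).
    first_byte = (COAP_VERSION << 6) | ((msg_type & 0x3) << 4) | len(token)
    # Network byte order: one byte, one byte, then a 16-bit message ID.
    return struct.pack("!BBH", first_byte, code, message_id) + token


header = build_coap_header(TYPE_CONFIRMABLE, CODE_GET, 0x1234, b"\xab")
print(header.hex())  # 41011234ab
```

The whole confirmable GET header, token included, fits in five bytes here.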

openHAB — This open source smart home framework can run on any device capable of running a JVM. The modular stack abstracts all IoT technologies and components into “items,” and offers rules, scripts, and support for persistence — the ability to store device states over time. OpenHAB offers a variety of web-based UIs, and is supported by major Linux hacker boards.

OpenIoT — The mostly Java-based OpenIoT middleware aims to facilitate open, large-scale IoT applications using a utility cloud computing delivery model. The platform includes sensor and sensor network middleware, as well as ontologies, semantic models, and annotations for representing IoT objects.

OpenRemote — Designed for home and building automation, OpenRemote is notable for its wide-ranging support for smart devices and networking specs such as 1-Wire, EnOcean, xPL, Insteon, and X10. Rules, scripts, and events are all supported, and there are cloud-based tools for UI design, installation, and configuration, as well as for remote updates and diagnostics.

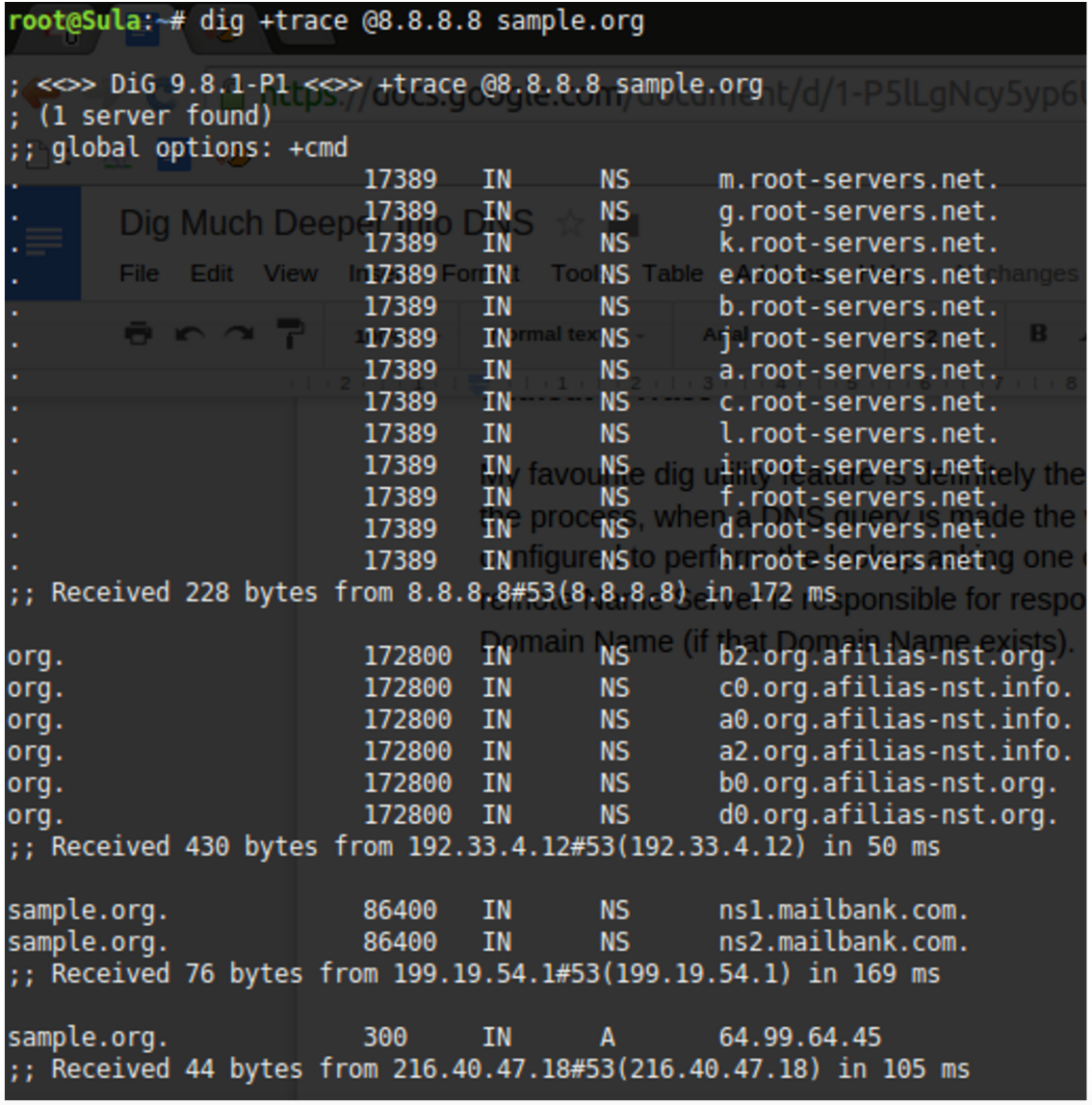

OpenThread — Nest’s recent open source spin-off of the 6LoWPAN-based Thread wireless networking standard for IoT is also backed by ARM, Microchip’s Atmel, Dialog, Qualcomm, and TI. OpenThread implements all Thread networking layers, as well as Thread’s End Device, Router, Leader, and Border Router roles.

Physical Web/Eddystone — Google’s Physical Web enables Bluetooth Low Energy (BLE) beacons to transmit URLs to your smartphone. It’s optimized for Google’s Eddystone BLE beacon, which provides an open alternative to Apple’s iBeacon. The idea is that pedestrians can interact with any supporting BLE-enabled device such as parking meters, signage, or retail products.
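A BLE advertisement has very little room, so an Eddystone-URL frame compresses the address it broadcasts. As a rough sketch of that encoding, the function below builds the frame bytes from a URL using the scheme prefixes and expansion codes published in the Eddystone-URL specification. The function name and the default TX power value are my own choices, ASCII-only URLs are assumed, and the surrounding BLE advertising packet is not shown.

```python
# Scheme prefix codes from the Eddystone-URL specification.
SCHEME_PREFIXES = {
    "http://www.": 0x00,
    "https://www.": 0x01,
    "http://": 0x02,
    "https://": 0x03,
}

# Expansion codes 0x00..0x0D; list index equals the code.
# The variants with a trailing slash come first so they are tried first.
EXPANSIONS = [".com/", ".org/", ".edu/", ".net/", ".info/", ".biz/", ".gov/",
              ".com", ".org", ".edu", ".net", ".info", ".biz", ".gov"]

FRAME_TYPE_URL = 0x10
MAX_ENCODED_URL_LEN = 17


def encode_eddystone_url(url: str, tx_power: int = -20) -> bytes:
    """Return Eddystone-URL frame bytes (hypothetical helper, ASCII URLs only)."""
    # Try the longest prefixes first so "http://www." wins over "http://".
    for prefix in sorted(SCHEME_PREFIXES, key=len, reverse=True):
        if url.startswith(prefix):
            scheme_byte = SCHEME_PREFIXES[prefix]
            rest = url[len(prefix):]
            break
    else:
        raise ValueError("URL must start with http:// or https://")

    encoded = bytearray()
    i = 0
    while i < len(rest):
        for code, text in enumerate(EXPANSIONS):
            if rest.startswith(text, i):
                encoded.append(code)      # one byte replaces the whole suffix
                i += len(text)
                break
        else:
            encoded.append(ord(rest[i]))  # plain character, copied as-is
            i += 1

    if len(encoded) > MAX_ENCODED_URL_LEN:
        raise ValueError("encoded URL does not fit in one Eddystone-URL frame")

    # TX power is a signed byte, so store it in two's complement form.
    return bytes([FRAME_TYPE_URL, tx_power & 0xFF, scheme_byte]) + bytes(encoded)
```

With this encoding, `https://www.example.com/` shrinks to the seven characters of `example` plus one prefix byte and one expansion byte, which is why beacons typically advertise short or shortened URLs.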

PlatformIO — The Python-based PlatformIO comprises an IDE, a project generator, and a web-based library manager, and is designed for accessing data from microcontroller-based Arduino and ARM Mbed-based endpoints. It offers preconfigured settings for more than 200 boards and integrates with Eclipse, Qt Creator, and other IDEs.

The Thing System — This Node.js based smart home “steward” software claims to support true automation rather than simple notifications. Its self-learning AI software can handle many collaborative M2M actions without requiring human intervention. The lack of a cloud component provides greater security, privacy, and control.

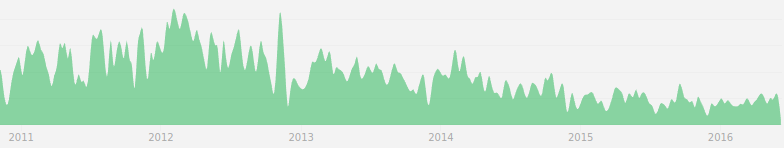

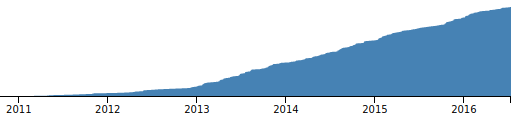

ThingSpeak — The five-year-old ThingSpeak project focuses on sensor logging, location tracking, triggers and alerts, and analysis. ThingSpeak users can tap a version of MATLAB for IoT analysis and visualizations without buying a license from MathWorks.

Zetta — Zetta is a server-oriented IoT platform built around Node.js, REST, WebSockets, and a flow-based “reactive programming” development philosophy linked with Siren hypermedia APIs. Devices are abstracted as REST APIs and connected with cloud services that include visualization tools and support for machine analytics tools like Splunk. The platform connects endpoints such as Linux and Arduino hacker boards with cloud platforms such as Heroku in order to create geo-distributed networks.
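To illustrate what abstracting a device as a hypermedia REST resource can look like, here is a small sketch that describes a device as a Siren entity, with its state under `properties`, its available transitions under `actions`, and a `self` link. The top-level keys follow the Siren specification, but the function name, the URL layout, and the field names inside each action are assumptions of mine and should not be read as Zetta's actual output.

```python
import json


def device_to_siren(device_id, device_type, state, transitions, base_url):
    """Describe a device as a Siren entity (hypothetical layout for illustration)."""
    self_href = f"{base_url}/devices/{device_id}"  # assumed URL scheme
    return {
        "class": ["device", device_type],
        "properties": {"id": device_id, "type": device_type, "state": state},
        # One action per transition the device allows in its current state.
        "actions": [
            {
                "name": name,
                "method": "POST",
                "href": self_href,
                "fields": [{"name": "action", "type": "hidden", "value": name}],
            }
            for name in transitions
        ],
        "links": [{"rel": ["self"], "href": self_href}],
    }


led = device_to_siren("led-1", "led", "off", ["turn-on"], "http://localhost:1337")
print(json.dumps(led, indent=2))
```

A client that understands Siren can discover from the response itself that the LED is off and that `turn-on` is the only action currently available, without hard-coding the device's API.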

Read the previous article in this series, Who Needs the Internet of Things?

Read the next article in this series, Linux and Open Source Hardware for IoT
