This is the third and last tutorial in our series about creating CI/CD pipelines with Docker containers. Part one focused on how to use Docker Hub to automatically build your application images, and part two used the online Travis CI platform to automate the process of running the unit tests.

Now that our little Flask application is tested by Travis, let’s see how we can have Travis build the Docker image and push it to the hub, instead of using the autobuild feature. Then, we will see how to deploy the latest built image automatically every time some code is added to the project.

I will assume in this tutorial that you already have a remote host set up and that you have access to it through ssh. To create the examples, I have been using a host running Ubuntu, but it should be very easy to adapt the following to any popular Linux distribution.

Requirements

First, you will need the travis command-line client installed on your workstation. Make sure a recent version of Ruby is installed, then run:

sudo gem install travis

Log in with your GitHub account by running:

travis login --org

On your remote host, make sure that Docker Engine and docker-compose are installed properly. You can also install Compose locally; this will be useful if you later add services on which your application relies (e.g., databases, reverse proxies, load balancers).

Also, make sure that the user you are logging in with is added to the docker group; this can be done on the remote host with:

sudo gpasswd -a ${USER} docker

You need to log out and back in for the change to take effect.
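To confirm the group change after logging back in, you can look for docker in your group list. The snippet below is only a sketch: the function name in_docker_group is hypothetical, and it does nothing more than inspect a space-separated group list such as the output of id -nG.

```shell
# Hypothetical helper: succeed if a space-separated group list contains "docker".
in_docker_group() {
  case " $1 " in
    *" docker "*) return 0 ;;
    *) return 1 ;;
  esac
}

# On the remote host, feed it the groups of the current user.
if in_docker_group "$(id -nG)"; then
  echo "docker group: ok"
else
  echo "docker group: missing (log out and back in, then retry)"
fi
```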

Building and pushing the image with Travis

In this step, you will modify your existing Travis workflow in order to push the image we’ve built and tested to the hub. To do so, Travis will need to access the hub with your account. Let’s add an encrypted version of your credentials to your .travis.yml file with:

travis encrypt DOCKER_HUB_EMAIL=<email> --add

travis encrypt DOCKER_HUB_USERNAME=<username> --add

travis encrypt DOCKER_HUB_PASSWORD=<password> --add

We can now leverage the tag and push features of the Docker engine by simply adding the following lines to the script part:

- docker tag flask-demo-app:latest $DOCKER_HUB_USERNAME/flask-demo-app:production

- docker push $DOCKER_HUB_USERNAME/flask-demo-app:production

This will create an image tagged “production” and ready to download from your Docker Hub account. Now, let’s move on to the deployment part.
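Before moving on, one caveat about the script section. As far as the Travis documentation describes it, a failing command in the script phase marks the build as failed but does not stop the remaining script commands from running, so the push could still happen after a failed test run. A minimal sketch of one way to guard against that follows; the function name run_then_publish is hypothetical and both arguments are placeholder command strings.

```shell
# Hypothetical helper: run the test command and publish only if it succeeds.
# $1: test command string, $2: publish command string (both placeholders).
run_then_publish() {
  test_cmd=$1
  publish_cmd=$2
  if sh -c "$test_cmd"; then
    sh -c "$publish_cmd"
  else
    echo "tests failed, skipping publish" >&2
    return 1
  fi
}
```

In the script section this could be called with the docker exec test command as the first argument and the docker tag and docker push commands, joined with &&, as the second.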

Automatic deployment with Travis

We will use Docker Compose to specify which image should be deployed on your remote host, and how. Create a docker-compose.yml file at the root of your project containing the following text:

    version: '2'
    services:
      app:
        image: <your_docker_hub_id>/<your_project_name>:production
        ports:
          - 80:80

Send this file to your production host with scp:

scp docker-compose.yml ubuntu@host:

Now that docker-compose is set up on your remote host, let’s see how you can prepare Travis to run it automatically each time your application is built and tested successfully.

The general idea is that you will add some build commands in the Travis instructions that will connect to your remote host via ssh and run docker-compose to update your application to its latest available version.

For that purpose, you will create a special ssh key that will be used only by Travis. The user using this key will be allowed to run only one script named deploy.sh, which calls several docker-compose commands in a row.

Create a deploy.sh file with the following content:

#!/bin/sh

docker-compose down

docker-compose pull

docker-compose up -d

Make the file executable and send it to your host with:

chmod +x ./deploy.sh

scp deploy.sh ubuntu@host:
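If you want the script to stop at the first failing step, a slightly stricter variant is sketched below. It is an illustration under assumptions, not the script used in this series: the COMPOSE variable is a hypothetical override hook, and pulling before stopping the containers is a design choice meant to shorten downtime.

```shell
#!/bin/sh
# Sketch of a stricter deploy.sh. COMPOSE is an assumed override so the
# sequence can be dry-run, for example with COMPOSE=echo.
COMPOSE=${COMPOSE:-docker-compose}

deploy() {
  # Pull first, so the running container keeps serving during the download.
  "$COMPOSE" pull || return 1
  # Stop the old containers only once the new image is available locally.
  "$COMPOSE" down || return 1
  # Start the application again in the background.
  "$COMPOSE" up -d
}
```

The block only defines the function; a final line calling deploy is needed for the script to act. With that line in place, setting COMPOSE=echo prints the three sub-commands instead of executing them.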

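Create the deploy key in your repository directory with the following command, leaving the passphrase empty so that Travis can use the key non-interactively: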

ssh-keygen -f deploy_key

Copy the output of the following command to your clipboard:

echo "command=\"./deploy.sh\",no-port-forwarding,no-agent-forwarding,no-pty $(cat ./deploy_key.pub)"

Connect to your host and append this output to the .ssh/authorized_keys file of your user. You should end up running a command similar to this one:

echo 'command="./deploy.sh",no-port-forwarding,no-agent-forwarding,no-pty ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABAQC/OAw[...]kQ728t3jxPPiFX' >> ~/.ssh/authorized_keys

This will make sure the only command allowed for the user connecting with the deploy key is our deployment script.
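The quoting around the forced command is easy to get wrong when the line is assembled by hand. As a small sketch, the entry can be built with printf instead; the function name restricted_key_entry is hypothetical, and the options are the same ones used in this tutorial.

```shell
# Hypothetical helper: print an authorized_keys entry that forces one command.
# $1: command to force, $2: public key line (the contents of deploy_key.pub)
restricted_key_entry() {
  printf 'command="%s",no-port-forwarding,no-agent-forwarding,no-pty %s\n' "$1" "$2"
}
```

On your workstation, calling it with ./deploy.sh and "$(cat ./deploy_key.pub)" as arguments prints the line to append to ~/.ssh/authorized_keys on the host.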

You can test that everything is in order by running the script once with the deploy key:

ssh -i deploy_key <your_user>@<your_remote_host_ip> ./deploy.sh

Now that you have tested your deployment script, let’s see how you can have Travis run it each time the tests are successful.

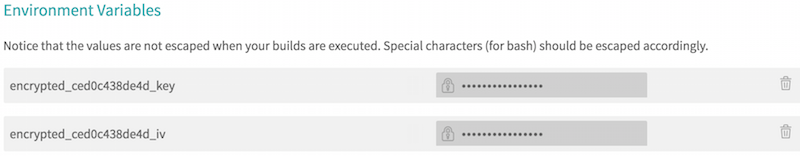

First, let’s encrypt the deployment key (necessary because you DO NOT want any unencrypted private key in your repository) with:

travis encrypt-file ./deploy_key --add

Note the use of the –add option that will help you by adding the decryption command in your travis file. Refer to the Travis documentation on encryption to learn more.

Add it to the project with:

git add deploy_key.enc

git commit -m "Adding enrypted deploy key" deploy_key.enc

git push

You should now be able to see your encrypted deploy_key in your projects settings on Travis-ci:

Finally, add the following section to your .travis.yml file (take care to update your remote host IP accordingly):

    deploy:
      provider: script
      skip_cleanup: true
      script: chmod 600 deploy_key && ssh -o StrictHostKeyChecking=no -i deploy_key ubuntu@<your_remote_host_ip> ./deploy.sh
      on:
        branch: master

Commit and push your change:

git commit -m "Added deployment instructions" .travis.yml

git push

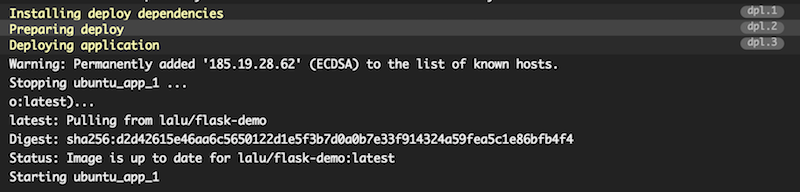

Head to your Travic-ci dashboard to monitor your build, you should see a build output similar to this one:

Your build has been deployed to your remote host! You can also verify this by running docker ps on your host and checking the STATUS column, which should give you the uptime of the app container:

    ubuntu@demo-flask:~$ docker ps
    CONTAINER ID   IMAGE                    COMMAND            CREATED       STATUS          PORTS                NAMES
    ae1797d92bf8   lalu/flask-demo:latest   "/entrypoint.sh"   3 hours ago   Up 10 minutes   0.0.0.0:80->80/tcp   ubuntu_app_1
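The same check can be scripted if you would rather not read the table by eye. The sketch below only inspects a status string; the function name container_is_up is hypothetical.

```shell
# Hypothetical helper: succeed if a docker ps status string reports a
# running container, i.e. it starts with "Up".
container_is_up() {
  case "$1" in
    Up*) return 0 ;;
    *) return 1 ;;
  esac
}
```

On the host, the status string can be obtained with docker ps --filter name=ubuntu_app_1 --format '{{.Status}}', using the container name shown in the output above.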

To sum it up, here is what your final .travis.yml file should look like:

    sudo: required
    language: python
    services:
      - docker
    before_install:
      - docker login --email=$DOCKER_HUB_EMAIL --username=$DOCKER_HUB_USERNAME --password=$DOCKER_HUB_PASSWORD
      - openssl aes-256-cbc -K $encrypted_ced0c438de4d_key -iv $encrypted_ced0c438de4d_iv -in deploy_key.enc -out ./deploy_key -d
      - docker build -t flask-demo-app .
      - docker run -d --name app flask-demo-app
      - docker ps -a
    script:
      - docker exec app python -m unittest discover
      - docker tag flask-demo-app:latest $DOCKER_HUB_USERNAME/flask-demo-app:production
      - docker push $DOCKER_HUB_USERNAME/flask-demo-app:production
    after_script:
      - docker rm -f app
    deploy:
      provider: script
      skip_cleanup: true
      script: chmod 600 deploy_key && ssh -o StrictHostKeyChecking=no -i ./deploy_key ubuntu@demo-flask.buffenoir.tech './deploy.sh'
      on:
        branch: master
    env:
      global:
        - secure: DCNxizK[...]pygQ=
        - secure: cnpkOl9[...]dHKc=
        - secure: wy5+mu0[...]MqvQ=

Et voilà! Each time you push code to your repository that passes your set of tests, it will also be deployed to your production host.

Conclusion

Of course, the example application showcased in this series is very minimalistic. But it should be easy to modify the compose file to add, for example, some databases and proxies. Also, the security could be greatly improved by using a private installation of Travis. Last but not least, the workflow should be customized to support different branches and tags according to your environments (dev, staging, production, etc.).
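As a starting point for that last customization, the image tag could be derived from the branch being built, which Travis exposes as the TRAVIS_BRANCH environment variable. The mapping below is purely illustrative: the function name tag_for_branch and the staging and dev names are assumptions, and only the master to production pairing comes from this tutorial.

```shell
# Hypothetical mapping from a git branch name to a Docker image tag.
tag_for_branch() {
  case "$1" in
    master)  echo production ;;
    staging) echo staging ;;
    *)       echo dev ;;
  esac
}
```

In the script section, the tag and push commands could then use $(tag_for_branch "$TRAVIS_BRANCH") in place of the fixed production tag.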

Read previous articles:

Integrating Docker Hub In Your Application Build Process

How to Automate Web Application Testing With Docker and Travis